Micron Reports on GDDR5X Dev Progress - Volume Production This Summer

by Anton Shilov on February 9, 2016 11:30 AM EST

Engineers from Micron Development Center in Munich (also known as the Graphics DRAM Design Center) are well known around the industry for their contributions to the development of multiple graphics memory standards, including GDDR4 and GDDR5. The engineers from MDC also played a key role in the development of GDDR5X memory, which is expected to be used on some of the upcoming video cards. Micron disclosed the first details about GDDR5X in September last year, publicizing the existence of the standard ahead of its JEDEC ratification and offering a brief summary of what to expect. Since then the company has been quiet about its progress with GDDR5X, but in a new blog post published this week it is touting the results of its first samples and outlining when it expects to enter volume production.

The GDDR5X standard, as you might recall, is largely based on GDDR5 technology, but it features three important improvements: considerably higher data-rates (up to 14 Gbps per pin or potentially even higher), substantially higher capacities (up to 16 Gb), and improved energy efficiency (bandwidth per watt) thanks to 1.35V supply and I/O voltages. To increase performance, GDDR5X uses a new quad data rate (QDR) signaling scheme to transfer more data per clock cycle, which in turn allows it to use a wider 16n prefetch architecture that moves up to 512 bits (64 bytes) per array read or write access. Consequently, GDDR5X promises to double the performance of GDDR5 while consuming similar amounts of power, which is a very ambitious goal.
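The prefetch arithmetic can be made concrete with a few lines of Python. This is a rough sketch under stated assumptions: it assumes a 32-bit (x32) interface per chip and uses 7 Gbps as a representative GDDR5 data-rate for comparison; neither figure comes from Micron's post. The key relationship is that the per-pin data-rate equals the prefetch depth times the internal array frequency.

```python
# Sketch of GDDR5 vs. GDDR5X access arithmetic.
# Assumptions (not from the article): a 32-bit (x32) chip interface and a
# 7 Gbps GDDR5 data-rate used purely for comparison.

CHIP_WIDTH_BITS = 32  # assumed x32 interface per memory chip


def bits_per_access(prefetch: int, width_bits: int = CHIP_WIDTH_BITS) -> int:
    """Bits delivered by one array read or write: prefetch depth x interface width."""
    return prefetch * width_bits


def core_clock_mhz(data_rate_gbps: float, prefetch: int) -> float:
    """Internal array frequency needed to sustain a per-pin data-rate.

    Each array access supplies `prefetch` bits per pin, so the per-pin
    data-rate equals prefetch x array frequency.
    """
    return data_rate_gbps * 1000 / prefetch


def chip_bandwidth_gbytes(data_rate_gbps: float, width_bits: int = CHIP_WIDTH_BITS) -> float:
    """Peak per-chip bandwidth in GB/s: per-pin rate x pins / 8 bits per byte."""
    return data_rate_gbps * width_bits / 8


if __name__ == "__main__":
    for name, prefetch, rate in [("GDDR5", 8, 7.0), ("GDDR5X", 16, 14.0)]:
        bits = bits_per_access(prefetch)
        print(f"{name}: {bits} bits ({bits // 8} bytes) per access, "
              f"array clock {core_clock_mhz(rate, prefetch):.0f} MHz, "
              f"{chip_bandwidth_gbytes(rate):.0f} GB/s per chip")
```

Under these assumptions both memory types run their arrays at the same 875 MHz; doubling the prefetch from 8n to 16n is what doubles the per-pin rate, which is the sense in which GDDR5X aims to double GDDR5 performance without a faster DRAM core.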

In their blog post, Micron is reporting that they already have their first samples back from their fab - this being earlier than expected - with these samples operating at data-rates higher than 13 Gbps in the lab. At present, the company is in the middle of testing its GDDR5X production line and will be sending samples to its partners this spring.

Thanks to a 10% reduction of Vdd/Vddq as well as new features such as per-bank self refresh, hibernate self refresh, partial array self refresh and others, Micron’s 13 Gbps GDDR5X chips do not consume more energy than GDDR5 ICs (integrated circuits): 2–2.5W per component (i.e., 10–30W per graphics card), just as the company promised several weeks ago. And since not all applications need maximum bandwidth, in certain cases using GDDR5X instead of its predecessor should help reduce power consumption.
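The voltage and efficiency figures can be sanity-checked with some quick arithmetic. This sketch assumes the simplified dynamic-power model P ∝ C·V²·f (real DRAM power also has static and refresh components) and a 32-bit interface per chip; the 1.5V GDDR5 supply is implied by the 10% cut to 1.35V rather than stated outright.

```python
# Rough power arithmetic for GDDR5X. Assumptions (not from the article):
# dynamic power scales with V^2 at fixed frequency and capacitance, and each
# chip has a 32-bit (x32) interface.

GDDR5_VDD = 1.5    # volts; implied by a 10% cut down to 1.35 V
GDDR5X_VDD = 1.35  # volts, per the GDDR5X standard


def relative_dynamic_power(v_new: float, v_old: float) -> float:
    """Ratio of dynamic switching power (P ~ C * V^2 * f) at equal C and f."""
    return (v_new / v_old) ** 2


def bandwidth_per_watt(data_rate_gbps: float, chip_watts: float, width_bits: int = 32) -> float:
    """Per-chip peak bandwidth (GB/s) divided by per-chip power (W)."""
    return data_rate_gbps * width_bits / 8 / chip_watts


if __name__ == "__main__":
    cut = 1 - GDDR5X_VDD / GDDR5_VDD
    ratio = relative_dynamic_power(GDDR5X_VDD, GDDR5_VDD)
    print(f"Voltage reduction: {cut:.0%}")
    print(f"Dynamic power at equal clock: {ratio:.2f}x of GDDR5")
    for watts in (2.0, 2.5):
        print(f"13 Gbps chip at {watts} W: {bandwidth_per_watt(13, watts):.1f} GB/s per W")
```

Under this simplified model, the 10% supply cut alone would trim switching power by roughly 19% at the same clock, headroom that helps offset the cost of the higher data-rate.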

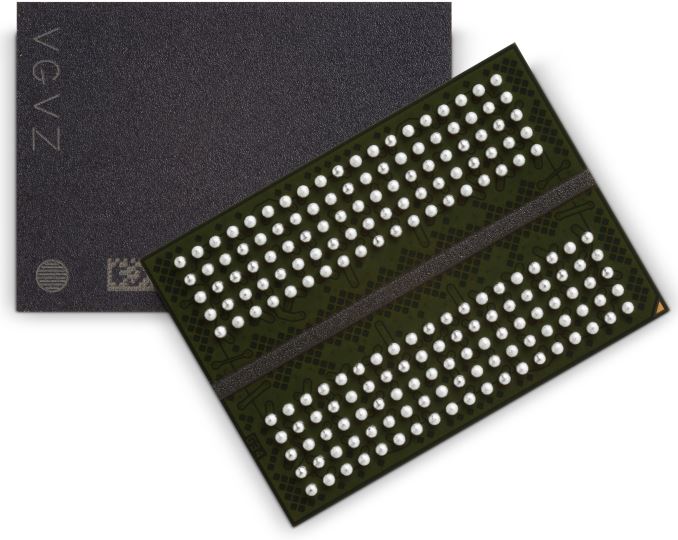

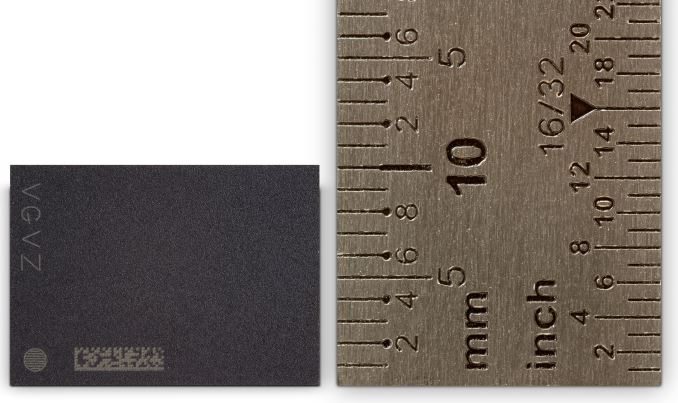

GDDR5X memory chips will come in new packages that are slightly smaller than those of GDDR5 ICs (14×10mm vs. 14×12mm) despite a higher ball count (190-ball BGA vs. 170-ball BGA). According to Micron, denser ball placement, a reduced ball diameter (0.4mm vs. 0.47mm) and a smaller ball pitch (0.65mm vs. 0.8mm) make PCB traces slightly shorter, which should ultimately improve electrical performance and system signal integrity. Given the higher data-rates of GDDR5X’s interface, improved signal integrity is just what the doctor ordered. The GDDR5X package keeps the same 1.1mm height as its predecessor.
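The footprint and ball-density change follows directly from the figures Micron quoted. This small sketch uses only those numbers and treats each package as a simple rectangle, ignoring layout details such as depopulated ball areas.

```python
# Package footprint comparison using the dimensions and ball counts quoted above.
from dataclasses import dataclass


@dataclass
class Package:
    name: str
    width_mm: float
    length_mm: float
    balls: int

    @property
    def area_mm2(self) -> float:
        """Footprint area, treating the package as a plain rectangle."""
        return self.width_mm * self.length_mm

    @property
    def balls_per_mm2(self) -> float:
        """Average ball density over the whole footprint."""
        return self.balls / self.area_mm2


GDDR5 = Package("GDDR5", 14, 12, 170)
GDDR5X = Package("GDDR5X", 14, 10, 190)

if __name__ == "__main__":
    for pkg in (GDDR5, GDDR5X):
        print(f"{pkg.name}: {pkg.area_mm2:.0f} mm^2, {pkg.balls_per_mm2:.2f} balls/mm^2")
    shrink = 1 - GDDR5X.area_mm2 / GDDR5.area_mm2
    print(f"GDDR5X footprint is {shrink:.0%} smaller")
```

By this measure the GDDR5X package is about 17% smaller (140 vs. 168 mm²) while packing roughly a third more balls per mm², which is why the smaller balls and tighter pitch are needed.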

Micron is using its 20 nm memory manufacturing process to make the first-generation 8 Gb GDDR5X chips. The company has been using this process to make commercial DRAM products for several quarters now. As the company refines its fabrication process and the design of the ICs, their yields and data-rate potential should increase. Micron remains optimistic about eventually hitting 16 Gbps data-rates with its GDDR5X chips, but has not disclosed when it expects that to happen.

All of that said, the company has not yet settled on its GDDR5X product lineup, and nobody knows for sure whether commercial chips will hit 14 Gbps this year with the first-generation GDDR5X controllers. Early adopters of new memory technologies tend to be rather conservative. For example, AMD’s Radeon HD 4870 (the world’s first video card to use GDDR5) was equipped with 512 MB of memory running at a 3.6 Gbps data-rate, whereas Qimonda (the company that established Micron’s Graphics DRAM Design Center) offered chips rated at 4.5 Gbps at the time.

The first-gen GDDR5X memory chips from Micron have an 8 Gb capacity, so they will cost more than the 4 Gb chips used on graphics cards today. Moreover, due to the increased pin count, the implementation cost of GDDR5X could be a little higher than that of GDDR5 (i.e., PCBs will get more complex and more expensive). As a result, we don't expect to see GDDR5X showing up in value cards right away; this is a high-performance technology and will likely have a roll-out similar to GDDR5's. At the higher end, however, a video card featuring a 256-bit memory bus would be able to boast 8 GB of memory and 352 GB/s of bandwidth (using 11 Gbps chips).
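The 8 GB and 352 GB/s example can be reproduced with a few lines of arithmetic. This sketch assumes a 32-bit interface per chip (so a 256-bit bus carries eight chips), which the article does not state explicitly; the data-rates looped over are the ones discussed above.

```python
# Card-level capacity and bandwidth from bus width, chip density and data-rate.
# Assumption (not from the article): each chip uses a 32-bit (x32) interface,
# so a 256-bit bus is populated by 256 / 32 = 8 chips.

CHIP_WIDTH_BITS = 32


def card_config(bus_bits: int, chip_gbit: int, data_rate_gbps: float) -> tuple[int, float, float]:
    """Return (chip count, capacity in GB, peak bandwidth in GB/s)."""
    chips = bus_bits // CHIP_WIDTH_BITS
    capacity_gbytes = chips * chip_gbit / 8
    bandwidth_gbytes = bus_bits * data_rate_gbps / 8
    return chips, capacity_gbytes, bandwidth_gbytes


def required_data_rate(bus_bits: int, target_gbytes: float) -> float:
    """Per-pin data-rate (Gbps) needed to reach a target bandwidth on a given bus."""
    return target_gbytes * 8 / bus_bits


if __name__ == "__main__":
    print(f"352 GB/s on 256 bits needs {required_data_rate(256, 352):.0f} Gbps per pin")
    for rate in (11, 13, 14, 16):
        chips, cap, bw = card_config(256, 8, rate)
        print(f"{rate:>2} Gbps: {chips} x 8 Gb chips = {cap:.0f} GB, {bw:.0f} GB/s")
```

Capacity depends only on chip count and density, while bandwidth scales linearly with the per-pin rate; the same 256-bit card would reach 512 GB/s if Micron eventually hits its 16 Gbps target.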

Finally, Micron has also announced in their blog post that they intend to commence high-volume production of GDDR5X chips in mid-2016, or sometime in the summer. It is unknown precisely when the first graphics cards featuring the new type of memory are set to hit the market, but given the timing it looks like this will happen in 2016.

Source: Micron

17 Comments


eddman - Tuesday, February 9, 2016

1080/1070?! No way. 1800, 1700, 1600, etc., or they might even skip it and go to 2X00.

IMO:

GP100/110: HBM2

GP104: HBM2 and/or GDDR5X

GP106: GDDR5

GP107/8: some GDDR5, mostly DDR4; maybe DDR3 on the lowest of the low cards.

Samus - Wednesday, February 10, 2016

I don't think they will phase out GDDR5 at all. There are dozens of architectures still built for it, and considering all the reworking that must be done to set up a PCB for GDDR5X, I suspect only new products will use it. That means all current architectures like Maxwell and GCN 1.1/1.2 will continue on GDDR5, and they will be around for at least the next few years in mid-range products.

Don't forget NVidia is still producing Kepler and Fermi cards, many years after they were architecturally replaced by Maxwell 1/2.

nightbringer57 - Wednesday, February 10, 2016

GDDR3 disappeared quite a while ago. Most entry-level cards (and older mid-level ones) have been using DDR3 instead, which has become the de facto standard in computers and mobile devices (DDR3/DDR3L/whatever derivatives are similar, but GDDR3 actually is a bit different).

So you can expect a shift from DDR3 to DDR4 in entry- and mid-level models as the relative prices of DDR3 and DDR4 shift around, although due to TTM and other issues, you won't see a complete shift to DDR4 until a little while after it has become cheaper.

eeessttaa - Tuesday, February 9, 2016

I was wondering if someone from AnandTech could write an article comparing the benefits and disadvantages of GDDR5X and HBM2. Love your guys' articles, keep it up!

A5 - Tuesday, February 9, 2016

From an end-user perspective, it is fairly simple.

HBM2 is faster, enables smaller card designs, and costs more, while GDDR5X is an improvement over current GDDR5 in every way while being cheaper and slower than HBM2.

Valantar - Wednesday, February 10, 2016

Also, HBM greatly reduces power consumption, while GDDR5X only reduces it very slightly.

Maxbad - Sunday, February 14, 2016

Seems like GDDR5X will not be suitable for value-oriented cards, and they will be stuck with GDDR5 for quite some time. For high-end cards, HBM2 offers too many advantages over GDDR5/X in terms of size and speed, and prices of the technology are only bound to go lower with time. On the other hand, GDDR5 still offers good enough bandwidth for modern games, as shown by the GTX 980 Ti, and with the die shrink, value-oriented cards can offer more than acceptable performance with the old memory technology. I don't think that AMD and NVidia will spend resources implementing GDDR5X if they can get away with skipping it and push for a long-term replacement of GDDR5 with HBM2.