The AMD Radeon RX 480 Preview: Polaris Makes Its Mainstream Mark

by Ryan Smith on June 29, 2016 9:00 AM EST

The Polaris Architecture: In Brief

For today’s preview I’m going to quickly hit the highlights of the Polaris architecture.

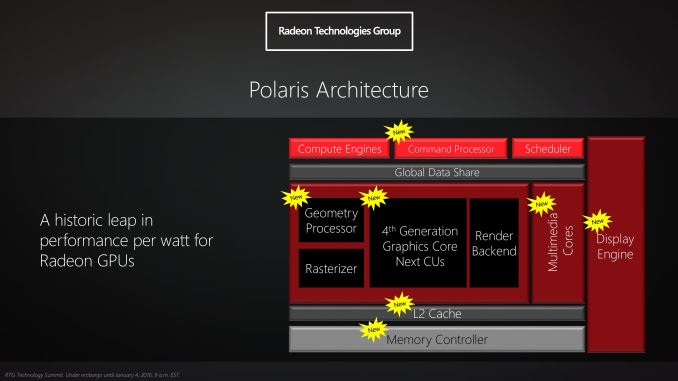

In their announcement of the architecture this year, AMD laid out a basic overview of what components of the GPU would see major updates with Polaris. Polaris is not a complete overhaul of past AMD designs, but AMD has combined targeted performance upgrades with a chip-wide energy efficiency upgrade. As a result Polaris is a mix of old and new, and a lot more efficient in the process.

At its heart, Polaris is based on AMD’s 4th generation Graphics Core Next architecture (GCN 4). GCN 4 is not significantly different from GCN 1.2 (Tonga/Fiji), and in fact GCN 4’s ISA is identical to that of GCN 1.2. So everything we see here today comes not from broad architectural changes, but from low-level microarchitectural changes that improve how instructions execute under the hood.

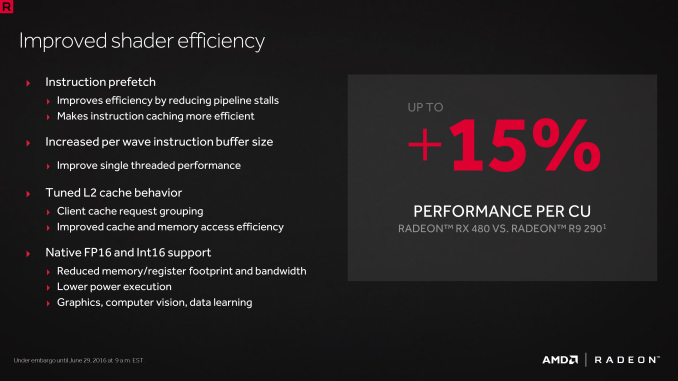

Overall AMD is claiming that GCN 4 (via RX 480) offers a 15% improvement in shader efficiency over GCN 1.1 (R9 290). This comes from two changes: instruction prefetching and a larger instruction buffer. In the case of the former, GCN 4 can, with the driver’s assistance, attempt to prefetch future instructions, something GCN 1.x could not do. When done correctly, this reduces or eliminates the need for a wave to stall while waiting on an instruction fetch, keeping the CU fed and active more often. Meanwhile the per-wave instruction buffer (which is separate from the register file) has been increased from 12 DWORDs to 16 DWORDs, allowing more instructions to be buffered and, according to AMD, improving single-threaded performance.

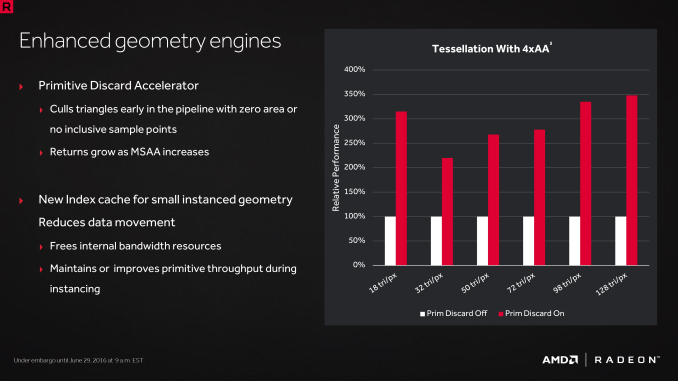

Outside of the shader cores themselves, AMD has also made enhancements to the graphics front-end for Polaris. AMD’s latest architecture integrates what AMD calls a Primitive Discard Accelerator. True to its name, the job of the discard accelerator is to remove (cull) triangles that are too small to be used, and to do so early enough in the rendering pipeline that the rest of the GPU is spared from having to deal with these unnecessary triangles. Degenerate triangles are culled before they even hit the vertex shader, while small triangles are culled a bit later, after the vertex shader but before they hit the rasterizer. There’s no visual quality impact to this (only triangles that can’t be seen/rendered are culled), and as claimed by AMD, the benefits of the discard accelerator increase with MSAA levels, as MSAA otherwise exacerbates the small triangle problem.
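
To make the two culling cases concrete, here is a minimal Python sketch of the general idea. It is not AMD's hardware logic, which isn't described in detail; the function names are hypothetical, and it assumes a single sample at each pixel center (no MSAA) with vertices already in screen-space pixel coordinates.

```python
import math

def signed_area2(a, b, c):
    """Twice the signed area of triangle (a, b, c); each vertex is an (x, y) pair."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1])

def span_hits_center(lo, hi):
    """True if the interval [lo, hi] contains a pixel center (an integer + 0.5)."""
    return math.ceil(lo - 0.5) <= math.floor(hi - 0.5)

def should_cull(a, b, c):
    """Return True for triangles that cannot produce any pixel."""
    # Degenerate case: collinear or coincident vertices enclose zero area.
    if signed_area2(a, b, c) == 0:
        return True
    xs = (a[0], b[0], c[0])
    ys = (a[1], b[1], c[1])
    # Small-triangle case: the bounding box slips between sample points
    # on at least one axis, so no pixel center can fall inside it.
    return not (span_hits_center(min(xs), max(xs))
                and span_hits_center(min(ys), max(ys)))

print(should_cull((0, 0), (1, 1), (2, 2)))              # True: zero area
print(should_cull((0.6, 0.6), (0.9, 0.6), (0.6, 0.9)))  # True: misses every pixel center
print(should_cull((0, 0), (4, 0), (0, 4)))              # False: covers pixel centers
```

The bounding-box test is conservative: it only discards triangles that provably touch no sample, which is why culling of this kind has no effect on the final image.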

Along these lines, Polaris also implements a new index cache, again meant to improve geometry performance. The index cache is designed specifically to accelerate geometry instancing performance, allowing small instanced geometry to stay close by in the cache, avoiding the power and bandwidth costs of shuffling this data around to other caches and VRAM.

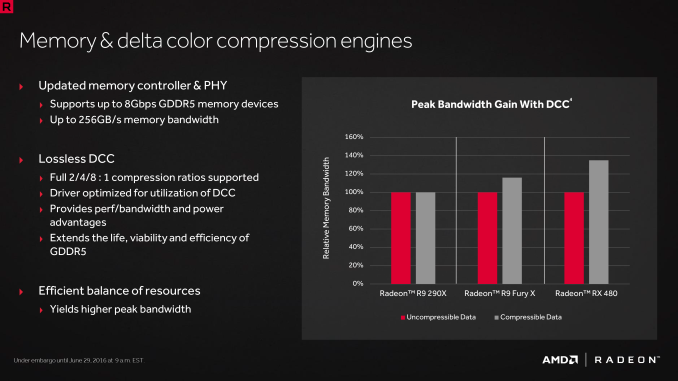

Finally, at the back-end of the GPU, the ROP/L2/memory controller partitions have also received their own updates. Chief among these is that Polaris implements the next generation of AMD’s delta color compression technology, which uses pattern matching to reduce the size and resulting memory bandwidth needs of frame buffers and render targets. The result is a de facto increase in available memory bandwidth and a decrease in power consumption, at least so long as the buffer is compressible. With Polaris, AMD supports a larger pattern library to better compress more buffers more often, improving on GCN 1.2 color compression by around 17%.
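
As a rough illustration of why delta compression only pays off on compressible buffers, the Python sketch below stores a tile as one base value plus small per-pixel differences. This is the generic textbook idea, not AMD's actual format or pattern library; the function names, the single 8-bit channel, and the 4-bit delta width are all assumptions made for the example, and a real design would also need metadata marking which tiles are compressed.

```python
def compress_tile(pixels, delta_bits=4):
    """Try to encode a non-empty tile as (base, deltas); return None if it does not fit."""
    base = pixels[0]
    lo = -(1 << (delta_bits - 1))      # smallest signed delta that fits
    hi = (1 << (delta_bits - 1)) - 1   # largest signed delta that fits
    deltas = [p - base for p in pixels[1:]]
    if all(lo <= d <= hi for d in deltas):
        return base, deltas
    return None  # incompressible: the tile is stored as-is

def decompress_tile(base, deltas):
    """Rebuild the original pixel values from the base and deltas."""
    return [base] + [base + d for d in deltas]

def tile_bits(pixels, pixel_bits=8, delta_bits=4):
    """Bits needed to store the tile, compressed when possible."""
    if compress_tile(pixels, delta_bits) is None:
        return len(pixels) * pixel_bits
    return pixel_bits + (len(pixels) - 1) * delta_bits

smooth = [100, 101, 99, 102]   # neighboring pixels with similar values
noisy = [0, 200, 13, 90]       # no usable pattern

print(tile_bits(smooth))  # 20 bits instead of 32
print(tile_bits(noisy))   # 32 bits, no savings
```

The scheme is lossless: a tile either round-trips exactly through `decompress_tile` or is left uncompressed, so the savings depend entirely on how much of the buffer looks like `smooth` rather than `noisy`.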

Otherwise we’ve already covered the increased L2 cache size, which is now at 2MB. Paired with this is AMD’s latest generation memory controller, which can now officially go to 8Gbps, and even a bit more than that when overclocking.
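
For a sense of scale, the per-pin data rate converts to peak bandwidth with simple arithmetic. The short Python sketch below assumes the RX 480's 256-bit memory bus, which comes from the card's published specifications and is not stated on this page; the function name is hypothetical.

```python
def peak_bandwidth_gb_per_s(data_rate_gbps, bus_width_bits):
    """Peak memory bandwidth in GB/s from per-pin data rate and bus width."""
    return data_rate_gbps * bus_width_bits / 8  # 8 bits per byte

# Assumed 256-bit bus at the official 8Gbps data rate.
print(peak_bandwidth_gb_per_s(8, 256))  # 256.0
```

This is a theoretical peak; it does not account for color compression or real-world memory access patterns.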

449 Comments

cjpp78 - Thursday, July 7, 2016 - link

weird, my reference xfx Rx 480 black edition came stock overclocked to 1328mhz. I've seen others clock reference to 1350+. Its not a good overclocker but it will overclock

Demibolt - Friday, July 1, 2016 - link

Not here to argue, just fact checking. Currently, there are brand new GTX 970 (OC versions, not that it matters) available for $240.

hans_ober - Wednesday, June 29, 2016 - link

A bit disappointing, Perf/W is around GTX 980/970 levels = Maxwell. Not near Pascal. For the price? It's a good deal, but Perf/$ isn't as high as it was hyped to be.

PeckingOrder - Wednesday, June 29, 2016 - link

It's a terrible product. Look at the temps. http://media.bestofmicro.com/C/E/591422/original/0...

figus77 - Wednesday, June 29, 2016 - link

No one will buy one with the stock cooler. So, why bother for it?

Namisecond - Wednesday, June 29, 2016 - link

I was going to pick one up today (stock model) at my local microcenter, but the temps and noise is making me pause until I get more verification on that...might as well wait for the 1060/ti that might be announced soon...

TimAhKin - Wednesday, June 29, 2016 - link

And the card is not meant for 4K. No card stays cool or at decent temps like 60 degrees, in 4K.

JoeyJoJo123 - Wednesday, June 29, 2016 - link

My GTX 970 does in 4K on Warframe. I disable AA (not need on 4k 24" screen) and other post-processing and lighting effects which get in the way of identifying enemies and allies quickly and efficiently.

Speak for yourself. I'm getting tired of this whole "current gen video cardz can't HANDLE 4k!". At least define 4k as 4K resolution at maxed out settings on current gen AAA game titles, because at that point, you'd be correct. But just saying 4k can't be handled period is completely stupid and false.

eek2121 - Wednesday, June 29, 2016 - link

People do that all the time. When Xbox Scorpio was announced everyone was saying that there was no way it could do 4K, yet few are paying attention to the fact that xbox one games are designed around a slower version of the r7 260x. As an example, there is no doubt in my mind that the RX480 could easily scale these games to 4k.

TimAhKin - Wednesday, June 29, 2016 - link

Do you understand my comment? This card (480) is not meant for 4K gaming and that temperature from the above picture is normal. I get 85-90 degrees as well in 4K.