Hands-on & More With Huawei's Mate 10 and Mate 10 Pro: Kirin 970 Meets Artificial Intelligence

by Ian Cutress on October 16, 2017 9:00 AM EST

Artificial Intelligence

For readers who are not too familiar with the new wave of neural networks and artificial intelligence, there are essentially two main stages to consider: training and inference.

Training involves putting the neural network in front of a lot of data, and letting the network improve its decision-making capabilities with a helpful hand now and again. This process is often very computationally expensive, and done in data centers.

Inference is actually using the network to do something once it has been trained. For a network that is trained to recognize pictures of flowers and determine their species, for example, the ‘inference’ part is showing the network a new picture and having it calculate what that picture is most likely to be. The accuracy of the neural network is its ability to succeed in inference testing, and the typical way of making a network better at inference is to train it more.

The mathematics behind training and inference are pretty much identical, but on different scales. There are methods and tricks, such as reducing the precision of the numbers flowing through the calculations, that can make tradeoffs in memory consumption, power, speed and accuracy.
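To make the reduced-precision tradeoff concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. The weight values and function names are illustrative assumptions, not anything Huawei has described; the point is only that storing a weight in one byte instead of four saves memory at the cost of a small, bounded rounding error.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(q_values, scale):
    """Recover approximate floats from the 8-bit integers."""
    return [q * scale for q in q_values]


# Illustrative weights only (an assumption for this sketch).
weights = [0.82, -1.27, 0.05, 0.4]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Worst-case rounding error is at most half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))

# A 32-bit float takes 4 bytes per weight; an 8-bit integer takes 1.
bytes_fp32 = 4 * len(weights)
bytes_int8 = 1 * len(weights)

print(q, max_error, bytes_fp32, bytes_int8)
```

Whether the lost precision matters depends on the network; that is the accuracy side of the tradeoff.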

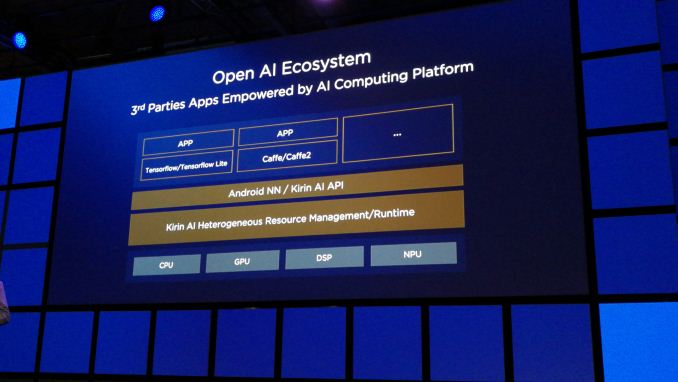

Huawei’s NPU is an engine designed for inference. The idea is that software developers, using either Android’s Neural Network APIs or Huawei’s own Kirin AI APIs, can apply their own pre-trained networks to the NPU and then use it for their software. This is basically the same as how we run video games on a smartphone: the developers use a common API (OpenGL, Vulkan) that leverages the hardware underneath.

The fact that Huawei is stating that the NPU supports Android NN APIs is going to be a plus. If Huawei had locked it down to its own API (which it will likely use for first-party apps), then as many analysts had predicted, it would have died with Huawei. By opening it up to a global platform, we are more likely to see NPU accelerated apps come to the Play Store, possibly even as ubiquitously as video games do now. Much like video games however, we are likely to see different levels of AI performance with different hardware, so some features may require substantial hardware to get on board.

What Huawei will have a problem with regarding the AI feature set is marketing. Saying that the smartphone supports Artificial Intelligence, or that it is an ‘AI-enabled’ smartphone is not going to be the primary reason for buying the device. Most common users will not understand (or care) if a device is AI capable, much in the same way that people barely discuss the CPU or GPU in a smartphone. This is AnandTech, so of course we will discuss it, but the reality is that most buyers do not care.

The only way that Huawei will be able to mass market such a feature is through the different user experiences it enables.

The First AI Applications for the Mate 10

Out of the gate, Huawei is supporting two primary applications that use AI – one of its own, and a major collaboration with Microsoft. I’ll start with the latter, as it is a pretty big deal.

With the Mate 10 and Mate 10 Pro, Huawei has collaborated with Microsoft to enable offline language translation using neural networks. This will be with the Microsoft Translator app, and consists of two main portions: word detection and then the translation itself. Normally both of these features would happen in the cloud and require a data connection, but this is the next evolution of the idea. It also leans on the idea of moving more of the functionality that commonly exists in the cloud (better compute, but ‘higher’ costs and data required) to the device or ‘edge’ (which is power and compute limited, but ‘free’). Now theoretically this could have been done offline many years ago, but Huawei is citing the use of the NPU to allow for it to be done more quickly and with less power consumed.

The tie-in with Microsoft has potential, especially if it works well. I have personally used tools like Google Translate to converse in the past, and it kind of worked. Having something like that work offline is a plus; the only questions are how much storage space is required and how accurate it will be. Both questions might be answered during the presentations today announcing the device, although it will be interesting to hear what metrics they will use.

The second application to get the AI treatment is in photography. This uses image and scene detection to apply one of fourteen presets to get ‘the best’ photo. When this feature was originally described, it sounded like the AI was going to be the ultimate pro photographer, changing all the pro-mode settings based on what it thought was right. Instead, it distils the scene down to one of fourteen potential scenes and runs a predefined script for the settings to use.
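The 'classify, then run a predefined script' flow described above can be sketched in a few lines. Everything specific here is hypothetical: Huawei has not published the fourteen scene names, the settings behind them, or any confidence threshold, so the labels, values, and `pick_preset` helper below are stand-ins for illustration only.

```python
# Hypothetical presets: the real fourteen scenes and their settings are not public.
PRESETS = {
    "food": {"saturation": 1.2, "sharpness": 1.1},
    "night": {"exposure_bias": 1.0, "noise_reduction": "high"},
    "portrait": {"background_blur": True, "saturation": 1.0},
}
DEFAULT_SETTINGS = {"saturation": 1.0}


def pick_preset(scores, min_confidence=0.5):
    """Choose the preset for the highest-scoring scene label.

    `scores` maps scene labels to classifier confidences. If the best
    label is unknown or not confident enough, fall back to plain auto mode.
    The 0.5 threshold is an assumption made for this sketch.
    """
    label = max(scores, key=scores.get)
    if scores[label] < min_confidence or label not in PRESETS:
        return "auto", DEFAULT_SETTINGS
    return label, PRESETS[label]


# Pretend output of a scene-recognition network for one camera frame.
frame_scores = {"food": 0.08, "night": 0.81, "portrait": 0.11}
scene, settings = pick_preset(frame_scores)
print(scene, settings)
```

The network only makes the first decision (which scene); the settings themselves are a fixed lookup, which is why this is closer to automated scene modes than to an 'AI photographer'.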

Nominally this isn’t a major ‘wow’ use-case for AI. It becomes a marketable feature for scene detection that, if enabled automatically, could significantly help the quality of auto-mode photography. But that is the kind of feature that users will experience without knowing AI is behind it: the minute Huawei starts to advertise it with the AI moniker, it is likely to get overcomplicated fast for the general public.

An additional side note: Huawei states that it is using the AI engine for two other parts of the device under the hood. The first is in battery power management: recognizing which parts of the day typically need more power and adjusting the DVFS curve in response. The idea is that the device can work out what power can be expended and when, in order to provide a full day of use on a single charge. Personally, I’m not too hopeful about this, given the light-touch explanation, but the results will be interesting to see.

The second under-the-hood addition is in general performance characteristics. In the last generation Huawei promoted that it had tested its hardware and software to provide 18 months of consistent performance. The details were (annoyingly) light but it related to memory fragmentation (shouldn’t be an issue with DRAM), storage fragmentation (shouldn’t be an issue with NAND) and other functionality. When we pressed Huawei for more details, none were forthcoming. What the AI hardware inside the chip should do, according to Huawei, is enable the second generation of this feature, leading to performance retention.

Killer Applications for AI, and Application Lag

One of the problems Huawei has is that while these use cases are, in general, good, none of them is a killer application for AI. The translate feature is impressive; however, as we move into a better-connected environment, it might be better to offload that sort of compute to servers if that will be more accurate. The problem AI for smartphones has is that it is a new concept: with both Huawei and Apple announcing hardware dedicated to running neural networks, and Samsung not far behind, there is going to be some form of application lag between implementing the hardware and getting the software right. Ultimately it ends up being a big gamble on the part of the semiconductor designers to dedicate so much silicon to it.

When we consider how app developers will approach AI, there are two main directions. Firstly, we start with applications that already exist adding AI to their software. They have a hammer and are looking for a nail: the first ones out of the gate publicly are likely to be the social media apps, though I would not expect professional apps to be too far behind. The second segment of developers will be those that are creating new apps with the AI requirement – their application would not work otherwise. Part of the issue here is having an application idea that is AI limited in the first place, and then having a system that falls back to the GPU (or CPU) if dedicated neural network hardware is not present.
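The fallback behaviour mentioned above amounts to a simple preference order. The sketch below is not the Android Neural Networks API or Huawei's Kirin AI API; the backend names and the `choose_backend` helper are hypothetical, and a real app would ask the platform what hardware is present rather than pass in a set by hand.

```python
# Preferred order: dedicated neural hardware first, then GPU, then CPU.
PREFERENCE = ("npu", "gpu", "cpu")


def choose_backend(available):
    """Return the most preferred backend present on this device.

    `available` is a set of backend names reported by the device
    (hypothetical names, used only for this sketch).
    """
    for name in PREFERENCE:
        if name in available:
            return name
    raise RuntimeError("no compute backend available")


# A phone with an NPU, and an older phone without one.
print(choose_backend({"npu", "gpu", "cpu"}))
print(choose_backend({"gpu", "cpu"}))
```

The selection logic is trivial; the hard part for developers is that the same network may run many times slower on the fallback path, so the feature has to degrade gracefully.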

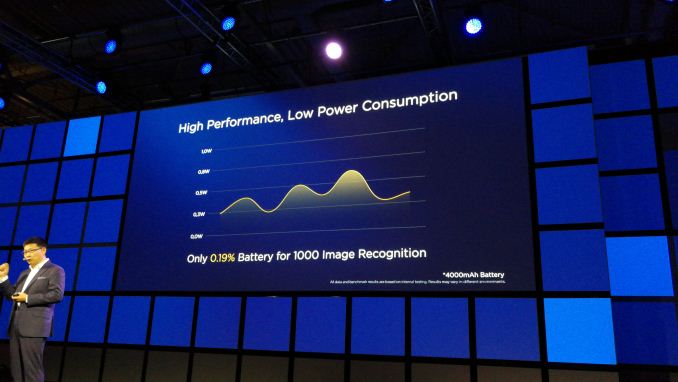

Then comes the performance discussion. Huawei was keen to point out that their solution is capable of running an image recognition network at 2000 images per minute, around double that of the nearest competition. While that is an interesting metric, it ultimately becomes a synthetic figure – no-one is going to need 2000 images identified every minute. Perhaps this can extend to video, e.g. real-time processing and image recognition combined with audio transcription for later searching, but the application that does that is not currently on smartphones (or if one exists, is not using the new AI hardware).
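As a rough sanity check on what 2000 images per minute means, the arithmetic below converts it to a per-image time and compares it with video. The 30 frames-per-second video rate and the one-frame-at-a-time assumption (no batching, so time per image is just the inverse of throughput) are mine, not Huawei's.

```python
IMAGES_PER_MINUTE = 2000  # Huawei's quoted figure

images_per_second = IMAGES_PER_MINUTE / 60   # about 33.3 images per second
ms_per_image = 1000 / images_per_second      # average time budget per image

VIDEO_FPS = 30                               # assumed video frame rate
frames_per_minute = VIDEO_FPS * 60           # frames produced by one minute of video

# Could the quoted rate classify every frame of such a video as it arrives?
keeps_up_with_video = IMAGES_PER_MINUTE >= frames_per_minute

print(round(images_per_second, 1), round(ms_per_image, 1),
      frames_per_minute, keeps_up_with_video)
```

Under those assumptions the quoted rate is just enough to classify every frame of 30 fps video in real time, which is one of the few scenarios where the headline number would translate into something a user could notice.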

One of the questions I put to Huawei and HiSilicon is around performance: if Huawei is advertising up to 2x the raw performance in FLOPS compared to say, Apple, how as a user is that going to affect my day-to-day use with the hardware? How is that extra horsepower going to generate new experiences that the Apple hardware cannot? Not only did Huawei not have a good answer for this, they didn’t have an answer at all. The only answer I can think of that might be appropriate is that the ideas required haven’t been thought of yet. There’s that Henry Ford quote about ‘if you ask the customer, all they want is faster horses’ which means that sometimes a paradigm shift is needed to generate new experiences; a new technology needs its killer application. Then comes the issue about the lag of app development behind these new features.

The second question to Huawei on this was about benchmarking. We already extensively benchmark the CPU and the GPU, and now we are going to have to test the NPU. Currently no real benchmarks exist, and the applications using the AI hardware are not sufficient to get an accurate comparison of the hardware available, because the feature either works or it does not. Again, Huawei didn’t have a good answer for this, outside their 2000 images/minute metric. To a certain extent, they don’t need an answer for this right now – the raw appeal of AI dedicated hardware is the fact that it is new. The newness is the wow factor. The analysis of that factor is something that typically occurs in the second generation. I made it quite clear that as technical reviewers, we would be looking to see how we can benchmark the hardware (if not this generation, then perhaps next generation) and I actively encouraged Huawei to work with the developers of the common industry standard benchmark tools in order to do so. Again, Huawei has given itself a step up by supporting Android’s Neural Network APIs, which should open the hardware up to these developers.

On a final thought, last week at GTC Europe, NVIDIA's keynote mentioned an understated yet interesting application where AI could help: ray tracing. Ray tracing, which provides more realistic scene rendering than polygon modeling, is usually a very computationally intensive task, but the payoff is a large gain in visual fidelity. What NVIDIA showed was AI-assisted ray tracing: predicting the colors of nearby pixels based on the information already computed and then updating as more computation was performed. While true ray tracing for interactive video (and video games) might still be a far-away wish, AI-assisted ray tracing looked like an obvious way to accelerate the problem. Could this be something applied to smartphones? If there is dedicated AI hardware, such as the NPU, it could be a good fit to enable better user experiences.

103 Comments

name99 - Monday, October 16, 2017 - link

Think of AI as a pattern recognition engine. What does that imply? Well for one thing, the engine is only going to see patterns in what it is FED! So what is it being fed?

Obvious possibilities are images (and we know how that's working out) and audio (so speech recognition+translation, and again we know how that's working out). A similar obvious possibility could be stylus input and so writing recognition, but no-one seems to care much about that these days.

Now consider the "smart assistant" sort of idea. For that to work, there needs to be a way to stream all the "activities" of the phone, and their associated data, through the NPU in such a way that patterns can be detected. I trust that, at least the programmers reading this, start to see what sort of a challenge that is. What are the relevant data structures to represent this stream of activities? What does it mean to find some pattern/clustering in these activities --- how is that actionable?

Now Apple, for a few years now, has been pushing the idea that every time a program interacts with the user, it wraps up that interaction in a data structure that describes everything that's being done. The initial reason for this was, I think, for the on-phone search engine, but soon the most compelling reason for this (and easiest to understand the idea) was Continuity --- by wrapping up an "activity" in a self-describing data structure, Apple can transmit that data structure from, say, your phone to your mac or your watch, and so continue the activity between devices.

Reason I bring this up is that it obviously provides at least a starting point for Apple to go down this path. But only a starting point. Unlike images, each phone does not generate MILLIONS of these activities, so you have a very limited data set within which to find patterns. Can you get anything useful out of that? Who knows?

Android also has something called Activities, but as far as I can tell they are rather different from the Apple version, and not useful for the sort of issue I described. As far as I know Android has no such equivalent today. Presumably MS will have to define such an equivalent as part of their copy of (a subset of) Continuity that's coming out with Fall Creators, and perhaps they have the same sort of AI ambitions that they hope to layer upon it?

Valantar - Tuesday, October 17, 2017 - link

The thing is, the implementation here is a "pattern recognition engine" /without any long-term memory/. In other words: it can't learn/adapt/improve over time. As such, it's as dumb as a bag of rocks. I wholeheartedly agree with not caring if the phone can recognize cats/faces/landscapes in my photos (which, besides, a regular non-AI-generated algorithm can do too, although probably not as well). How about learning the user's preferences in terms of aesthetics, subject matter, gallery culling? That would be useful, especially the last one: understanding what the fifteen photos you just took were focusing on, and then picking the best one in terms of focus, sharpness, background separation, colour, composition, and so on. Sure, also a task an algorithm could do (and do, in some apps), but sufficiently complex that it's likely that an AI that learns over time would do a far better job. Not to mention that an adaptive AI in that situation could regularly present the user with prompts like "I selected this as the best shots. These were the runner-ups. Which do you prefer?" which would give valuable feedback to adjust the process.

serendip - Tuesday, October 17, 2017 - link

I do plenty of manual editing and cataloging of photos shot on the phone, in a mirror of the processes I use for a DSLR and a laptop. I don't think an AI will know if I want to do a black and white series in Snapseed from color photos, it won't know which ones to keep or delete, and it won't know about the folder organization I use.

So what exactly is the AI for?

tuxRoller - Tuesday, October 17, 2017 - link

It COULD improve over time if they pushed out upgraded NN. Off device training with occasional updates to supported devices is going to be the best option for a while.

Krysto - Monday, October 16, 2017 - link

Disappointed they aren't using the AI accelerator for more advanced computational photography, like say better identifying a person's face and body and then doing the bokeh around that, or improving dynamic range by knowing exactly which parts to expose more, and so on.

Auto-switching to a "mode" is really something that other phone makers have had for a few years now in their "Auto" mode.

Ian Cutress - Monday, October 16, 2017 - link

This was one of my comments to Huawei. I was told that the auto modes do sub-frame enhancements for clarity, though I'm under the assumption those are the same as previous tools and algorithms and not AI driven. Part of the issue here is AI can be a hammer, but there needs to be nails.

melgross - Monday, October 16, 2017 - link

With the supposed high performance of this neural chip when compared to Apple’s right now, I’m a bit confused.

Since we don’t know exactly how this works, and we know even less about Apple’s, how can we even begin to compare performance between them?

Hopefully, you will soon have a real review of the 8/8+. As well as the deep dive of the SoC, something which was promised for last year’s model, but never materialized.

A comparison between these two new SoCs will be interesting.

name99 - Monday, October 16, 2017 - link

Apple gave an "ops per sec" number, Huawei gave a FLOPS number. One was bigger than the other.

That's all we have.

There are a million issues with this. Are both talking about 32-bit flops? Or 16-bit? Maybe Apple meant 32-bit FLOPs and Huawei 16-bit?

And is FLOP actually a useful metric? Maybe in real situations these devices are really limited by their cache or memory subsystems?

To be fair, no-one is (yet) making a big deal about this precisely because anyone who knows anything understands just how meaningless both the numbers are. It will take a year or three before we have enough experience with what the units do that we CARE about, and so know what to bother benchmarking or how to compare them.

Baidu have, for example, a supposed NPU benchmark suite, but it kinda sucks. All it tests is the speed of some convolutions at a range of different sizes. More problematically, at least as it exists today, it's basically C code. So you can look up the performance number for an iPhone, but it's meaningless because it doesn't even give you the GPU performance, let alone the NPU performance.

We need to learn what sort of low-level performance primitives we care about testing, then we need to write up comparable cross-device code that uses the optimal per-device APIs on each device. This will take time.

melgross - Wednesday, October 18, 2017 - link

This is the problem I’m thinking about. We don’t have enough info to go by.

varase - Tuesday, November 21, 2017 - link

I assumed Apple's numbers were something in the inferences/second ballpark as this was a neural processor (and MLKit seems to be processing data produced by standard machine learning models). We know the Apple neural processor is used by Face ID, first as a gatekeeper (detect attempts to fake it out), and then to attempt to see if the face in view is the same one stored in the secure enclave.

Flops seems to imply floating point operations/second.

Color me confused.