Intel’s Discrete GPU Era Begins: Intel Launches Iris Xe MAX For Entry-Level Laptops

by Ryan Smith on October 31, 2020 12:01 PM EST

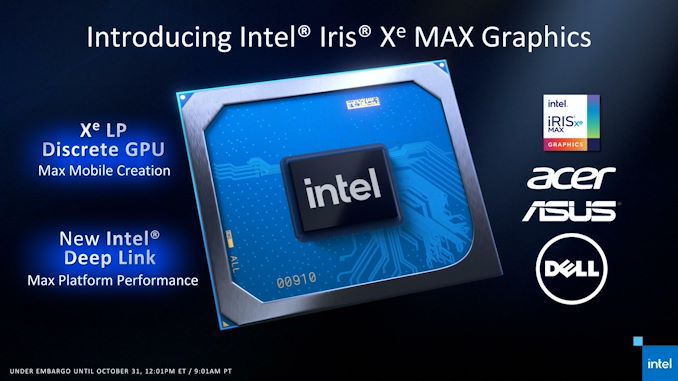

Today may be Halloween, but what Intel is up to is no trick. Almost a year after showing off their alpha silicon, Intel’s first discrete GPU in over two decades has been released and is now shipping in OEM laptops. The first of several planned products using the DG1 GPU, Intel’s initial outing in their new era of discrete graphics is in the laptop space, where today they are launching their Iris Xe MAX graphics solution. Designed to complement Intel’s Xe-LP integrated graphics in their new Tiger Lake CPUs, Xe MAX will be showing up in thin-and-light laptops as an upgraded graphics option, and with a focus on mobile creation.

We’ve been talking about DG1 off and on since CES 2020, where Intel first showed off the chip in laptops and in a stand-alone development card. The company has consistently been coy about the product, but at a high level it’s been clear for some time that this was going to be an entry-level graphics solution suitable for use in smaller laptops. Based heavily on the integrated graphics in Intel’s Tiger Lake-U CPU, the Xe-LP architecture GPU is a decidedly entry-level affair. Nonetheless, it’s an important milestone for Intel: by launching their first DG1-based product, Intel has completed the first step in their plans to establish themselves as a major competitor in the discrete GPU space.

Sizing up Intel’s first dGPU in a generation, Intel has certainly made some interesting choices with the chip and what markets to pursue. As previously mentioned, the chip is based heavily on Tiger Lake-U’s iGPU – so much so that it has virtually the same hardware, from EUs to media encoder blocks. As a result, Xe MAX is less a bigger Xe-LP graphics solution than an additional, discrete version of the Tiger Lake iGPU. This in turn has significant ramifications for the chip’s performance expectations and for how Intel is going about positioning it.

To cut right to the chase on an important question for our more technical readers, Intel has not developed any kind of multi-GPU rendering technology that allows for multiple GPUs to be used together for a single graphics task (à la NVIDIA’s SLI or AMD’s CrossFire). So there is no way to combine a Tiger Lake-U iGPU with Xe MAX and double your DOTA framerate, for example. Functionally, Xe MAX is closer to a graphics co-processor – literally a second GPU in the system.

As a result, Intel isn’t seriously positioning Xe MAX as a gaming solution – in fact I’m a little hesitant to even attach the word “graphics” to Xe MAX, since Intel’s ideal use cases don’t involve traditional rendering tasks. Instead, Intel is primarily pitching Xe MAX as an upgrade option for mobile content creation; an additional processor to help with video encoding and other tasks that leverage GPU-accelerated computing. These would be tasks like Handbrake video encoding, Topaz’s Gigapixel AI image upsampling, and other such productivity/creation workloads. This is a very different tack than I suspect a lot of people were envisioning, but as we’ll see, it’s the route that makes the most sense for Intel given what Xe MAX can (and can’t) do.

At any rate, as an entry-level solution Xe MAX is being set up to compete with NVIDIA’s last-generation entry-level solution, the MX350. Competing with the MX350 is a decidedly unglamorous task for Intel’s first discrete graphics accelerator, but it’s an accurate reflection of Xe MAX’s performance capabilities as an entry-level part, as well as a ripe target since MX350 is based on last-generation NVIDIA technology. NVIDIA shouldn’t feel too threatened since they also have the more powerful MX450, but Xe MAX has a chance to at least dent NVIDIA’s near-absolute mobile marketshare by going after the very bottom of it. And, looking at the bigger picture here for Intel’s dGPU efforts, Intel needs to walk before they can run.

Finally, as mentioned previously, today is Xe MAX’s official launch. Intel has partnered with Acer, ASUS, and Dell for the first three laptops, most of which were revealed early by their respective manufacturers. These laptops will go on sale this month, and the fact that today’s launch was timed to align with midnight on November 1st in China offers a big hint of what to expect. Intel’s partners will be offering Xe MAX laptops in China and North America, but given China’s traditional status as the larger, more important market for entry-level hardware, don’t be too surprised if that’s where most Xe MAX laptops end up selling, and where Intel puts its significant marketing muscle.

| Intel GPU Specification Comparison | Iris Xe MAX dGPU | Tiger Lake iGPU | Ice Lake iGPU | Kaby Lake iGPU |
| --- | --- | --- | --- | --- |
| ALUs | 768 (96 EUs) | 768 (96 EUs) | 512 (64 EUs) | 192 (24 EUs) |
| Texture Units | 48 | 48 | 32 | 12 |
| ROPs | 24 | 24 | 16 | 8 |
| Peak Clock | 1650MHz | 1350MHz | 1100MHz | 1150MHz |
| Throughput (FP32) | 2.46 TFLOPs | 2.1 TFLOPs | 1.13 TFLOPs | 0.44 TFLOPs |
| Geometry Rate (Prim/Clock) | 2 | 2 | 1 | 1 |
| Memory Clock | LPDDR4X-4266 | LPDDR4X-4266 | LPDDR4X-3733 | DDR4-2133 |
| Memory Bus Width | 128-bit | 128-bit (IMC) | 128-bit (IMC) | 128-bit (IMC) |
| VRAM | 4GB | Shared | Shared | Shared |
| TDP | ~25W | Shared | Shared | Shared |
| Manufacturing Process | Intel 10nm SuperFin | Intel 10nm SuperFin | Intel 10nm | Intel 14nm+ |
| Architecture | Xe-LP | Xe-LP | Gen11 | Gen9.5 |
| GPU | DG1 | Tiger Lake Integrated | Ice Lake Integrated | Kaby Lake Integrated |
| Launch Date | 11/2020 | 09/2020 | 09/2019 | 01/2017 |

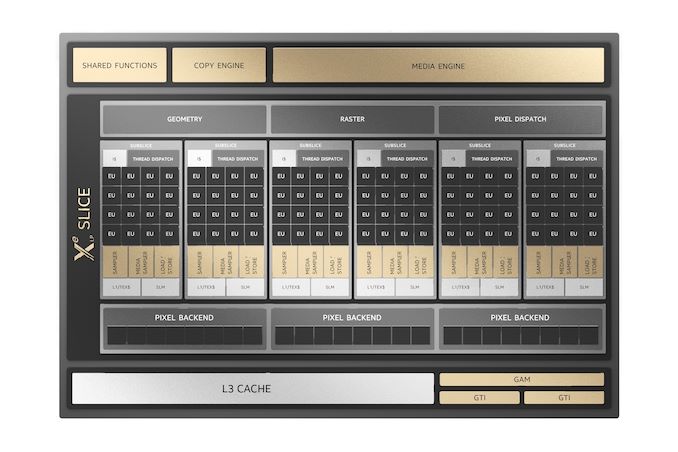

Kicking off the deep dive portion of today’s launch, let’s take a look at the specs for the Xe MAX. As previously mentioned, Xe MAX is derived from Tiger Lake’s iGPU, and this is especially obvious when looking at the GPUs side-by-side. Xe-LP as an architecture was designed to scale up to 96 EUs; Intel put 96 EUs in Tiger Lake, and so a full DG1 GPU (and thus Xe MAX) gets 96 EUs as well.
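As a rough cross-check on the spec table, peak FP32 throughput is conventionally estimated as ALUs × 2 FLOPs per clock (one fused multiply-add) × peak clock. The sketch below assumes that convention and the 8 ALUs per EU implied by the table; the function and variable names are illustrative only. By this arithmetic the three iGPU columns land on the table's figures, while Xe MAX at its 1650MHz peak works out to about 2.53 TFLOPs, so the table's 2.46 TFLOPs presumably reflects a slightly lower clock assumption (that reading is a guess, not something Intel has stated).

```python
# Paper FP32 throughput: ALUs x 2 FLOPs per clock (one FMA) x peak clock.
# ALUS_PER_EU is inferred from the table (768 ALUs / 96 EUs), not an official constant.
ALUS_PER_EU = 8


def peak_fp32_tflops(eus: int, clock_mhz: float) -> float:
    """Return the theoretical peak FP32 rate in TFLOPs."""
    alus = eus * ALUS_PER_EU
    return alus * 2 * clock_mhz * 1e6 / 1e12


# (EU count, peak clock in MHz) taken from the specification table.
gpus = {
    "Iris Xe MAX": (96, 1650),
    "Tiger Lake iGPU": (96, 1350),
    "Ice Lake iGPU": (64, 1100),
    "Kaby Lake iGPU": (24, 1150),
}

for name, (eus, mhz) in gpus.items():
    print(f"{name}: {peak_fp32_tflops(eus, mhz):.2f} TFLOPs")
```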

In fact Xe MAX is pretty much Tiger Lake’s iGPU in almost every way. On top of the identical graphics/compute hardware, the underlying DG1 GPU contains the same two Xe-LP media encode blocks, the same 128-bit memory controller, and the same display controller. Intel didn’t even bother to take out the video decode blocks, so DG1/Xe MAX can do H.264/H.265/AV1 decoding, which admittedly is handy for doing on-chip video transcoding.

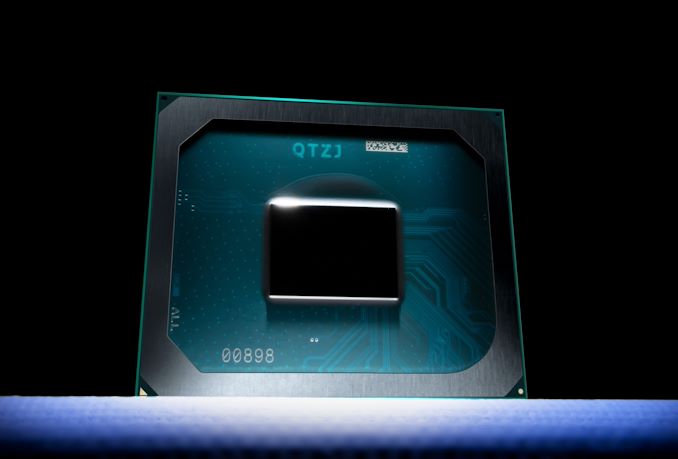

And, to be sure, Intel has confirmed that DG1 is a real, purpose-built discrete GPU. So Xe MAX is not based on salvaged Tiger Lake CPUs or the like; Intel is minting discrete GPUs just for the task. As is usually the case, Intel is not disclosing die sizes or transistor counts for DG1. Our own best guess for the die size is an incredibly rough 72mm², and this is based on looking at how much of Tiger Lake-U’s 144mm² die is estimated to be occupied by GPU blocks. In reality, this is probably an underestimate, but even so, it’s clear that DG1 is a rather petite GPU, thanks in part to the fact that it’s made on Intel’s 10nm SuperFin process.

Overall, given the hardware similarities, the performance advantage that Xe MAX has over Tiger Lake’s iGPU is that the discrete adapter gets a higher clockspeed. Xe MAX can boost to 1.65GHz, whereas the fastest Tiger Lake-U SKUs can only turbo to 1.35GHz. That means all things held equal, the discrete adapter has a 22% compute and rasterization throughput advantage on paper. But since we’re talking about laptops, TDPs and heat management are going to play a huge role in how things actually work.
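The 22% figure follows directly from the ratio of the two peak clocks, since the EU counts are identical. A minimal sketch of that arithmetic (the function name is just for illustration):

```python
def clock_advantage(dgpu_ghz: float, igpu_ghz: float) -> float:
    """Fractional throughput advantage when only the clockspeed differs."""
    return dgpu_ghz / igpu_ghz - 1.0


# Xe MAX peak boost vs. the fastest Tiger Lake-U iGPU turbo clock.
advantage = clock_advantage(1.65, 1.35)
print(f"On-paper advantage: {advantage:.0%}")
```

This only holds while both parts sustain their peak clocks, which is exactly what laptop thermals tend to prevent.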

Meanwhile the fact that Xe MAX gets Tiger Lake’s memory controller makes for an interesting first for a discrete GPU: this is the first stand-alone GPU with LPDDR4X support. Intel’s partners will be hooking up 4GB of LPDDR4X-4266 to the GPU, which with its 128-bit memory bus will give it a total memory bandwidth of 68GB/sec. Traditionally, entry-level mobile dGPUs use regular DDR or GDDR memory, with the latter offering a lot of bandwidth even on narrow memory buses, but neither being very energy efficient. So it will be interesting to see how Xe MAX’s total memory power consumption compares to the likes of the GDDR5/64-bit MX350.

As an added bonus on the memory front, because this is a discrete GPU, Xe MAX doesn’t have to share its memory bandwidth with other devices. The GPU gets all 68GB/sec to itself, which should improve real-world performance.
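For reference, the 68GB/sec figure is simply the transfer rate multiplied by the bus width. The sketch below works it out in decimal gigabytes; the helper name is illustrative, and the inputs come from the spec table.

```python
def memory_bandwidth_gbs(transfer_rate_mts: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/sec (decimal) from MT/s and bus width."""
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mts * 1e6 * bytes_per_transfer / 1e9


# LPDDR4X-4266 on a 128-bit bus, as used by Xe MAX and Tiger Lake.
print(f"LPDDR4X-4266, 128-bit: {memory_bandwidth_gbs(4266, 128):.1f} GB/sec")
# Older iGPU configurations from the table, for comparison.
print(f"LPDDR4X-3733, 128-bit: {memory_bandwidth_gbs(3733, 128):.1f} GB/sec")
print(f"DDR4-2133, 128-bit: {memory_bandwidth_gbs(2133, 128):.1f} GB/sec")
```

The difference for Xe MAX is that none of that bandwidth is contended by CPU cores, whereas the iGPU figures are shared system-wide.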

And since Xe MAX is a discrete adapter, it also gets its own power budget. The part is nominally 25W, but like TDPs for Intel’s Tiger Lake CPUs, it’s something of an arbitrary value; in reality the chip has as much power and thermal headroom to play with as the OEMs grant it. So an especially thin-and-light device may not have the cooling capacity to support a sustained 25W, and other devices may exceed that during turbo time. Overall we’re not expecting any more clarity here than Intel and its OEMs have offered with Tiger Lake TDPs.

Last but not least, let’s talk about I/O. As an entry-level discrete GPU, Xe MAX connects to its host processor over the PCIe bus; Intel isn’t using any kind of proprietary solution here. The GPU’s PCIe controller is fairly narrow with just a x4 connection, but it supports PCIe 4.0, so on the whole it should have more than enough PCIe bandwidth for its performance level.
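To put the x4 link in perspective, here is a back-of-the-envelope calculation of usable PCIe bandwidth per direction. The per-lane signaling rates (8 GT/s for PCIe 3.0, 16 GT/s for PCIe 4.0) and the 128b/130b line encoding come from the PCIe specifications rather than from Intel's disclosures, and the figures ignore packet and protocol overhead, so treat the result as an upper bound.

```python
# 128b/130b line encoding, used by both PCIe 3.0 and PCIe 4.0.
ENCODING_EFFICIENCY = 128 / 130


def pcie_bandwidth_gbs(gt_per_s: float, lanes: int) -> float:
    """Approximate one-direction PCIe bandwidth in GB/sec, before protocol overhead."""
    return gt_per_s * ENCODING_EFFICIENCY * lanes / 8


pcie4_x4 = pcie_bandwidth_gbs(16, 4)  # Xe MAX's link
pcie3_x8 = pcie_bandwidth_gbs(8, 8)   # a PCIe 3.0 x8 link, for comparison

print(f"PCIe 4.0 x4: {pcie4_x4:.2f} GB/sec per direction")
print(f"PCIe 3.0 x8: {pcie3_x8:.2f} GB/sec per direction")
```

In other words, a PCIe 4.0 x4 link offers the same raw bandwidth as a PCIe 3.0 x8 link.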

Meanwhile the part offers a full display controller block as well, meaning it can drive 4 displays over HDMI 2.0b and DisplayPort 1.4a at up to 8K resolutions. That said, based on Intel’s descriptions it sounds like most (if not all) laptops are going to be taking an Optimus route, and using Tiger Lake’s iGPU to handle driving any displays. So I’m not expecting to see any laptops where Xe MAX’s display outputs are directly wired up.

118 Comments

Spunjji - Sunday, November 1, 2020 - link

I'm not saying they aren't. I was talking about how they keep pitching products that they talk up, launch, then mysteriously go quiet on. Then boom, another launch, quickly talk about this one and forget the last one!

I don't buy this "they don't have experience making a PCIe card" thing. Aside from this not actually being a PCIe card, per se, they do already have lots of experience both with building actual cards and connecting accelerators over PCIe. You just admitted it, and then fumbled on why it's not relevant.

SolarBear28 - Sunday, November 1, 2020 - link

Many of those other technologies at least showed promise in the first generation. This is just a duplicate iGPU that cannot be used in tandem with the existing iGPU in 90% of workloads.

throAU - Monday, November 2, 2020 - link

Thing is, this isn't their first iteration of a GPU. They've been doing integrated graphics for 2+ decades at this point and they had a crack at discrete cards back in the 90s. It isn't even their first discrete GPU in a while. They literally just chopped the thing out of an integrated part and stuck it on a card. Sorry, but that's garbage. Unless they stick a real GPU memory controller on it with a decent amount of bandwidth and significantly increase the EU count ... it is entirely pointless.

JustMe21 - Saturday, October 31, 2020 - link

This is one of the instances where it demonstrates how Intel can bring itself back up to par with AMD because AMD has had opportunities to do the same thing, but just kept rehashing Vega in their APUs. Now that AMD is on par with Intel in single core IPC, they need to work on the extra features of their CPUs and GPUs.

ballsystemlord - Saturday, October 31, 2020 - link

Navi 1 is also based on Vega. Does that make it a "rehash"?

JustMe21 - Saturday, October 31, 2020 - link

Well, Cezanne could have been Navi, but it's using Vega yet again. It would have been interesting to see an RDNA2 based APU and discrete RDNA be able to function like a big.LITTLE Navi, especially considering RDNA2 introduced Smart Access Memory.

fmcjw - Saturday, October 31, 2020 - link

A good followup discussion would be the state of drivers and software support among the 3 dGPU camps. It seems at the entry level we have hardware parity now.

KaarlisK - Sunday, November 1, 2020 - link

Yup. Because Intel has the horrible policy of dropping drivers of current-2 generation from the current branch and moving them to legacy. Broadwell, once touted as "you can finally game on it", is already on bugfix support only. It seems they will even be dropping Skylake, simply because it is too old, even if it is almost the same as the still supported Kaby Lake.

Spunjji - Sunday, November 1, 2020 - link

Yup, and Intel's drivers are miserable from a gaming perspective. Several of the games they quote frame rates for have known bugs on their hardware.

Spunjji - Monday, November 2, 2020 - link

"Cezanne could have been Navi"

Could it, though? Maybe RDNA; probably not RDNA 2 based on the time-frames involved, though. Given the performance they get from Vega already - and how absolutely tiny it is - I can see why they didn't go for the interim design that appeared to be fairly inefficient in terms of transistors vs. performance.