Update: PCI Express 6.0 Draft 0.71 Released, Final Release by End of Year

by Ryan Smith on July 2, 2021 7:00 AM EST - Posted in CPUs, Interconnect, PCIe, PCI-SIG, PCIe 6.0

Update 07/02: Albeit a couple of days later than expected, the PCI-SIG announced this morning that draft 0.71 of the PCI Express 6.0 specification has been released for member review. Following a minimum 30-day review process, the group will be able to publish the draft 0.9 version of the specification, putting it on schedule to release the final version of the spec this year.

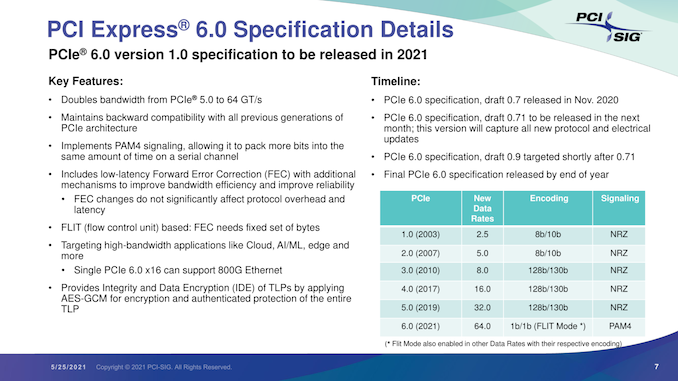

Originally Published 05/25

As part of their yearly developer conference, the PCI Special Interest Group (PCI-SIG) also held their annual press briefing today, offering an update on the state of the organization and its standards. The star of the show, of course, was PCI Express 6.0, the upcoming update to the bus standard that will once again double its data transfer rate. PCI-SIG has been working on PCIe 6.0 for a couple of years now, and in a brief update, confirmed that the group remains on track to release the final version of the specification by the end of this year.

The most recent draft version of the specification, 0.7, was released back in November. Since then, PCI-SIG has remained at work collecting feedback from its members, and is gearing up to release another draft update next month. That draft will incorporate all of the new protocol and electrical updates that have been approved for the spec since 0.7.

In a bit of a departure from the usual workflow for the group, however, this upcoming draft will be 0.71, meaning that PCIe 6.0 will remain at draft 0.7x status for a little while longer. In substance, the group is going to hold for another round of review and testing before finally clearing the spec to move on to the next major draft. Overall, the group’s rules call for a 30-day review period for the 0.71 draft, after which the group will be able to release the final draft, the 0.9 specification.

Ultimately, all of this is to say that PCIe 6.0 remains on track for its previously-scheduled 2021 release. After draft 0.9 lands, there will be a further two-month review for any final issues (primarily legal), and, assuming the standard clears that check, PCI-SIG will be able to issue the final, 1.0 version of the PCIe 6.0 specification.

In the interim, the 0.9 specification is likely to be the most interesting from a technical perspective. Once the updated electrical and protocol specs are approved, the group will be able to give some clearer guidance on the signal integrity requirements for PCIe 6.0. All told we’re not expecting much different from 5.0 (in other words, only a slot or two on most consumer motherboards), but as each successive generation ratchets up the signaling rate, the signal integrity requirements have tightened.

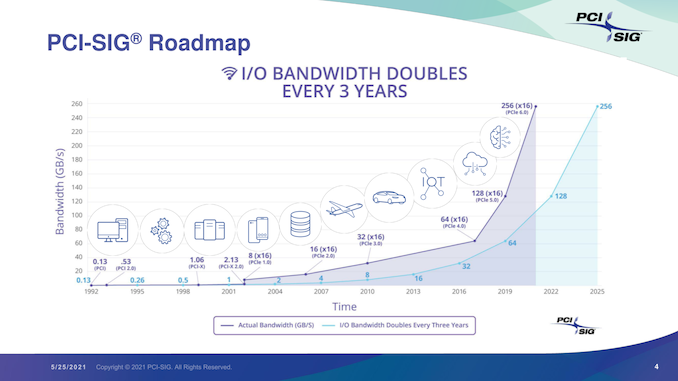

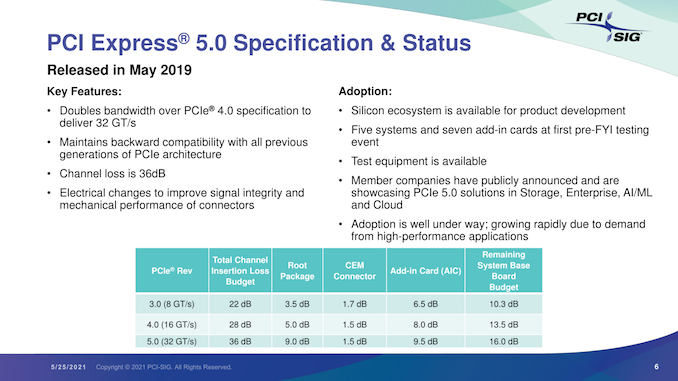

Overall, the unabashedly nerdy standards group is excited about the 6.0 standard, comparing it in significance to the big jump from PCIe 2.0 to PCIe 3.0. Besides proving that they’re once again able to double the bandwidth of the ubiquitous bus, it will mean that they’ve been able to keep to their goal of a three-year cadence. Meanwhile, as the PCIe 6.0 specification reaches completion, we should finally begin seeing the first PCIe 5.0 devices show up in the enterprise market.
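To put the doubling in numbers, here is a minimal sketch of per-lane and per-link bandwidth across PCIe generations. The raw signaling rates and the 8b/10b and 128b/130b line codes are the published figures for each generation; the function names are hypothetical, and treating PCIe 6.0 as having no encoding overhead is a simplifying assumption, since its FLIT mode adds FEC and CRC overhead that is not modeled here.

```python
# Raw per-lane signaling rate in GT/s for each PCIe generation.
RAW_RATE_GT_S = {
    "1.0": 2.5, "2.0": 5.0, "3.0": 8.0,
    "4.0": 16.0, "5.0": 32.0, "6.0": 64.0,
}

# Fraction of transferred bits that carry payload under each line code.
# 1.0/2.0 use 8b/10b; 3.0 through 5.0 use 128b/130b.
# ASSUMPTION: 6.0 is left at 1.0, ignoring FLIT-mode FEC/CRC overhead.
ENCODING_EFFICIENCY = {
    "1.0": 8 / 10, "2.0": 8 / 10, "3.0": 128 / 130,
    "4.0": 128 / 130, "5.0": 128 / 130, "6.0": 1.0,
}


def lane_bandwidth_gb_s(gen: str) -> float:
    """Approximate usable bandwidth of one lane, one direction, in GB/s."""
    return RAW_RATE_GT_S[gen] * ENCODING_EFFICIENCY[gen] / 8


def link_bandwidth_gb_s(gen: str, lanes: int = 16) -> float:
    """Approximate usable bandwidth of a multi-lane link, one direction."""
    return lanes * lane_bandwidth_gb_s(gen)


if __name__ == "__main__":
    for gen in RAW_RATE_GT_S:
        print(f"PCIe {gen}: x1 = {lane_bandwidth_gb_s(gen):.3f} GB/s, "
              f"x16 = {link_bandwidth_gb_s(gen):.1f} GB/s")
```

Under these assumptions, the 2.0 to 3.0 step raised the raw rate only from 5 GT/s to 8 GT/s, and the near-doubling in usable bandwidth came from the move to the more efficient 128b/130b encoding.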

103 Comments


TeXWiller - Friday, July 2, 2021 - link

CXL is running on PCIe5, and since almost every GPU is a compute accelerator these days, and even more in the very near future..

mode_13h - Sunday, July 4, 2021 - link

> CXL is running on PCIe5

It shares the same PHY layer with PCIe 5, making it easy to implement a device which speaks both protocols. It does not mean that CXL support is free or automatic, for PCIe 5.0 devices.

> since almost every GPU is a compute accelerator these days

AMD, Nvidia, and Intel all have dedicated product lines for compute accelerators, now. To strengthen that market segmentation, I think they won't upgrade gaming products to PCIe 5.0 as soon, if ever.

mode_13h - Wednesday, May 26, 2021 - link

> where high speed networking or GPU interconnects are needed.

Intel Optane DC P5800X can make good use of PCIe 4.0 x4. Its 512B IOPs are so high that I could believe it would measurably benefit from the lower latency of PCIe 5.

PaulHoule - Friday, July 2, 2021 - link

Intel has been making excuses about why people don't need I/O for a long time. Seems the only things that matter to them are thin, light, and anti-virus.

For a long time consumer platforms have been starved for I/O, particularly when you consider that most chips and motherboards have restrictions about how you can use the ports -- restrictions you'll usually learn about when you try to plug in a card and it doesn't work or some of the features of the motherboard (serial ports) quit working.

It's like the bad old days before Plug-N-Play except back then people knew what the rules were, now the rules are "buy an i7 chip, the most expensive motherboard you can find, and pray"

Qasar - Friday, July 2, 2021 - link

and this could be solved as simply as just adding more pcie lanes to the consumer platform, even if it is just on higher end boards. for my own usage, i could use a few more lanes.....

mode_13h - Sunday, July 4, 2021 - link

> this could be solved as simply as just adding more pcie lanes to the consumer platform

The socket from Sandybridge and Ivy Bridge supported 20 lanes of PCIe + the 4-lane DMI link. They dropped 4 of those lanes in Haswell, only to bring them back in Comet Lake, but that socket also widened the DMI link to x8, which benefits everything hanging off it.

Then, Rocket Lake boosted everything to PCIe 4.0. So, now the chipset has 16x of the bandwidth it had in Skylake times and you have a dedicated 4.0 x4 link for SSD. And because of their x8 chipset link, they provide even more bandwidth than AMD's desktop platform!

I'm really interested to learn why their mainstream socket jumped all the way to 1700-pins, in Alder Lake.

If you've been feeling I/O-starved, these are indeed good times!

Daeros - Monday, July 5, 2021 - link

RKL can have up to 20 lanes of PCIe 4.0 and has an x8 DMI link at PCIe 3.0 signaling rates (~8GiB/s to the chipset).

That ties AM4 for total bandwidth, since it has 24 lanes all running at PCIe 4.0 signaling rates.
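The comparison above can be checked with a little arithmetic. This is a rough sketch that takes the lane counts exactly as stated in the comment, assumes AM4's 24 lanes all run at PCIe 4.0 rates, and uses the published 8 GT/s and 16 GT/s per-lane rates with 128b/130b encoding; the names `GB_S_PER_LANE` and `total_bandwidth_gb_s` are hypothetical.

```python
import math

# Approximate usable per-lane bandwidth (one direction, GB/s):
# raw GT/s * 128b/130b encoding efficiency / 8 bits per byte.
GB_S_PER_LANE = {
    "3.0": 8.0 * 128 / 130 / 8,
    "4.0": 16.0 * 128 / 130 / 8,
}


def total_bandwidth_gb_s(links):
    """Sum bandwidth over (lane_count, generation) pairs."""
    return sum(lanes * GB_S_PER_LANE[gen] for lanes, gen in links)


# Lane counts as stated in the comment (not independently verified here).
rocket_lake = [(20, "4.0"), (8, "3.0")]  # CPU lanes + x8 DMI at 3.0 rates
am4 = [(24, "4.0")]                      # ASSUMPTION: all lanes at 4.0 rates

rkl_total = total_bandwidth_gb_s(rocket_lake)
am4_total = total_bandwidth_gb_s(am4)

if __name__ == "__main__":
    print(f"Rocket Lake: {rkl_total:.2f} GB/s")
    print(f"AM4:         {am4_total:.2f} GB/s")
    print("Tie:", math.isclose(rkl_total, am4_total))
```

Because a PCIe 3.0 lane carries half of what a 4.0 lane does, the x8 DMI link counts as four 4.0-equivalent lanes, which is how 20 + 8 lanes comes out even with 24.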

Tams80 - Saturday, July 3, 2021 - link

And?

This is establishing a specification. Products still need to be designed, validated, certified, and then produced.

jsncrso - Tuesday, May 25, 2021 - link

You are forgetting all of the major speed revisions to the original PCI bus. First was 33MHz at 32 bit, then 66MHz, then finally 133MHz 64 bit by the time PCI 3.0 came along in some server variants. AGP was also a superset of the PCI bus, with changes to reduce latency which is sensitive for GPU operations. Also, VESA local bus was a competitor to PCI that was supposed to replace ISA, but PCI won out. VLB was developed before PCI and has nothing to do with PCI being too slow. The biggest reason we migrated to PCI-E was the transition from parallel to serial in order to prevent data skew.

Yojimbo - Tuesday, May 25, 2021 - link

OK, forget about what I said about VESA local bus. But PCI 1.0 was also not created by PCI-SIG. It was created by Intel. It doesn't matter if AGP was a superset of PCI. The work by the PCI-SIG itself was not enough for the demands of common computing devices wishing to use the bus. I'm not forgetting any speed revisions, I'm just going by the graphic that PCI-SIG themselves provided. It also doesn't matter why we migrated to PCI-E. It wasn't developed by the PCI-SIG, it was given to them. And it created a big jump in bandwidth. In fact it created THE big jump in bandwidth that puts them 4 years ahead of the 3 year doubling time in their graph.