Intel Discloses Multi-Generation Xeon Scalable Roadmap: New E-Core Only Xeons in 2024

by Dr. Ian Cutress on February 17, 2022 5:30 PM EST

It’s no secret that Intel’s enterprise processor platform has been stretched in recent generations. Compared to the competition, Intel is chasing its multi-die strategy while relying on a manufacturing platform that hasn’t offered the best in the market. That being said, Intel is quoting more shipments of its latest Xeon products in December than AMD shipped in all of 2021, and the company is launching the next generation Sapphire Rapids Xeon Scalable platform later in 2022. What lies beyond Sapphire Rapids has been kept somewhat under wraps, with minor leaks here and there, but today Intel is lifting the lid on that roadmap.

State of Play Today

Currently in the market is Intel’s Ice Lake 3rd Generation Xeon Scalable platform, built on Intel’s 10nm process node with up to 40 Sunny Cove cores. The die is large, around 660 mm², and in our benchmarks we saw a sizeable generational uplift in performance compared to the 2nd Generation Xeon offering. The response to Ice Lake Xeon has been mixed, given the competition in the market, but Intel has forged ahead by leveraging a more complete platform coupled with FPGAs, memory, storage, networking, and its unique accelerator offerings. Datacenter revenues, depending on the quarter you look at, are either up or down based on how customers are digesting their current processor inventories (as stated by CEO Pat Gelsinger).

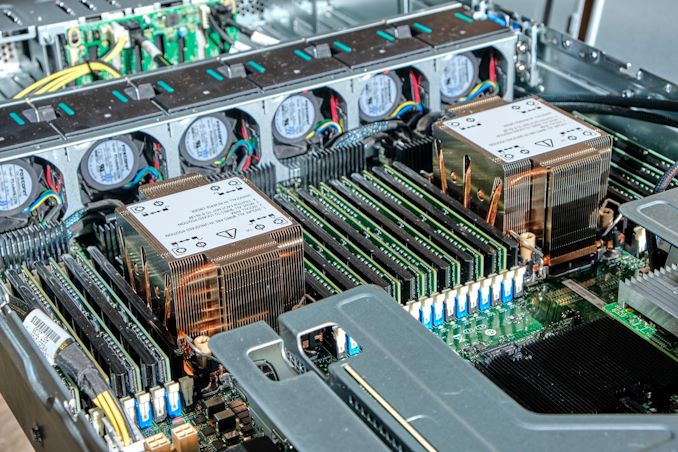

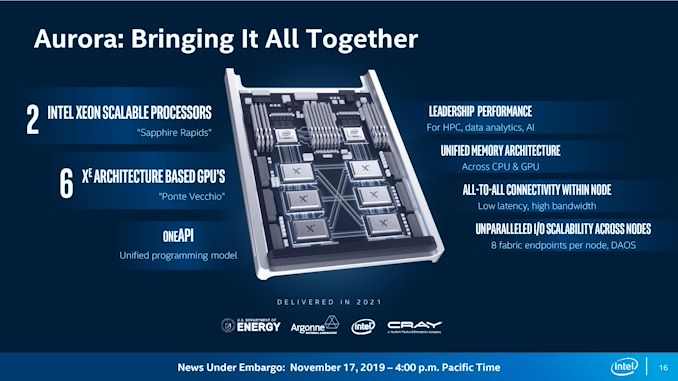

That being said, Intel has put a large amount of effort into discussing its 4th Generation Xeon Scalable platform, Sapphire Rapids. For example, we already know that it will be using >1600 mm² of silicon for the highest core count solutions, with four tiles connected with Intel’s embedded bridge technology. The chip will have eight 64-bit memory channels of DDR5, support for PCIe 5.0, as well as most of the CXL 1.1 specification. New matrix extensions also come into play, along with data streaming accelerators and QuickAssist Technology, all built on the latest P-core designs currently present in the Alder Lake desktop platform, albeit optimized for datacenter use (which typically means AVX-512 support and bigger caches). We already know that versions of Sapphire Rapids will be available with HBM memory, and the first customer for those chips will be the Aurora supercomputer at Argonne National Laboratory, coupled with the new Ponte Vecchio high-performance compute accelerator.
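To put the eight 64-bit DDR5 channels in context, the theoretical peak memory bandwidth per socket can be estimated with simple arithmetic. The sketch below is illustrative only: the function name is hypothetical, and the 4800 MT/s transfer rate is an assumption, since no DDR5 speed is stated here.

```python
def peak_bandwidth_gbs(channels: int, bus_width_bits: int, transfers_mt_s: int) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 10**9 bytes).

    channels * bytes per transfer * mega-transfers per second gives MB/s;
    dividing by 1000 converts to GB/s.
    """
    bytes_per_transfer = bus_width_bits // 8
    return channels * bytes_per_transfer * transfers_mt_s / 1000


# Eight 64-bit channels as described in the text.
# ASSUMPTION: 4800 MT/s is a placeholder speed, not a figure from the article.
ASSUMED_DDR5_MT_S = 4800

if __name__ == "__main__":
    bw = peak_bandwidth_gbs(channels=8, bus_width_bits=64, transfers_mt_s=ASSUMED_DDR5_MT_S)
    print(f"Theoretical peak: {bw:.1f} GB/s per socket")
```

This is an upper bound only; sustained bandwidth in real workloads will be lower, and the result scales linearly with whatever transfer rate the shipping parts actually support.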

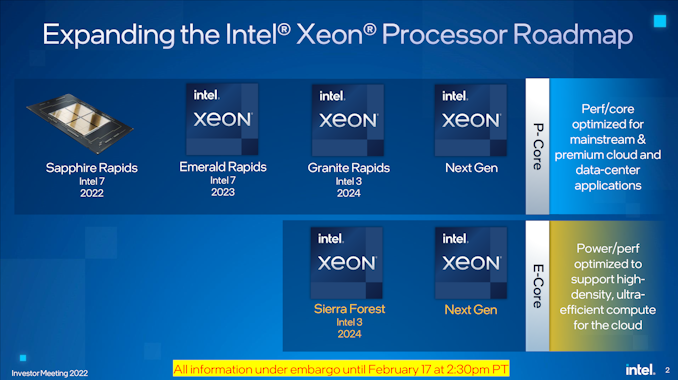

The launch of Sapphire Rapids is significantly later than originally envisioned several years ago, but we expect to see the hardware widely available during 2022, built on Intel 7 process node technology.

Next Generation Xeon Scalable

Looking beyond Sapphire Rapids, Intel is finally putting materials into the public to showcase what is coming up on the roadmap. After Sapphire Rapids, we will have a platform compatible Emerald Rapids Xeon Scalable product, also built on Intel 7, in 2023. Given the naming conventions, Emerald Rapids is likely to be the 5th Generation.

Emerald Rapids (EMR), as with some other platform updates, is expected to capture the low hanging fruit from the Sapphire Rapids design to improve performance, as well as updates from the manufacturing. With platform compatibility, it means Emerald will have the same support when it comes to PCIe lanes, CPU-to-CPU connectivity, DRAM, CXL, and other IO features. We’re likely to see updated accelerators too. Exactly what the silicon will look like however is still an unknown. As we’re still new in Intel’s tiled product portfolio, there’s a good chance it will be similar to Sapphire Rapids, but it could equally be something new, such as what Intel has planned for the generation after.

After Emerald Rapids is where Intel’s roadmap takes on a new highway. We’re going to see a diversification in Intel’s strategy on a number of levels.

Starting at the top is Granite Rapids (GNR), built entirely of Intel’s performance cores, on an Intel 3 process node for launch in 2024. Previously Granite Rapids had been on roadmaps as an Intel 4 node product, however, Intel has stated to us that the progression of the technology as well as the timeline of where it will come into play makes it better to put Granite on that Intel 3 node. Intel 3 is meant to be Intel’s second-generation EUV node after Intel 4, and we expect the design rules to be very similar between the two, so we suspect it’s not much of a jump from one to the other.

Granite Rapids will be a tiled architecture, just as before, but it will also feature a bifurcated strategy in its tiles: it will have separate IO tiles and separate core tiles, rather than a unified design like Sapphire Rapids. Intel hasn’t disclosed how they will be connected, but the idea here is that the IO tile(s) can contain all the memory channels, PCIe lanes, and other functionality while the core tiles can be focused purely on performance. Yes, it sounds like what Intel’s competition is doing today, but ultimately it’s the right thing to do.

Granite Rapids will share a platform with Intel’s new product line, which starts with Sierra Forest (SRF), also on Intel 3. This new product line will be built from datacenter optimized E-cores, which we’re familiar with from Intel’s current Alder Lake consumer portfolio. The E-cores in Sierra Forest will be a later generation than the Gracemont E-cores we have today, but the idea here is to provide a product that focuses more on core density rather than outright core performance. This allows them to run at lower voltages and parallelize, assuming the memory bandwidth and interconnect can keep up.

Sierra Forest will be using the same IO die as Granite Rapids. The two will share a platform – we assume in this instance this means they will be socket compatible – so we expect to see the same DDR and PCIe configurations for both. If Intel’s numbering scheme continues, GNR and SRF will be Xeon Scalable 6th Generation products. Intel stated to us in our briefing that the product portfolio currently offered by Ice Lake Xeon products will be covered and extended by a mix of GNR and SRF Xeons based on customer requirements. Both GNR and SRF are expected to have full global availability when launched.

The E-core Sierra Forest focused on core density will end up being compared to AMD’s equivalent, which for Zen4c will be called Bergamo – AMD might have a Zen5 equivalent when SRF comes to market.

I asked Intel whether the move to GNR+SRF on one unified platform means the generation after will be a unique platform, or whether it will retain the two-generation retention that customers like. I was told that it would be ideal to maintain platform compatibility across the generations, although as these are planned out, it depends on timing and where new technologies need to be integrated. The earliest industry estimates (beyond CPU) for PCIe 6.0 are in the 2026 timeframe, and DDR6 is more like 2029, so unless there are more memory channels to add it’s likely we’re going to see parity between 6th and 7th Gen Xeon.

My other question to Intel was about Hybrid CPU designs – if Intel was now going to make P-core tiles and E-core tiles, what’s stopping a combined product with both? Intel stated that their customers prefer uni-core designs in this market as the needs from customer to customer differ. If one customer prefers an 80/20 split on P-cores to E-cores, there’s another customer that prefers a 20/80 split. Having a wide array of products for each different ratio doesn’t make sense, and customers already investigating this are finding out that the software works better with a homogeneous arrangement, with any split made at the system level rather than the socket level. So we’re not likely to see hybrid Xeons any time soon. (Ian: Which is a good thing.)

I did ask about the unified IO die: giving P-core only and E-core only Xeons the same number of memory channels and I/O lanes might not be optimal for either scenario. Intel didn’t really have a good answer here, aside from the fact that building them both into the same platform helped customers synergize non-returnable development costs across both CPUs, regardless of the one they used. I didn’t ask at the time, but we could see the door open to more Xeon-D-like scenarios with different IO configurations for smaller deployments, but we’re talking products that are 2-3+ years away at this point.

Xeon Scalable Generations

| Date | AnandTech | Codename | Abbr. | Max Cores | Node | Socket |
|---|---|---|---|---|---|---|
| Q3 2017 | 1st | Skylake | SKL | 28 | 14nm | LGA 3647 |
| Q2 2019 | 2nd | Cascade Lake | CLX | 28 | 14nm | LGA 3647 |
| Q2 2020 | 3rd | Cooper Lake | CPL | 28 | 14nm | LGA 4189 |
| Q2 2021 | 3rd | Ice Lake | ICL | 40 | 10nm | LGA 4189 |
| 2022 | 4th | Sapphire Rapids | SPR | * | Intel 7 | LGA 4677 |
| 2023 | 5th | Emerald Rapids | EMR | ? | Intel 7 | ** |
| 2024 | 6th | Granite Rapids | GNR | ? | Intel 3 | ? |
| 2024 | 6th | Sierra Forest | SRF | ? | Intel 3 | ? |
| >2024 | 7th | Next-Gen P | ? | ? | ? | ? |
| >2024 | 7th | Next-Gen E | ? | ? | ? | ? |

Notes: * estimate is 56 cores; ** estimate is LGA4677

For both Granite Rapids and Sierra Forest, Intel is already working with key ‘definition customers’ for microarchitecture and platform development, testing, and deployment. More details to come, especially as we move through Sapphire and Emerald Rapids during this year and next.

144 Comments


Mike Bruzzone - Sunday, February 20, 2022 - link

whatthe123, Clarification I noted in q3 and q4 2021 'Milan only' volume but that is not correct on AMD losing its 7nm cost advantage to iSF10/7 on TSMC markup adding to AMD cost on TSMC foundry price to AMD. I said up comment string and here Genoa has been shipping in risk volume q3 and q4 to sustain AMD gross margin on customer price incorporating the TSMC mark up. On Rome risk production volume q3 into q4 2019, Genoa has likely shipped minimally 300 K to date up to 447,986 units. I also note Sapphire Rapids shipping in risk volumes in the same time period because at xx% whole Intel will not wait when commercial customers can program the device in this AMD competitive situation. mb

Hifihedgehog - Monday, February 21, 2022 - link

hh

schujj07 - Saturday, February 19, 2022 - link

I personally want Ice Lake. The data center I run does some small cloud hosting specifically for SAP & SAP HANA. Right now Ice Lake isn't certified to run production SAP HANA on VMware. Being able to use Ice Lake instead of any previous Xeon Scalable means you don't need L CPUs to run huge amounts of RAM. Also means I can use a 2 socket instead of a 4 socket server which is cheaper to purchase.

Mike Bruzzone - Sunday, February 20, 2022 - link

schujj07, well, Ice Lake is certainly on clearance sale. Are flash arrays still used for databases or do you need DRAM on the CPU system bus? L CLr is on channel sale and in higher availability than Ice. What do you know of Barlow Pass? Does Optane work for structured data or transaction processing? mb

schujj07 - Sunday, February 20, 2022 - link

People still use all flash SANs for DBs. In fact a lot of major SAN vendors are going only all flash on their high-end. You get much better storage density, lower power consumption, and massively higher iops with the flash. That doesn't even count the higher reliability of flash compared to spinning disk.

SAP HANA is an in-RAM DB. Ice Lake is certified for production HANA physical appliances but not for VMware. HANA has a very specific way in which it is covered for PRD. Say your DB is 900GB, you need 900GB RAM just for that VM. Without the L series CPUs you cannot get that much RAM on a single socket for non Ice Lake Xeons. That means you are required to have dual sockets. However, that one VM gets every ounce of RAM and CPU from both sockets by SAP requirements. Your 900GB DB now gets 1.5TB RAM. With Ice Lake I can do that "cheaply" on a single socket with 1TB RAM and then have a DEV or QAS DB running on the other socket. This is one reason we want to have Epyc eventually get PRD certified.

Optane is supported, only in App Direct mode, for PRD and does help a lot on restarting the massive DB. However, only Optane P100 is supported and only on Cascade Lake CPUs. Again this is all in a VMware environment but if you are a cloud provider that is what you are going to use. Also if you run on prem there still isn't any reason to not be virtual just for ease of migration and restart on host failure. https://wiki.scn.sap.com/wiki/plugins/servlet/mobi...

The other pain with HANA is the storage requirements. It is hard to find a hyper-converged storage that is certified for PRD. Most certified storage are physical SANs with FC connections. I would love to run it on something like VMware vSAN instead. The more local access of vSAN vs traditional SAN makes latency lower. I can also get higher iops and use any disk I want. For example, an HP SAN won't work with any non HP branded disk (vendor locking). Those disks are then sold at a massive markup. 960GB 1DWPD SAS SSD refurbished run $1k/disk with new being like $1500/disk. Getting that same size and endurance from a place like CDW brings the cost down to under $500/drive for SAS or under $300/drive for NVMe (Micron 7300 pro for example). While my license cost is higher for vSAN, I can load up my host with all NVMe storage and use Optane SSD for my write cache (I actually have an array like this right now). Running that on 25GbE gives awesome performance that is easily scalable. I can easily add more disk and if need be add faster NICs to handle more data.

Mike Bruzzone - Sunday, February 20, 2022 - link

schujj07, thank you for a thorough and detailed assessment of your SAP HANA platform and requirement, wants and benefits, subsystem pros and cons, price differences / tradeoffs, very interesting. mb

Hifihedgehog - Monday, February 21, 2022 - link

hh

mode_13h - Monday, February 21, 2022 - link

My own take on Ice Lake SP is that it's not a bad CPU, just badly-timed. It delivers needed platform enhancements, AVX-512 improvements, better IPC, and an aggregate increase in throughput vs. Cascade Lake (thanks to higher core-counts).

That's not to say the lower peak clock speed and power-efficiency aren't areas of disappointment. However, other than some single-thread scenarios, I'm not aware of anything about Ice Lake that's actually *worse* than Cascade Lake.

Spunjji - Tuesday, February 22, 2022 - link

My general understanding is it was too little too late to have broad market appeal. Were it not for AMD's inability to deliver greater volume combined with Intel's flexibility to discount their products as much as needed to encourage purchases, it probably would have hurt Intel quite badly.

mode_13h - Wednesday, February 23, 2022 - link

> it was too little too late to have broad market appeal.

That's basically what I was trying to say. If it had come out when originally planned, it would've been seen in a rather different light. Especially if the 10 nm+ node on which it's made had performed better.