Intel Discloses Multi-Generation Xeon Scalable Roadmap: New E-Core Only Xeons in 2024

by Dr. Ian Cutress on February 17, 2022 5:30 PM EST

It’s no secret that Intel’s enterprise processor platform has been stretched in recent generations. Compared to the competition, Intel is chasing its multi-die strategy while relying on a manufacturing platform that hasn’t offered the best in the market. That being said, Intel is quoting more shipments of its latest Xeon products in December than AMD shipped in all of 2021, and the company is launching the next generation Sapphire Rapids Xeon Scalable platform later in 2022. What lies beyond Sapphire Rapids has been kept somewhat under wraps, with minor leaks here and there, but today Intel is lifting the lid on that roadmap.

State of Play Today

Currently in the market is Intel’s Ice Lake 3rd Generation Xeon Scalable platform, built on Intel’s 10nm process node with up to 40 Sunny Cove cores. The die is large, around 660 mm2, and in our benchmarks we saw a sizeable generational uplift in performance compared to the 2nd Generation Xeon offering. The response to Ice Lake Xeon has been mixed, given the competition in the market, but Intel has forged ahead by leveraging a more complete platform coupled with FPGAs, memory, storage, networking, and its unique accelerator offerings. Datacenter revenues, depending on the quarter you look at, are either up or down based on how customers are digesting their current processor inventories (as stated by CEO Pat Gelsinger).

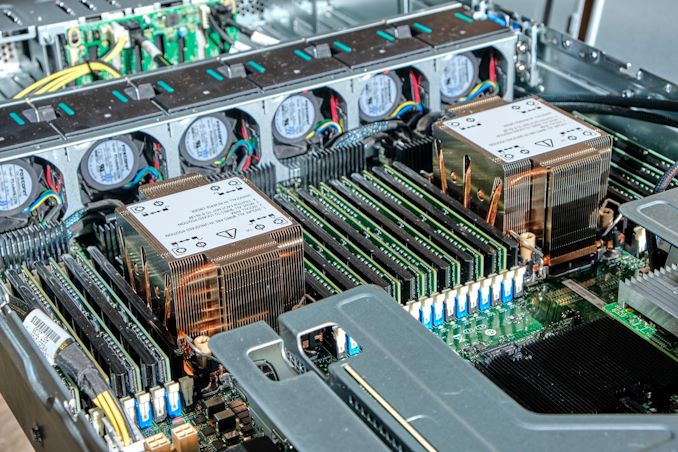

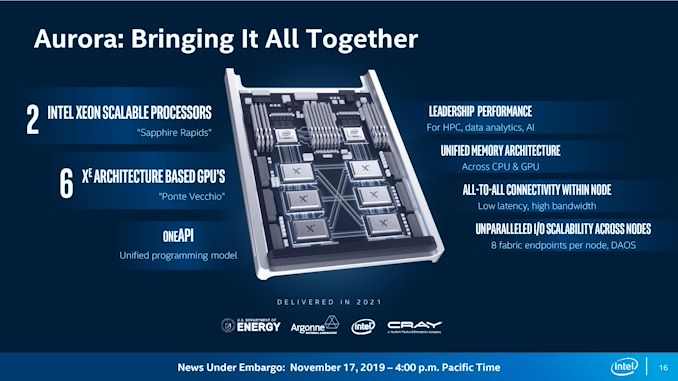

That being said, Intel has put a large amount of effort into discussing its 4th Generation Xeon Scalable platform, Sapphire Rapids. For example, we already know that it will be using >1600 mm2 of silicon for the highest core count solutions, with four tiles connected with Intel’s embedded bridge technology. The chip will have eight 64-bit memory channels of DDR5, support for PCIe 5.0, as well as most of the CXL 1.1 specification. New matrix extensions also come into play, along with data streaming accelerators and QuickAssist Technology, all built on the latest P-core designs currently present in the Alder Lake desktop platform, albeit optimized for datacenter use (which typically means AVX512 support and bigger caches). We already know that versions of Sapphire Rapids will be available with HBM memory, and the first customer for those chips will be the Aurora supercomputer at Argonne National Laboratory, coupled with the new Ponte Vecchio high-performance compute accelerator.

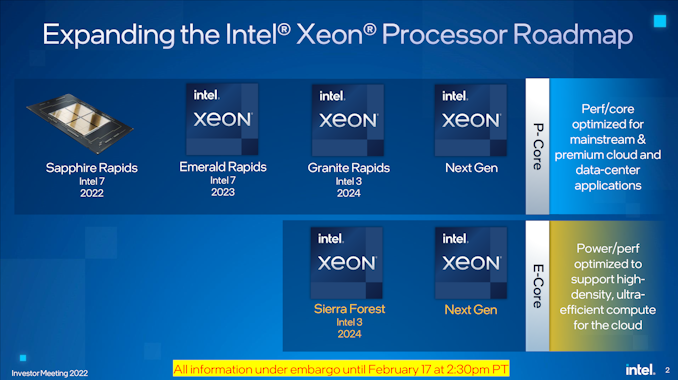

The launch of Sapphire Rapids is significantly later than originally envisioned several years ago, but we expect to see the hardware widely available during 2022, built on Intel 7 process node technology.

Next Generation Xeon Scalable

Looking beyond Sapphire Rapids, Intel is finally putting materials into the public to showcase what is coming up on the roadmap. After Sapphire Rapids, we will have a platform compatible Emerald Rapids Xeon Scalable product, also built on Intel 7, in 2023. Given the naming conventions, Emerald Rapids is likely to be the 5th Generation.

Emerald Rapids (EMR), as with some other platform updates, is expected to capture the low hanging fruit from the Sapphire Rapids design to improve performance, and to benefit from manufacturing updates. Platform compatibility means Emerald will have the same support when it comes to PCIe lanes, CPU-to-CPU connectivity, DRAM, CXL, and other IO features. We’re likely to see updated accelerators too. Exactly what the silicon will look like, however, is still an unknown. As Intel’s tiled product portfolio is still new, there’s a good chance it will be similar to Sapphire Rapids, but it could equally be something new, such as what Intel has planned for the generation after.

After Emerald Rapids is where Intel’s roadmap takes on a new highway. We’re going to see a diversification in Intel’s strategy on a number of levels.

Starting at the top is Granite Rapids (GNR), built entirely of Intel’s performance cores, on an Intel 3 process node for launch in 2024. Previously Granite Rapids had been on roadmaps as an Intel 4 node product; however, Intel has stated to us that the progression of the technology as well as the timeline of where it will come into play makes it better to put Granite on that Intel 3 node. Intel 3 is meant to be Intel’s second-generation EUV node after Intel 4, and we expect the design rules to be very similar between the two, so we suspect it’s not that much of a jump from one to the other.

Granite Rapids will be a tiled architecture, just as before, but it will also feature a bifurcated strategy in its tiles: it will have separate IO tiles and separate core tiles, rather than a unified design like Sapphire Rapids. Intel hasn’t disclosed how they will be connected, but the idea here is that the IO tile(s) can contain all the memory channels, PCIe lanes, and other functionality while the core tiles can be focused purely on performance. Yes, it sounds like what Intel’s competition is doing today, but ultimately it’s the right thing to do.

Granite Rapids will share a platform with Intel’s new product line, which starts with Sierra Forest (SRF), also on Intel 3. This new product line will be built from datacenter optimized E-cores, which we’re familiar with from Intel’s current Alder Lake consumer portfolio. The E-cores in Sierra Forest will be a later generation than the Gracemont E-cores we have today, but the idea here is to provide a product that focuses more on core density rather than outright core performance. This allows them to run at lower voltages and parallelize, assuming the memory bandwidth and interconnect can keep up.

Sierra Forest will be using the same IO die as Granite Rapids. The two will share a platform – we assume in this instance this means they will be socket compatible – so we expect to see the same DDR and PCIe configurations for both. If Intel’s numbering scheme continues, GNR and SRF will be Xeon Scalable 6th Generation products. Intel stated to us in our briefing that the product portfolio currently offered by Ice Lake Xeon products will be covered and extended by a mix of GNR and SRF Xeons based on customer requirements. Both GNR and SRF are expected to have full global availability when launched.

The E-core Sierra Forest focused on core density will end up being compared to AMD’s equivalent, which for Zen4c will be called Bergamo – AMD might have a Zen5 equivalent when SRF comes to market.

I asked Intel whether the move to GNR+SRF on one unified platform means the generation after will be a unique platform, or whether it will keep the two-generation platform retention that customers like. I was told that it would be ideal to maintain platform compatibility across the generations, although as these are planned out, it depends on timing and where new technologies need to be integrated. The earliest industry estimates (beyond CPU) for PCIe 6.0 are in the 2026 timeframe, and DDR6 is more like 2029, so unless there are more memory channels to add it’s likely we’re going to see parity between 6th and 7th Gen Xeon.

My other question to Intel was about Hybrid CPU designs – if Intel was now going to make P-core tiles and E-core tiles, what’s stopping a combined product with both? Intel stated that their customers prefer uni-core designs in this market as the needs from customer to customer differ. If one customer prefers an 80/20 split on P-cores to E-cores, there’s another customer that prefers a 20/80 split. Having a wide array of products for each different ratio doesn’t make sense, and customers already investigating this are finding out that the software works better with a homogeneous arrangement, with the split made at the system level rather than the socket level. So we’re not likely to see hybrid Xeons any time soon. (Ian: Which is a good thing.)

I did ask about the unified IO die: giving P-core only and E-core only Xeons the same number of memory channels and I/O lanes might not be optimal for either scenario. Intel didn’t really have a good answer here, aside from the fact that building them both into the same platform helped customers synergize non-returnable development costs across both CPUs, regardless of the one they used. I didn’t ask at the time, but we could see the door open to more Xeon-D-like scenarios with different IO configurations for smaller deployments, but we’re talking products that are 2-3+ years away at this point.

Xeon Scalable Generations

| Date | AnandTech | Codename | Abbr. | Max Cores | Node | Socket |
|---|---|---|---|---|---|---|
| Q3 2017 | 1st | Skylake | SKL | 28 | 14nm | LGA 3647 |
| Q2 2019 | 2nd | Cascade Lake | CXL | 28 | 14nm | LGA 3647 |
| Q2 2020 | 3rd | Cooper Lake | CPL | 28 | 14nm | LGA 4189 |
| Q2 2021 | 3rd | Ice Lake | ICL | 40 | 10nm | LGA 4189 |
| 2022 | 4th | Sapphire Rapids | SPR | * | Intel 7 | LGA 4677 |
| 2023 | 5th | Emerald Rapids | EMR | ? | Intel 7 | ** |
| 2024 | 6th | Granite Rapids | GNR | ? | Intel 3 | ? |
| 2024 | 6th | Sierra Forest | SRF | ? | Intel 3 | ? |
| >2024 | 7th | Next-Gen P | ? | ? | ? | ? |
| >2024 | 7th | Next-Gen E | ? | ? | ? | ? |

* Estimate is 56 cores. ** Estimate is LGA4677.

For both Granite Rapids and Sierra Forest, Intel is already working with key ‘definition customers’ for microarchitecture and platform development, testing, and deployment. More details to come, especially as we move through Sapphire and Emerald Rapids during this year and next.

144 Comments


mode_13h - Monday, February 21, 2022 - link

Aside from DSA and AMX, I think the answer is their next Epyc.

How much DSA and AMX are really worth is yet to be determined. I'd imagine Epyc's additional cores can more than make up for the lack of DSA. As for AMX, I expect it'll offer unmatched inferencing performance *for a CPU*, but the world seems to be moving beyond CPUs for that sort of thing. Time will tell.

mode_13h - Tuesday, February 22, 2022 - link

DSA, for any who don't know, refers to the Data Streaming Accelerator engine, in Sapphire Rapids. It's a "high-performance data copy and transformation accelerator". There's some info on it, here: https://01.org/blogs/2019/introducing-intel-data-s...

The thing to keep in mind is that anything one of these engines does could also be performed by a CPU thread. Obviously, DSA is much smaller (and more limited) than a CPU core, but if you've got more cores (i.e. Epyc's 64 cores vs. SPR's 56 cores), then that's more threads (16, in this case) you could spend on async data movement, if necessary.

So, while DSA might be a win in terms of performance per mm^2 of silicon, I don't see it as a huge differentiator or net advantage for SPR.

Spunjji - Tuesday, February 22, 2022 - link

As always with the Intel pushers, they're not interested in the utility of Intel's unique features so much as them not being things that AMD have. They never talk about the unique features that AMD have / have had that have similar niche appeal, for example the VM security features AMD built into Epyc.

mode_13h - Wednesday, February 23, 2022 - link

FWIW, I try to stay non-partisan. My goal is to try and present a realistic interpretation of the facts, as I understand them. Regardless of whether it's a key differentiator, DSA is undeniably new and interesting.

Same for AMX, I might add (i.e. new and interesting) - can't wait to see its real-world performance!

Spunjji - Tuesday, February 22, 2022 - link

Blah.

kgardas - Friday, February 18, 2022 - link

There are rumors spreading that even SR will provide some of its features like avx512, amx, hbme etc. as a software defined (purchased) feature. Would be glad if this would be mentioned here -- if it's already settled or not.

mode_13h - Monday, February 21, 2022 - link

There's not much to write about it, until Intel announces something. All we know is that they're laying the groundwork for hardware feature licensing.

zodiacfml - Friday, February 18, 2022 - link

Finally, Intel Atom's aspirations coming to fruition. Back then, if I remember correctly, it was meant to provide a staggering amount of cores and as a low power CPU for IoT/cheap devices. But no, Intel left Atom 1-2 Generations behind Core for years until recently.

Mike Bruzzone - Friday, February 18, 2022 - link

Adding some analytical data and notations to Dr. Ian’s commentary, think of it as a side bar;

Dr. Ian; “Intel is quoting more shipments of its latest Xeon products in December than AMD shipped in all of 2021, and the company is launching the next generation Sapphire Rapids Xeon Scalable platform later in 2022”

Camp Marketing; on INTC 10 Q/K on channel product category and price data Intel sold 10,216,112 Xeon in q3 and 10,105,561 in q4. Intel quarterly shipments are down from 40 M units per quarter at Skylake/Cascade Lake peak supply.

Dr Ian, always tactful, “response to Ice Lake Xeon has been mixed”

Camp Marketing: On CEO Gelsinger and DCG GM Rivera 1 M unit Ice Lake sold in q4, this analyst has calculated approximately 1.3 M full run to date and if 2 M that’s no more than Pentium Pro P6 large cache server volume 1997-97. Xeon Ice is a late market run end dud ala Dempsey between Netburst and Core. Ice suffers the same offered between Cascade Lakes and Sapphire Rapids. Customers want Sapphire not Ice.

This analyst has Sapphire Rapids shipping since q3 parallel Genoa 5 nm, both in customer direct risk production volume and the reason is AMD lost its 7 nm area for performance cost advantage on TSMC markup in q3. Subsequently, AMD had to start shipping Genoa to make their margin incorporating TSMC markup. AMD essentially charges a price premium on / above foundry mark up and needs to stay 1.5 nodes ahead to make up the difference in price ensuring AMD gross margin objective.

In the commercial space, unlike Arc consumer GPU where Intel risks being mauled by enthusiasts shipping a less than whole product, Intel Xeon at xx% whole ships so long as customers can program the device. In this competitive situation where Genoa is shipping, Intel does not wait.

Dr. Ian: [Intel] digesting their current processor inventories (as stated by CEO Pat Gelsinger).

Camp Marketing; “Digesting” a clever term referring to the Intel Skylake / Cascade Lakes monopoly surplus overhang sitting in use and in secondary channels for resale, 400 M units worth were sold. This can be a good thing on refurbishing the installed base to dGPU compute if DDR 4 is not system bus limited for sGPU compute on cache starved XSL/XCL control plane processing. The channel and installed base definitively want to keep these servers financially productive and acceleration is the key.

Pursuant “digesting” Intel dumped on AMD in q3 and q4 back generation Core and Cascade Lakes driving consumer components margin take on OEM price making in relation the Intel offer to variable cost for Intel in q3 and q4 and specific to AMD in q4. In q4 Xeon and Epyc are the only production categories that paid ahead earning Intel $723 and AMD $733 net per unit. Core and Ryzen/Radeon as I described q4 and Intel Core in q3 and q4 delivered net push against variable cost.

Dr Ian: referring to Sapphire Rapids, “we already know that it will be using >1600 mm2”

Camp Marketing Notation; 350 to 400 mm2 is very much in the traditional Intel sweet spot for LCC manufacturability

Dr. Ian speculating resurgence in Xeon D application specific variants?

Xeon D is a dud. No generation was supplied beyond slim volume and many sold off into NAS appliances. Difficult to say what Intel can do here [on over] segmentation [?] where past 'D' attempts were essentially rejected by the customer base.

Mike Bruzzone, Camp Marketing

Mike Bruzzone - Friday, February 18, 2022 - link

Adding for clarity, AMD sold 2,999,867 Milan in q4 2021. mb