The Bulldozer Review: AMD FX-8150 Tested

by Anand Lal Shimpi on October 12, 2011 1:27 AM EST

The Architecture

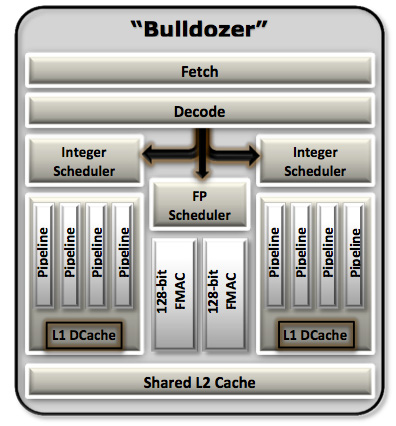

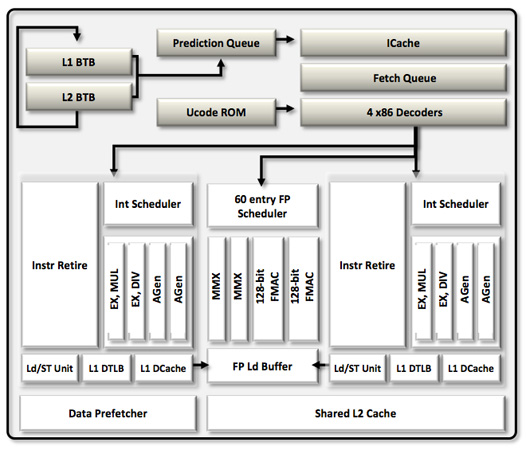

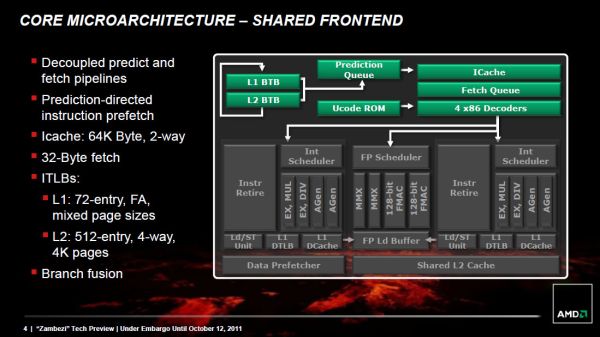

We'll start, logically, at the front end of a Bulldozer module. The fetch and decode logic in each module is shared by both integer cores. The role this logic plays is to fetch the next instruction in the thread being executed, decode the x86 instruction into AMD's own internal format, and pass the decoded instruction on to the scheduling hardware for execution.

AMD widened the K8 front end with Bulldozer. Each module is now able to fetch and decode up to four x86 instructions from a single thread in parallel. Each of the four decoders is equally capable. Remember that each Bulldozer module appears as two cores, yet the front end can only pick 4 instructions to fetch and decode from a single thread at a time. A single Bulldozer module can switch between threads as often as every clock.

Decode hardware isn't very expensive on its own, but duplicating it four times across multiple cores quickly adds up. Although decode width has increased for a single core, multi-core Bulldozer configurations can actually be at a disadvantage compared to previous AMD architectures. Let's look at the table below to understand why:

| Front End Comparison | AMD Phenom II | AMD FX | Intel Core i7 |
|---|---|---|---|
| Instruction Decode Width | 3-wide | 4-wide | 4-wide |
| Single Core Peak Decode Rate | 3 instructions | 4 instructions | 4 instructions |
| Dual Core Peak Decode Rate | 6 instructions | 4 instructions | 8 instructions |
| Quad Core Peak Decode Rate | 12 instructions | 8 instructions | 16 instructions |
| Six/Eight Core Peak Decode Rate | 18 instructions (6C) | 16 instructions | 24 instructions (6C) |

For a single instruction thread, Bulldozer offers more front end bandwidth than its predecessor. The front end is wider and just as capable so this makes sense. But note what happens when we scale up core count.

Since fetch and decode hardware is shared per module, and AMD counts each module as two cores, given an equivalent number of cores the old Phenom II actually offers a higher peak instruction fetch/decode rate than the FX. The theory is obviously that the situations where you're fetch/decode bound are infrequent enough to justify the sharing of hardware. AMD is correct for the most part. Many instructions can take multiple cycles to decode, and by switching between threads each cycle the pipelined front end hardware can be more efficiently utilized. It's only in unusually bursty situations where the front end can become a limit.
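The scaling in the table can be reproduced with a few lines of arithmetic. The sketch below is only an illustration of the counting argument: the function and dictionary names are made up for this example, and it assumes peak rate is simply the number of front ends multiplied by decode width, with one front end per core on Phenom II and Core i7 and one per two-core module on FX.

```python
import math

def peak_decode_rate(cores, decode_width, cores_per_front_end):
    """Peak x86 instructions decoded per clock across `cores` cores.

    Assumes every front end decodes at full width each clock.
    """
    front_ends = math.ceil(cores / cores_per_front_end)
    return front_ends * decode_width

# (decode width, cores sharing one front end), taken from the table above.
ARCHS = {
    "AMD Phenom II": (3, 1),
    "AMD FX": (4, 2),        # fetch/decode is shared by the two cores in a module
    "Intel Core i7": (4, 1),
}

def decode_table(core_counts):
    """Peak decode rate per architecture for each core count."""
    return {
        name: [peak_decode_rate(c, width, share) for c in core_counts]
        for name, (width, share) in ARCHS.items()
    }

if __name__ == "__main__":
    for name, rates in decode_table([1, 2, 4]).items():
        print(name, rates)
```

The FX row only pulls ahead of Phenom II at one core; from two cores up, the shared front end halves the number of decoders in play.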

Compared to Intel's Core architecture however, AMD is at a disadvantage here. In the high-end offerings where Intel enables Hyper Threading, AMD has zero advantage as Intel can weave in instructions from two threads every clock. Against the non-HT enabled Core CPUs the picture isn't so clear. Intel maintains a higher instantaneous decode bandwidth per clock, but overall decoder utilization could go down as a result of only being able to fill each fetch queue from a single thread.

After the decoders AMD enables certain operations to be fused together and treated as a single operation down the rest of the pipeline. This is similar to what Intel calls micro-ops fusion, a technology first introduced in its Banias CPU in 2003. Compare + branch, test + branch and some other operations can be fused together after decode in Bulldozer—effectively widening the execution back end of the CPU. This wasn't previously possible in Phenom II and obviously helps increase IPC.

A Decoupled Branch Predictor

AMD didn't disclose too much about the configuration of the branch predictor hardware in Bulldozer, but it is quick to point out one significant improvement: the branch predictor is now significantly decoupled from the processor's front end.

The role of the branch predictor is to intercept branch instructions and predict their target address, rather than allowing for tons of cycles to go by until the branch target is known for sure. Branches are predicted based on historical data. The more data you have, and the better your branch predictors are tuned to your workload, the more accurate your predictions can be. Accurate branch prediction is particularly important in architectures with deep pipelines as a mispredict causes more instructions to be flushed out of the pipe. Bulldozer introduces a significantly deeper pipeline than its predecessor (more on this later), and thus branch prediction improvements are necessary.

In both Phenom II and Bulldozer, branches are predicted in the front end of the pipe alongside the fetch hardware. In Phenom II however, any stall in the fetch pipeline (e.g. fetching an instruction that wasn't in cache) would stop the whole pipeline including future branch predictions. Bulldozer decouples the branch prediction hardware from the fetch pipeline by way of a prediction queue. If there's a stall in the fetch pipeline, Bulldozer's branch prediction hardware is allowed to run ahead and continue making future predictions until the prediction queue is full.
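To make the run-ahead behavior concrete, here is a toy cycle-by-cycle model. It is a sketch under loud assumptions, not a description of AMD's hardware: the one-prediction-per-cycle rate, the queue depth of 4, and the function names are all hypothetical, since AMD did not disclose the predictor's configuration.

```python
from collections import deque

def coupled_predictions(stalls):
    """Coupled design: the predictor only advances when fetch is not stalled."""
    return sum(1 for stalled in stalls if not stalled)

def decoupled_predictions(stalls, queue_depth):
    """Decoupled design: predictions go into a queue that fetch drains.

    Each cycle, fetch consumes one queued prediction unless it is stalled,
    then the predictor adds one prediction if the queue has room.
    `queue_depth` is a hypothetical parameter for illustration.
    """
    queue = deque()
    made = 0
    for stalled in stalls:
        if not stalled and queue:
            queue.popleft()          # fetch consumes the oldest prediction
        if len(queue) < queue_depth:
            queue.append(made)       # predictor runs ahead while there is room
            made += 1
    return made

if __name__ == "__main__":
    # Two running cycles, a five-cycle fetch stall, then two running cycles.
    stalls = [False, False, True, True, True, True, True, False, False]
    print("coupled:  ", coupled_predictions(stalls))
    print("decoupled:", decoupled_predictions(stalls, queue_depth=4))
```

In this model the decoupled predictor keeps working through the stall until the queue fills, which is the behavior described above; how much that helps in practice depends on the real queue depth and stall lengths.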

We'll get to the effectiveness of this approach shortly.

Scheduling and Execution Improvements

As with Sandy Bridge, AMD migrated to a physical register file architecture with Bulldozer. Data is now only stored in one location (the physical register file) and is tracked via pointers back to the PRF as operations make their way through the execution engine. This is a move to save power as copying data around a chip is hardly power efficient.

The buffers and queues that feed into the execution engines of the chip are all larger on Bulldozer than they were on Phenom II. Larger data structures allow for better instruction level parallelism when trying to execute operations out of order. In other words, the issue hardware in Bulldozer is beefier than its predecessor.

Unfortunately where AMD took one step forward in issue hardware, it does a bit of a shuffle when it comes to execution resources themselves. Let's start with the positive: Bulldozer's integer execution cores.

Integer Execution

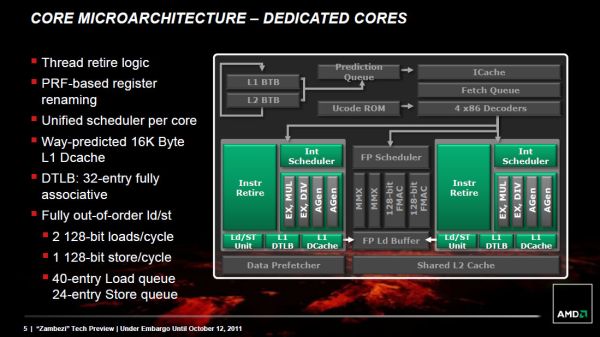

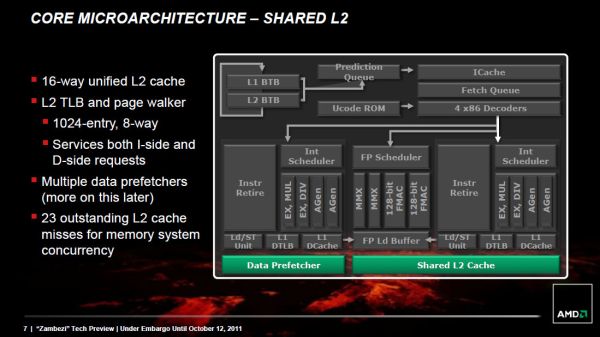

Each Bulldozer module features two fully independent integer cores. Each core has its own integer scheduler, register file and 16KB L1 data cache. The integer schedulers are both larger than their counterparts in the Phenom II.

The biggest change here is each integer core now has two ports instead of three. A single integer core features two AGU/ALU ports, compared to three in the previous design. AMD claims the third ALU/AGU pair went mostly unused in Phenom II, and as a result it's been removed from Bulldozer.

With larger structures feeding into the integer cores, AMD should have an easier time making use of the integer units than in previous designs. AMD could, in theory, execute more integer operations per core in Phenom II; however, AMD claims the architecture was typically bound elsewhere.

The Shared FP Core

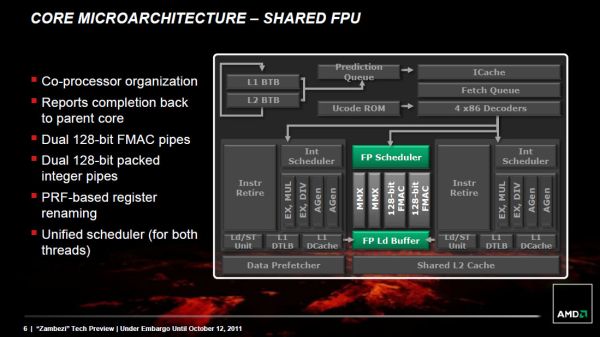

A single Bulldozer module has a single shared FP core for use by up to two threads. If there's only a single FP thread available, it is given full access to the FP execution hardware, otherwise the resources are shared between the two threads.

Compared to a quad-core Phenom II, AMD's eight-core (quad-module) FX sees no drop in floating point execution resources. AMD's architecture has always had independent scheduling for integer and floating point instructions, and we see the same number of execution ports between Phenom II cores and FX modules. Just as is the case with the integer cores, the shared FP core in a Bulldozer module has larger scheduling hardware in front of it than the FPU in Phenom II.

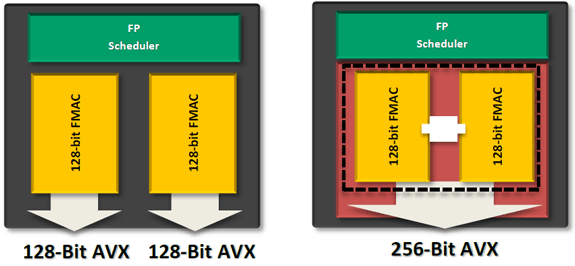

The problem is AMD had to increase the functionality of its FPU with the move to Bulldozer. The Phenom II architecture lacks SSE4 and AVX support, both of which were added in Bulldozer. Furthermore, AMD chose Bulldozer as the architecture to include support for fused multiply-add instructions (FMA). Enabling FMA support also increases the relative die area of the FPU. So while the throughput of Bulldozer's FPU hasn't increased over K8, its capabilities have. Unfortunately this means that peak FP throughput running x87/SSE2/3 workloads remains unchanged compared to the previous generation. Bulldozer will only be faster if newer SSE, AVX or FMA instructions are used, or if its clock speed is significantly higher than Phenom II.

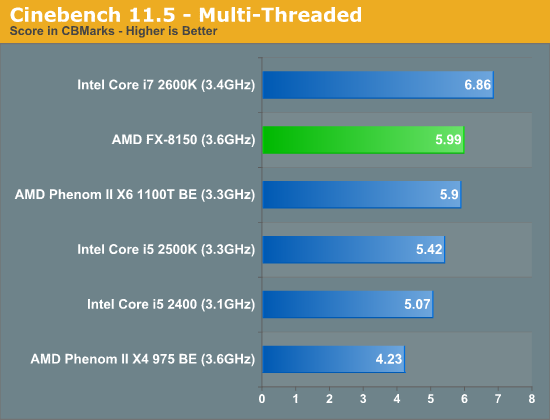

Looking at our Cinebench 11.5 multithreaded workload we see the perfect example of this performance shuffle:

Despite a 9% higher base clock speed (more if you include turbo core), a 3.6GHz 8-core Bulldozer is only able to outperform a 3.3GHz 6-core Phenom II by less than 2%. Heavily threaded floating point workloads may not see huge gains on Bulldozer compared to their 6-core predecessors.

There's another issue. Bulldozer, at least at launch, won't have to simply outperform its quad-core predecessor. It will need to do better than a six-core Phenom II. In this comparison unfortunately, the Phenom II has the definite throughput advantage. The Phenom II X6 can execute 50% more SSE2/3 and x87 FP instructions than a Bulldozer based FX.
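The two figures quoted above (the roughly 9% clock advantage and the 50% FP throughput gap) follow from simple ratios. The sketch below works them out; it assumes, as the text states, that a Phenom II core and an FX module have the same number of FP execution ports, so per-clock x87/SSE2/3 throughput scales with FPU count. The helper names are made up for this example.

```python
def percent_more(a, b):
    """How much larger `a` is than `b`, in percent."""
    return (a / b - 1) * 100

# Base clocks from the Cinebench comparison above.
FX_8150_GHZ = 3.6
PHENOM_II_X6_GHZ = 3.3

# FPU counts: one per core on Phenom II X6, one shared FP core per module on FX.
PHENOM_II_X6_FPUS = 6
FX_8150_FPUS = 4  # eight cores = four modules

if __name__ == "__main__":
    clock = percent_more(FX_8150_GHZ, PHENOM_II_X6_GHZ)
    fp = percent_more(PHENOM_II_X6_FPUS, FX_8150_FPUS)
    print(f"FX base clock advantage: {clock:.1f}%")
    print(f"Phenom II X6 per-clock FP advantage: {fp:.0f}%")
```

A 9% clock bump cannot make up a 50% deficit in execution resources, which is consistent with the near tie in the Cinebench result.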

Since the release of the Phenom II X6, AMD's major advantage has been in heavily threaded workloads, particularly floating point workloads, thanks to the sheer number of resources available per chip. Bulldozer actually takes a step back in this regard and as a result, you will see some of those same workloads perform the same as, if not worse than, the outgoing Phenom II X6.

Compared to Sandy Bridge, Bulldozer only has two advantages in FP performance: FMA support and higher 128-bit AVX throughput. There's very little code available today that uses AMD's FMA instruction, while the 128-bit AVX advantage is tangible.

Cache Hierarchy and Memory Subsystem

Each integer core features its own dedicated L1 data cache. The shared FP core sends loads/stores through either of the integer cores, similar to how it works in Phenom II although there are two integer cores to deal with now instead of just one. Bulldozer enables fully out-of-order loads and stores, an improvement over Phenom II putting it on parity with current Intel architectures. The L1 instruction cache is shared by the entire Bulldozer module, as is the L2 cache.

The instruction cache is a large 64KB 2-way set associative cache, similar in size to the Phenom II's L1 instruction cache but obviously shared by more "cores". A four-core Phenom II would have 256KB of total L1 I-Cache, while a four-core Bulldozer will have half of that. The L1 data caches are also significantly smaller than those of Bulldozer's predecessor. While Phenom II offered a 64KB L1 D-Cache per core, Bulldozer only offers 16KB per integer core.
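The L1 totals for a four-core part can be checked with the short sketch below. It uses only the sizes given in the text (64KB I-cache per Phenom II core or per Bulldozer module, 64KB versus 16KB D-cache per core); the function name and its parameters are illustrative.

```python
import math

def total_l1_kb(cores, kb_per_cache, cores_per_cache=1):
    """Total L1 capacity in KB when each cache serves `cores_per_cache` cores."""
    caches = math.ceil(cores / cores_per_cache)
    return caches * kb_per_cache

if __name__ == "__main__":
    cores = 4
    # Instruction cache: private per core on Phenom II, shared per module on Bulldozer.
    print("Phenom II L1 I:", total_l1_kb(cores, 64), "KB")
    print("Bulldozer L1 I:", total_l1_kb(cores, 64, cores_per_cache=2), "KB")
    # Data cache: private per core on both, but much smaller on Bulldozer.
    print("Phenom II L1 D:", total_l1_kb(cores, 64), "KB")
    print("Bulldozer L1 D:", total_l1_kb(cores, 16), "KB")
```

At equal core counts Bulldozer ends up with half the total L1 instruction cache and a quarter of the total L1 data cache, which is what the larger per-module L2 has to compensate for.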

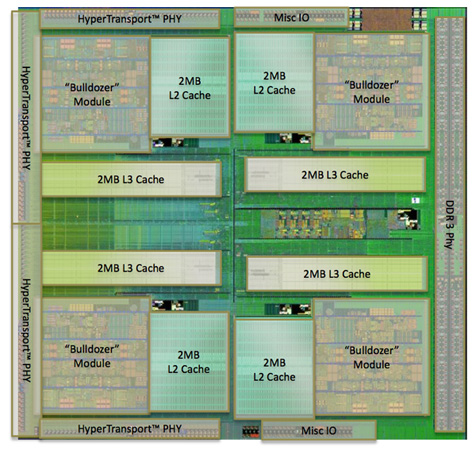

The L2 cache is much larger than what we saw in multi-core Phenom II designs however. Each Bulldozer module has a private 2MB L2 cache.

There's a single 8MB L3 cache that's shared among all Bulldozer modules on a chip. In its first incarnation, AMD has no plans to offer a desktop part without an L3 cache. However AMD indicated that the L3 cache was only really useful in server workloads and we might expect future Bulldozer derivatives (ahem, Trinity?) to forgo the L3 cache entirely as a result.

Cache accesses require more clocks in Bulldozer, due to a combination of size and AMD's desire to make Bulldozer a very high clock speed part...

430 Comments

Ryan Smith - Wednesday, October 12, 2011 - link

Good point. Fixed.

Marburg U - Wednesday, October 12, 2011 - link

they have a bloating cache with something wrong inside

http://www.xbitlabs.com/images/cpu/amd-fx-8150/t5....

npp - Wednesday, October 12, 2011 - link

Sun went an even more extreme route regarding FP performance on its Niagara CPUs - as far as I remember, the first generation chip had a single FPU shared across eight cores. Performance was not even close to a dual-core Core 2 Duo at that time. So that was what I thought when I first read about the "module" approach in Bulldozer maybe a year ago - man, this must be geared towards server workloads primarily, it will suffer on the desktop. I guess FPU count = core count would have been more appropriate for the FX line.

hasu - Wednesday, October 12, 2011 - link

Would this be a good candidate for web server applications because of its excellent multi-threaded performance? How about to host a bunch of Virtual Machines?

sep332 - Wednesday, October 12, 2011 - link

I've also been wondering if running a lot of VMs would work better on this CPU. But I don't really know how you'd benchmark that kind of thing. Time and total energy consumption to serve 20,000 web pages from 12 VMs?

magnetik - Wednesday, October 12, 2011 - link

I've been waiting for this moment for months and months.

Reading the whole thing now...

themossie - Wednesday, October 12, 2011 - link

This processor is worse than the Phenom II X6 for most of my workloads. My next machine will be Sandy/Ivy Bridge.

But... we haven't seen this clock ramp up yet. As Anand mentions on page 3 - Remember the initial Pentium 4s? The Willamette 1.4 and 1.5 GHz processors were clearly worse than the competition, to say nothing of the PIII line. In time the P4 consistently beat the much higher IPC AMD processors on most workloads, especially after introducing Hyper-threading. This really does feel like a new Pentium IV! Trying a design based on clock speed and one-upping Intel's hyperthreading by calling 4 '1.5' cores 8 (we hyperthread your hyperthreading!) - it will be a wild ride.

At this point, I don't see anyone beating Intel at process shrink and they're a moving target. But competitive pricing, quick ramp up and a few large server wins can still save the day. Dream of crazy clockspeeds :-)

themossie - Wednesday, October 12, 2011 - link

Upon further reflection...

- Expect to see Bulldozer targeted towards servers and consumers who think "8 cores" sounds sexy, at least until clockspeed ramps up.

- Processor performance is not the limiting factor for most consumer applications. AMD will push APUs very heavily, something they can beat Intel at. Piledriver should drive a good price/performance bargain for OEMs, and for laptops may have idle power consumption in shouting distance of Sandy Bridge.

I'm more optimistic about AMD now. But my next machine will still be Sandy Bridge / Ivy Bridge.

wolfman3k5 - Wednesday, October 12, 2011 - link

I see people that say that they'll be waiting for Piledriver. Why not wait for AMD Drillbit, or AMD Dremel? How about AMD Screwdriver or AMD Nailpuller? Tomorrow my 2600K arrives. I'm done. I had a build with an ASUS 990FX ready for Bulldozer, but I will "bulldoze" the part back to NewEgg.

I must admit, I was worried when I saw the large amounts of L2 cache before the launch. AMD engineers must have been taking the summer off, and decided to throw more cache at the problem. AMD needs a new engineering team. Why the hell can Intel get it right and they can't?

AMD, your CPU engineers are lazy and incompetent. I mean, it only took you "only" four years to get your own version of the Pentium 4.

The bottom line is that its time to fire your lazy retarded and incompetent engineers, and scout for some talent. That's what every other company does that wants to succeed, regardless of the industry. I mean, look at KIA and Hyundai for example, they went out and hired the best designers from Audi and the best engineers they could buy with money. Throw some more money at the problem AMD and solve your problems. And if those lazy fat fucks in Texas that you call engineers don't deliver, look somewhere else. Israel or Russia maybe? Who knows... Just my 2 cents.

IKeelU - Wednesday, October 12, 2011 - link

I know nothing of AMD employees' work ethic, but...their problems may have nothing to do with raw technical talent. But you are right about one thing - throwing money at a problem can be helpful, and that's likely why Intel has succeeded for so long. Intel has a lot of cash, and a lot of assets (such as equipment). They can afford the best design/debugging tools (whether they buy'em or make'em), which makes it much easier to develop a top product given the same amount of microchip engineering talent.

And just because they're based in Texas doesn't mean their staff is all-American. Like most US tech firms, quite a bit of their talent was probably imported.