The Bulldozer Aftermath: Delving Even Deeper

by Johan De Gelas on May 30, 2012 1:15 AM EST

It has been months since AMD's Bulldozer architecture surprised the hardware enthusiast community with performance all over the place. The opinions vary wildly from “server benchmarks are here, and they're a catastrophe” to “Best Server Processor of 2011”. The least you can say is that the idiosyncrasies of AMD's latest CPU architecture have stirred up a lot of dust.

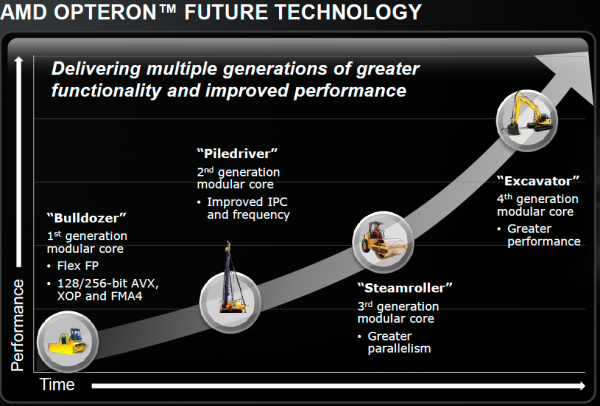

Now that the dust has settled, Bulldozer chips account for more than half of Opteron shipments and revenues. Since AMD's Financial Analyst Day (February 2, 2012), we have new code names: the improved Bulldozer architecture "Piledriver" will power the "Abu Dhabi" chip, a replacement for the current top server chip "Interlagos". AMD is clearly committed to the new "Bulldozer" direction: fitting as many cores as possible into a certain power envelope to improve thread throughput, while trying to "hold the line" on single-threaded performance.

In theory, the new 16-core Interlagos should have offered somewhere around a 33% boost in most highly-threaded applications. The reality is unfortunately not that rosy: in many highly-threaded server applications such as OLAP databases and virtualization, the new Opteron 6200 fails to impress and is only a few percent faster than its older brother, the 12-core Magny-Cours. There are even times when the older Opteron is faster.

Some, including sources inside AMD, have blamed Global Foundries for not delivering higher clocked SKUs. Sure, the clock speed targets for Interlagos were probably closer to 3GHz instead of 2.3GHz. But that does not explain why the extra integer cores do not deliver. We were promised up to 50% higher performance thanks to the 33% extra cores, but we got 20% at the most.
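The gap between promise and delivery is easier to see per core. The short sketch below only restates the figures quoted above (12 versus 16 cores, a promised gain of up to 50%, an observed gain of 20% at most); the function name is made up for illustration, and the calculation assumes throughput scales linearly with core count, which real server workloads rarely do.

```python
# Core counts and gains as quoted in the article text.
OLD_CORES = 12   # Magny-Cours
NEW_CORES = 16   # Interlagos


def per_core_ratio(total_gain, old_cores=OLD_CORES, new_cores=NEW_CORES):
    """Throughput of one new core relative to one old core.

    total_gain is the chip-level speedup as a fraction (0.20 means 20%).
    Assumes linear scaling with core count, a simplification.
    """
    return (1.0 + total_gain) / (new_cores / old_cores)


core_increase = NEW_CORES / OLD_CORES - 1.0   # the "33% extra cores"
promised = per_core_ratio(0.50)               # each new core faster than an old one
observed = per_core_ratio(0.20)               # each new core slower than an old one

print(f"extra cores: {core_increase:.0%}")
print(f"per-core throughput if the promise had held: {promised:.3f}x")
print(f"per-core throughput at the observed best case: {observed:.3f}x")
```

Under these assumptions, the promised figure implied each new integer core doing about 12% more work than a Magny-Cours core, while the best observed case implies about 10% less.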

The combination of low single-threaded performance, the failure to really outperform the previous generation in highly-threaded applications, the relatively high power consumption at full load, and the fact that the CPU is designed for high clock speeds gives a lot of people a certain sense of déjà vu: is this AMD's version of the Pentium 4?

One of our readers, "Iketh", spoke up and voiced the opinion of many of our readers:

"Unfortunately, the thought still in the back of my mind while reading was why did AMD reinvent the Pentium 4? I just don't get it."

Another reader nicknamed "Clagmaster" commented:

"A core this complex in my opinion has not been optimized to its fullest potential. Expect better performance when AMD introduces later steppings of this core with regard to power consumption and higher clock frequencies."

Although there have already been quite a few attempts to understand what Bulldozer is all about, we cannot help but feel that many questions are still unanswered. Since this architecture is the foundation of AMD's server, workstation, and notebook future (Trinity is based on the improved Bulldozer core with the codename "Piledriver"), it is interesting enough to dig a little deeper. Did AMD take a wrong turn with this architecture? And if not, can the first implementation "Bulldozer" be fixed relatively easily?

We decided to delve deeper into the SAP and SPEC CPU2006 results, as well as profiling our own benchmarks. Using the profiling data and correlating it with what we know about AMD's Bulldozer and Intel's Sandy Bridge, we attempt to solve the puzzle.

84 Comments

Zoomer - Thursday, May 31, 2012 - link

True. It's probably better out way back then, but synthesized, than to come out maybe next year with all their lovingly fully customized, hand placed transistors. That's if they don't go bankrupt first.

wolfman3k5's probably going to call nVidia, 3dfx, ATi (then), most FPGA program design houses, etc, lazy, too.

misiu_mp - Monday, June 11, 2012 - link

A large margin of error means that you have a lot of space to make errors with little consequence.

You meant of course that engineers have small margins of error in their work.

500MM - Wednesday, May 30, 2012 - link

http://images.anandtech.com/graphs/graph5057/42770...

If lower was better, AMD would have one kickass CPU. The caption is wrong.

JohanAnandtech - Friday, June 1, 2012 - link

Fixed, thx!

weebnuts - Wednesday, May 30, 2012 - link

The problem with all these benchmarks is that most organizations are going to be using this for Xen or VMware. The idea is that with more cores, you can run more VMs, especially if you are trying to implement Virtual Desktops. How do the processors compare when you are loading the server to 80-90% capacity with lots of VMs? That's a real world comparison I want to see.

Iketh - Wednesday, May 30, 2012 - link

I was dying for information like this. Thank you!

And as for that quote on the first page by Iketh, that guy is a genius!! :D

Aone - Thursday, May 31, 2012 - link

1) Maybe I missed something, but should "Higher is better" be for "Data Cache hitrate", i.e. opposite to cache misses?

2) And on the chart "L2 Cache hitrate", is it correct that "Opteron 6276" tag is shown on first line while "Opteron 6174" on the last line? I thought Opteron 6174 was faster in MS SQL than Opteron 6276.

mrdcook - Thursday, May 31, 2012 - link

There are a few new instructions in Bulldozer's architecture that, for certain specific computations, can make it 10X faster than Intel. For example, FMA. An FMA does a multiply and then an add as one instruction, rounding only once. Combining the multiply and the add isn't such a big deal (and in many cases can even be counter-productive), but rounding only once is very important in some cases.

For example, assume you have 3 digits of accuracy and want to calculate (1.23 * 2.31 - 2.84). Without FMA, you calculate Round(1.23 * 2.31) = 2.84, then you calculate Round(2.84 - 2.84) = 0. With FMA, you calculate 1.23 * 2.31 = 2.8413, then you calculate Round(2.8413 - 2.84) = 0.0013. While that may seem contrived (it was!), the difference is significant in certain simulations and calculations.
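The three-digit example can be reproduced with Python's `decimal` module, which lets the working precision be set explicitly and offers a fused multiply-add on its `Context`. This is only a decimal illustration of the rounding effect described above, not a model of the binary hardware instruction; the variable names are illustrative.

```python
from decimal import Context, Decimal, ROUND_HALF_EVEN

# Three significant digits of working precision, as in the example.
ctx = Context(prec=3, rounding=ROUND_HALF_EVEN)

a = Decimal("1.23")
b = Decimal("2.31")
c = Decimal("-2.84")

# Separate multiply and add: the product is rounded to 2.84 first,
# so the subtraction cancels completely.
product = ctx.multiply(a, b)
unfused = ctx.add(product, c)

# Fused multiply-add: the exact product 2.8413 is kept internally
# and only the final result is rounded.
fused = ctx.fma(a, b, c)

print("rounded product:", product)
print("multiply then add:", unfused)
print("fused multiply-add:", fused)
```

The unfused path loses the answer entirely to cancellation, while the fused path keeps 0.0013, which is the whole point of rounding only once.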

When doing math, computers have a very specific level of accuracy -- a certain number of digits of precision. If you want your simulations to come out right, you have to take these limits into account. Learning how to account for the computer's rounding errors is a bit of a black art.

Mathematicians design algorithms in terms of matrix multiplications and dot products, and if you translate those algorithms directly into computer multiplications and additions, you tend to end up with a lot of cancellation errors like the example given above. You can hire a computer science grad student to rework your algorithm to not lose accuracy, but that is expensive and has to be done for every new algorithm. Or you can use an FMA for the dot products and the matrix multiplications (the high-accuracy dot product and matrix multiplication libraries already do this).

FMA in software is slow. Single-precision emulated FMA isn't too bad since you can use double-precision to help with the hardest bits of the emulation. The result is that you can do one fmaf in about 4X the amount of time it would take to do a single a*b+c. However, SSE2 allows you to do 4 a*b+c at a time, so emulated single-precision FMA ends up being about 15X slower than optimized SSE2 non-fused multiply-add. Double-precision is harder, taking about 10 times longer than a single a*b+c, so it ends up being 20X slower than non-fused multiply-add.
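The slowdown figures combine two factors: the cost of one emulated FMA relative to one plain a*b+c, and the number of plain operations SSE2 can do at once. The sketch below just multiplies them out. The four-lane figure for single precision is from the comment; the two-lane figure for double precision is an assumption based on 128-bit SSE2 registers holding two doubles, and the function name is hypothetical.

```python
def slowdown_vs_simd(emulated_cost, simd_lanes):
    """Cost of emulated scalar FMA relative to vectorized, non-fused a*b+c.

    emulated_cost: time of one emulated FMA in units of one scalar a*b+c.
    simd_lanes: how many a*b+c operations the vector unit performs at once.
    """
    return emulated_cost * simd_lanes


# Single precision: about 4x per operation, 4 floats per SSE2 register.
single = slowdown_vs_simd(emulated_cost=4, simd_lanes=4)

# Double precision: about 10x per operation, 2 doubles per SSE2 register
# (the lane count is an assumption, not stated in the comment).
double = slowdown_vs_simd(emulated_cost=10, simd_lanes=2)

print("single precision slowdown:", single)
print("double precision slowdown:", double)
```

The single-precision product comes out at 16, close to the "about 15X" quoted, and the double-precision product matches the quoted 20X.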

Admittedly, the target market for FMA is probably smaller than a breadbox, but those who need it really need it. And as it becomes more common, it'll only become more important. For now, since only Bulldozer has it, nobody is going to care.

BaronMatrix - Thursday, May 31, 2012 - link

There are admittedly only two viable X86 licensees in America and one of them sucks...

shodanshok - Thursday, May 31, 2012 - link

Hi Johan,

First of all, let me thank you for your wonderful analysis of the Bulldozer architecture. I read it with great interest.

However, I think that you left out a very important thing to mention: L1/L2 cache read/write bandwidth. Especially for L2, while latency is an important thing, throughput can be an even more crucial one.

The key point is that Bulldozer has a write-through L1 cache, so all L1 writes are more or less immediately broadcast to the L2 cache. Some small writes can be effectively cached inside a write-back combining buffer called the Write Combining Cache (WCC), but this cache is only 4KB in size for the entire module. So, streaming writes will immediately fill the WCC and bring down L1 cache speed to L2 levels.
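A toy model makes the effect of the small buffer visible. The sketch below treats the WCC as a 4KB least-recently-used buffer of cache lines and counts how many lines are pushed out to L2. The 64-byte line size, the LRU policy, and the function name are assumptions made for illustration; the real WCC replacement and flush behavior is not described in this comment.

```python
from collections import OrderedDict

LINE_BYTES = 64        # assumed cache-line size
WCC_BYTES = 4 * 1024   # WCC capacity per module, as stated in the comment


def lines_evicted_to_l2(addresses, wcc_bytes=WCC_BYTES, line_bytes=LINE_BYTES):
    """Count lines a toy LRU write-combining buffer must push to L2."""
    capacity = wcc_bytes // line_bytes
    buffered = OrderedDict()   # line number -> None, oldest first
    evicted = 0
    for addr in addresses:
        line = addr // line_bytes
        if line in buffered:
            buffered.move_to_end(line)      # store combines into a held line
            continue
        if len(buffered) == capacity:
            buffered.popitem(last=False)    # oldest line goes out to L2
            evicted += 1
        buffered[line] = None
    return evicted


# 100,000 eight-byte stores that keep hitting the same 2KB region.
small_set = lines_evicted_to_l2((i * 8) % 2048 for i in range(100_000))

# Eight-byte stores streaming once through 1MB.
streaming = lines_evicted_to_l2(range(0, 1 << 20, 8))

print("evictions, 2KB working set:", small_set)
print("evictions, 1MB stream:", streaming)
```

In this model the 2KB working set never leaves the buffer, while the 1MB stream forces out almost every line it touches, so sustained write speed is set by L2 rather than by L1.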

This can really hamper CPU performance. Obviously, AMD went this road for some understandable reasons, however, the WCC is really too small to cache much data and the L2 is way too slow to efficiently serve L1 write requests.

This brings us to another point: the L2 cache is slow. Compared with Intel's super-fast (but much smaller) L2 cache, it has no hope; it is more or less at Intel's L3 level.

Here you can find my analysis of AMD Bulldozer architecture: http://www.ilsistemista.net/index.php/hardware-ana...

Note that, while I collected and normalized data from multiple web sites, I made very clear what the original reference was (so that you can easily verify my data).

Thanks.