Seagate Business Storage 8-Bay 32TB Rackmount NAS Review

by Ganesh T S on March 14, 2014 6:00 AM EST. Posted in: NAS, IT Computing, Seagate, Enterprise

Single Client Performance - CIFS and iSCSI on Windows

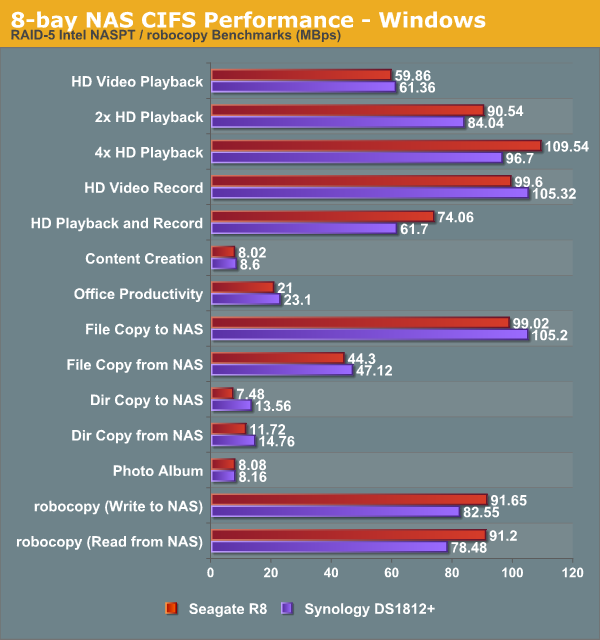

The single-client CIFS performance of the Seagate Business Storage 8-Bay Rackmount was evaluated on Windows using Intel NASPT and our standard robocopy benchmark. The tests were run from one of the virtual machines in our NAS testbed. All client-side data for the robocopy benchmark was placed on a RAM disk (created using OSFMount) so that shortcomings of the client's storage system wouldn't affect the benchmark results. Note that all the shares / iSCSI LUNs were created on a RAID-5 volume.

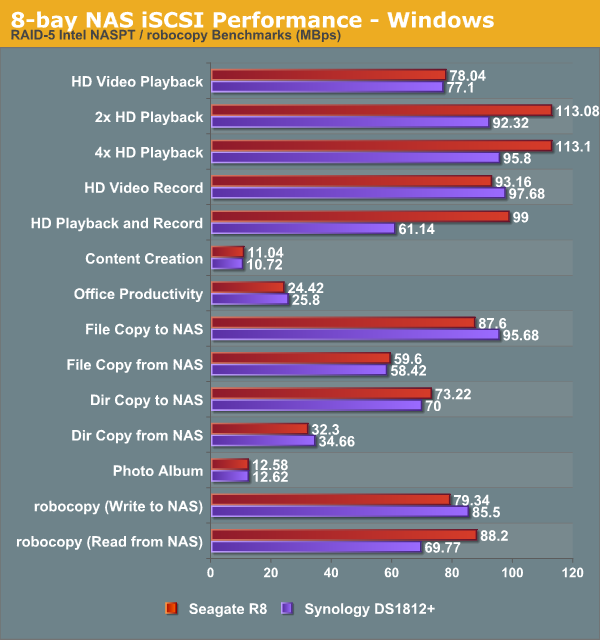

We created a 250 GB iSCSI target and mapped it on the Windows VM. The same benchmarks were run and the results are presented below.

It is interesting to note that the Atom D27xx-based Synology DS1812+ runs neck and neck with the Seagate R8, but the extra CPU grunt of the Celeron G1610T helps the Seagate unit pull ahead in a number of benchmarks.

Encryption Support

The Seagate NAS unit, unfortunately, doesn't have native encryption support. Even though the Celeron G1610T doesn't support AES-NI, software-based encryption would definitely have yielded better results than what Atom-based units achieve. In any case, Seagate believes that using SEDs (Self-Encrypting Drives) is the better option for customers, since it has no impact at all on NAS performance. Even though none of the SEDs are currently in the public compatibility list, Seagate says they do support them.

Under usual circumstances, we would not have liked this limiting of consumer options (since SEDs definitely cost a bit more than the non-encrypted versions). However, given that the HDD vendor and the NAS vendor are the same for this product, it isn't too much of a drawback. In essence, if the user / company is looking for a diskless enclosure, there are multiple options available with a better feature set. We believe the allure of these units stems from the fact that the disk and NAS vendor are one and the same, allowing for better support and less overhead for the IT department.

28 Comments


phoenix_rizzen - Friday, March 14, 2014 - link

9U for 50 2.5" drives? Something's not right with that.

You can get 24 2.5" drives into a single 2U chassis (all on the front, slotted vertical). So, if you go to 4U, you can get 48 2.5" drives into the front of the chassis, with room on the back for even more.

Supermicro's SC417 4U chassis holds 72 2.5" drives (with motherboard) or 88 (without motherboard).

http://www.supermicro.com/products/chassis/4U/?chs...

Shoot, you can get 45 full-sized 3.5" drives into a 4U chassis from SuperMicro using the SC416 chassis. 9U for 50 mini-drives is insane!
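The density comparison in this comment is easy to put side by side. The sketch below only restates the drive counts and chassis heights quoted above as drives per rack unit; the labels and the `drives_per_u` helper are illustrative names, not anything from a vendor tool.

```python
# Drive counts and chassis heights exactly as quoted in the comment above.
configs = {
    '50 x 2.5" in 9U': (50, 9),
    '24 x 2.5" in 2U': (24, 2),
    '72 x 2.5" in 4U (with motherboard)': (72, 4),
    '88 x 2.5" in 4U (without motherboard)': (88, 4),
    '45 x 3.5" in 4U': (45, 4),
}


def drives_per_u(drives: int, rack_units: int) -> float:
    """Average number of drive bays per rack unit of chassis height."""
    return drives / rack_units


for label, (drives, units) in configs.items():
    print(f"{label}: {drives_per_u(drives, units):.1f} drives per U")
```

Note that the last entry counts 3.5" bays, so it is not directly comparable to the 2.5" figures.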

jasonelmore - Saturday, March 15, 2014 - link

all HDD's have helium

ddriver - Friday, March 14, 2014 - link

LOL, it is almost as fast as a single mechanical drive. At that price - a giant joke. You need that much space with such slow access - this doesn't even qualify for indie professional workstations, much less for the enterprise. With 8 drives in raid 5 you'd think it will perform at least twice as well as it does.

FunBunny2 - Friday, March 14, 2014 - link

Well, as a short-stroked RAID 10 device, you might be able to get 4TB of SSD speed. With drives of decent reliability, not necessarily the Seagates, you get more TB/$/time than some enterprise SSD. Someone could do the arithmetic?

shodanshok - Friday, March 14, 2014 - link

Mmm, no, SSD speeds are too far away.

Even only considering rotational delay and entirely discarding seek time (eg: an extremely short-stroked disk), disk access time remains much higher than SSD. A 15k enterprise class drive needs ~4ms to complete a platter rotation, with an average rotational delay of ~2ms. Considering that you can not really cancel seek time, the resulting access latency of even a short-stroked disk surely is above 5ms.

And 15k drives cost much more than consumer drives.

A simple consumer-level MLC disk (eg: Crucial M500) has a read access latency way lower than 0.05 ms. Write access latency is surely higher, but way better than HD one.

So: SSDs completely eclipse HDDs on the performance front. Moreover, with high-capacity (~1TB), higher-grade consumer-level / entry-level enterprise-class SSDs with power failure protection (eg: Crucial M500, Intel DC S3500), you can build a powerful array at reasonable cost.
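The rotational-delay figures in this comment follow directly from the spindle speed. A minimal sketch of that arithmetic is below; the 5 ms and 0.05 ms latencies are the commenter's numbers, not measurements, and the function names are made up for illustration.

```python
def rotation_time_ms(rpm: float) -> float:
    """Time for one full platter rotation, in milliseconds."""
    return 60_000.0 / rpm


def avg_rotational_delay_ms(rpm: float) -> float:
    """On average the head waits half a rotation for the target sector."""
    return rotation_time_ms(rpm) / 2.0


# Latency figures quoted in the comment above (assumed, not measured here).
HDD_ACCESS_MS = 5.0   # lower bound claimed for a short-stroked 15k drive
SSD_READ_MS = 0.05    # upper bound claimed for a consumer MLC SSD

for rpm in (7_200, 10_000, 15_000):
    print(
        f"{rpm} rpm: {rotation_time_ms(rpm):.2f} ms per rotation, "
        f"{avg_rotational_delay_ms(rpm):.2f} ms average rotational delay"
    )

print(f"HDD/SSD access latency ratio: roughly {HDD_ACCESS_MS / SSD_READ_MS:.0f}x")
```

For a 15k drive this gives the 4 ms rotation and 2 ms average delay mentioned above, and the quoted latencies put the gap at about two orders of magnitude.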

ddriver - Sunday, March 16, 2014 - link

I think he means sequential speed. You need big storage for backup or highly sequential data like raw audio/video/whatever, you will not put random read/write data on such storage. That much capacity needs high sequential speeds. Even if you store databases on that storage, the frequently accessed sets will be cached, and overall access will be buffered.

SSD sequential performance today is pretty much limited by the controller speed to about 530 MB/sec. A 1TB WD raptor drive does over 200 MB/sec in its fastest region, so I imagine that 4 of those would be able to hit SSD speed at tremendously higher capacity and even more so volume to price ratio.
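The estimate in this comment can be checked with a few lines of arithmetic. The sketch below assumes ideal striping, where sequential throughput scales linearly with the number of drives; real arrays fall short of that, and the 200 MB/sec figure applies only to a drive's fastest region, so treat the result as an upper bound. The helper names are illustrative.

```python
import math

# Figures quoted in the comment above, in MB/sec.
SSD_SEQ_MB_S = 530
HDD_SEQ_MB_S = 200  # fastest (outer) region only


def striped_throughput(n_drives: int, per_drive_mb_s: float) -> float:
    """Ideal sequential throughput of a stripe set (assumes linear scaling)."""
    return n_drives * per_drive_mb_s


def drives_to_match(target_mb_s: float, per_drive_mb_s: float) -> int:
    """Smallest number of striped drives whose ideal throughput reaches the target."""
    return math.ceil(target_mb_s / per_drive_mb_s)


print("Drives needed to match the SSD:", drives_to_match(SSD_SEQ_MB_S, HDD_SEQ_MB_S))
print("Ideal throughput of 4 drives:", striped_throughput(4, HDD_SEQ_MB_S), "MB/sec")
```

Under these assumptions three drives already reach the SSD figure, so the commenter's four leave some headroom for the slower inner regions.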

shodanshok - Friday, March 14, 2014 - link

This thing seems too expensive to me. I mean, if the custom linux based OS has the limitations explained in the (very nice!) article, it is better to use a general purpose distro and simply manage all via LVM. Or even use a storage-centric distribution (eg: freenas, unraid) and simply buy a general-purpose PC/server with many disks...

M/2 - Friday, March 14, 2014 - link

$5100 ??? I could buy a Mac mini or a Mac Pro and a Promise2 RAID for less than that! ....and have Gigabit speeds

azazel1024 - Friday, March 14, 2014 - link

I have a hard time wrapping my head around the price.

Other than the ECC RAM, that is VERY close to my server setup (same CPU for example). Except mine also has a couple of USB3 ports, twice the USB 2 ports, a third GbE NIC (the onboard) and double the RAM.

Well...it can't take 8 drives without an add on card, as it only has 6 ports...but that isn't too expensive.

Total cost of building...less than $300.

I can't fathom basically $300 of equipment being upsold for 10x the price! Even an upsale on the drives in it doesn't seem justified to get it in to that range of price.

Heck, you could get a RAID card and do 7 drives in RAID5/6 for redundancy and use commercial 4TB drives with an SSD as a cache drive and a REALLY nice RAID card in to my system, and you'd probably come out at less than half the price and probably with better performance.

I get building your own is almost always cheaper, but a $3000 discount is just a wee bit cheaper on a $5000 hardware price tag, official support or no official support.

azazel1024 - Friday, March 14, 2014 - link

I might also add, looking at the power consumption figures, with my system being near identical, other than lack of ECC memory, but more RAM, more networking connectivity and WITH disks in it, mine consumes 14w less at idle (21w idle). The RAID rebuild figures on 1-2 disks and 2-3 is also a fair amount lower on my server, but more than 10w difference (mine has 2x2TB RAID0 right now and a 60GB SSD as boot drive).

Also WAY more networking performance. I don't know if the OS doesn't support SMB3.0, or if Anandtech isn't running any network testing with SMB3.0 utilized, but with Windows 8 on my server, I am pushing 2x1GbE to the max, or at least I was when my desktop RAID array was less full (need new array, 80% utilized on my desktop right now as it is only 2x1TB RAID0).

Even looking at some of the below GbE saturation benchmarks, I am pushing a fair amount more data over my links than the Seagate NAS here is.

With better disks in my server and desktop I could easily patch in the 3rd GbE NIC in the machine to push up over 240MB/sec over the links to the limit of what the drives can do. I realize a lot of SOHO/SMB implementations are about concurrent users and less about maximum throughput, but the beauty of SMB3.0 and SMB Multichannel is...it does both. No limits on per link speed, you can saturate all of the links for a single user or push multiple users through too.

I've done RAM disk testing with 3 links enabled and SMB Multichannel enabled and saw duplex 332MB/sec Rx AND Tx between my server and desktop. I just don't have the current array to support that, so I leave only the Intel NICs enabled and leave the on-board NICs on the machines disabled.
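The multi-link numbers in this comment can be compared against the raw line rate of gigabit Ethernet. A rough sketch follows, assuming decimal megabytes (1 MB = 10^6 bytes) and ignoring Ethernet, TCP/IP and SMB protocol overhead, so the ceilings are optimistic; the function names are illustrative.

```python
def raw_link_mb_s(gbit_per_s: float = 1.0) -> float:
    """Raw line rate of one link in MB/sec (decimal units, no protocol overhead)."""
    return gbit_per_s * 1000 / 8


def aggregate_mb_s(n_links: int, gbit_per_s: float = 1.0) -> float:
    """Combined raw line rate of several links used in parallel."""
    return n_links * raw_link_mb_s(gbit_per_s)


# Throughput the commenter reports for the 3-link RAM disk test, per direction.
OBSERVED_MB_S = 332

for links in (1, 2, 3):
    print(f"{links} x GbE: {aggregate_mb_s(links):.0f} MB/sec raw ceiling")

efficiency = OBSERVED_MB_S / aggregate_mb_s(3)
print(f"Reported 3-link result is {efficiency:.1%} of the raw ceiling")
```

By this measure the reported 332MB/sec sits close to what three gigabit links can carry once protocol overhead is taken into account.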