ASRock X99 WS-E/10G Motherboard Review: Dual 10GBase-T for Prosumers

by Ian Cutress on December 15, 2014 10:00 AM EST

Posted in: Motherboards, IT Computing, Intel, ASRock, Enterprise, X99, 10GBase-T

Gaming Performance

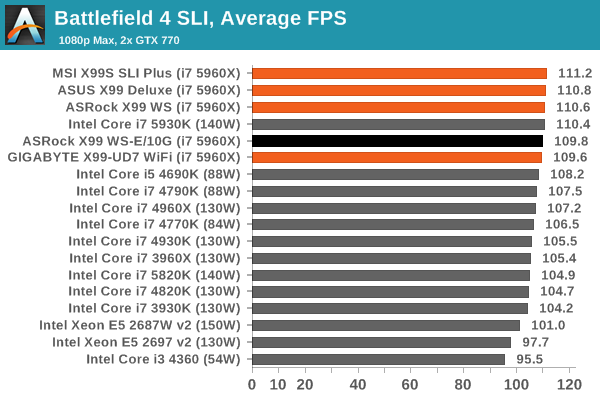

An interesting point to consider is the addition of the PLX8747 chips to allow for x16/x16/x16/x16 PCIe layouts. In previous reviews we have noted that these chips barely reduce the frame rate, if at all. However, because the X99 WS-E/10G uses two of them (and we split our SLI testing cards between them), there might be more room for additional delays.

Ultimately, however, our results were within the same range as the other X99 motherboards, suggesting that at this scale (1080p, max settings) frame rates are not affected by the PCIe arrangement. It would be interesting to see a three or four-way SLI setup powering several 4K monitors.

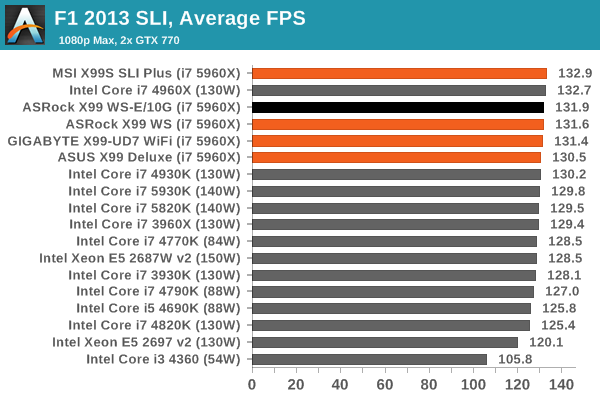

F1 2013

First up is F1 2013 by Codemasters. I am a big Formula 1 fan in my spare time, and nothing makes me happier than carving up the field in a Caterham, waving to the Red Bulls as I drive by (because I play on easy and take shortcuts). F1 2013 uses the EGO Engine, and like other Codemasters games it is very playable on old hardware. In order to beef up the benchmark a bit, we devised the following scenario for the benchmark mode: one lap of Spa-Francorchamps in the heavy wet, following Jenson Button in the McLaren, who starts on the grid in 22nd place, with the field made up of 11 Williams cars, 5 Marussia and 5 Caterham in that order. This puts emphasis on the CPU to handle the AI in the wet, and allows for a good amount of overtaking during the automated benchmark. We test at 1920x1080 on Ultra graphical settings.

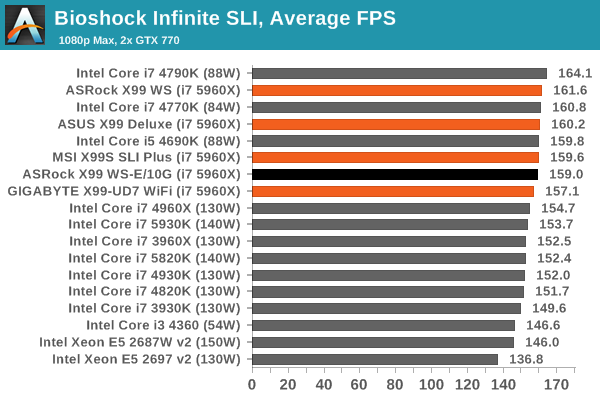

Bioshock Infinite

Bioshock Infinite was Zero Punctuation’s Game of the Year for 2013, uses the Unreal Engine 3, and is designed to scale with both cores and graphical prowess. We test the benchmark using the Adrenaline benchmark tool and the Xtreme (1920x1080, Maximum) performance setting, noting down the average frame rates and the minimum frame rates.

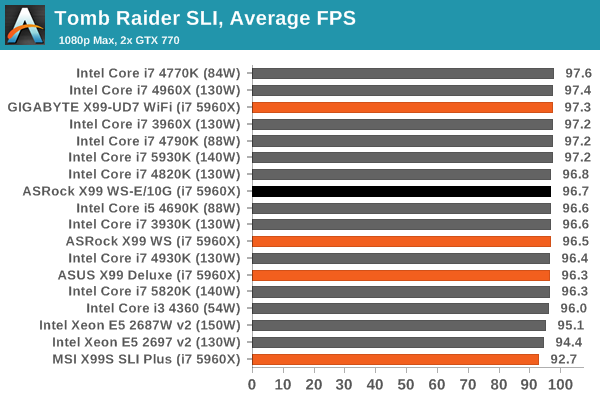

Tomb Raider

The next benchmark in our test is Tomb Raider. Tomb Raider is an AMD-optimized game, lauded for its use of TressFX to create dynamic hair and increase immersion. Tomb Raider uses a modified version of the Crystal Engine, and enjoys raw horsepower. We test the benchmark using the Adrenaline benchmark tool and the Xtreme (1920x1080, Maximum) performance setting, noting down the average frame rates and the minimum frame rates.

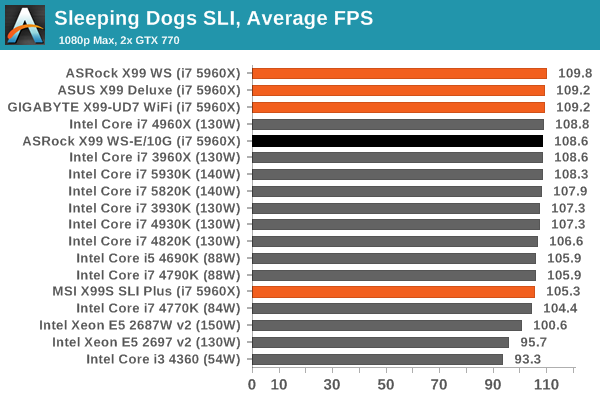

Sleeping Dogs

Sleeping Dogs is a benchmarking wet dream: a highly complex benchmark that can bring even the toughest setups down into single figures at high resolutions. Having an extreme SSAO setting can do that, but at the right settings Sleeping Dogs is highly playable and enjoyable. We run the basic benchmark program laid out in the Adrenaline benchmark tool, using the Xtreme (1920x1080, Maximum) performance setting, noting down the average frame rates and the minimum frame rates.

Battlefield 4

The EA/DICE series that has taken countless hours of my life away is back for another iteration, using the Frostbite 3 engine. AMD is also piling its resources into BF4 with the new Mantle API for developers, designed to cut the time required for the CPU to dispatch commands to the graphical sub-system. For our test we use the in-game benchmarking tools and record the frame time for the first ~70 seconds of the Tashgar single player mission, which is an on-rails sequence that generates and renders objects and textures. We test at 1920x1080 at Ultra settings.
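As a rough illustration of how a recorded frame-time log can be turned into the average and minimum frame rates quoted in these tests, here is a minimal Python sketch. The function name `summarize_frame_times` and the choice to define minimum FPS from the single slowest frame are assumptions made for this example; the benchmark tools used in the review may reduce their data differently.

```python
from typing import Dict, Sequence


def summarize_frame_times(frame_times_ms: Sequence[float]) -> Dict[str, float]:
    """Reduce per-frame render times (milliseconds) to average and minimum FPS.

    Assumption: minimum FPS is taken from the single slowest frame.
    Other tools may use a percentile or a one-second window instead.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    if any(t <= 0 for t in frame_times_ms):
        raise ValueError("frame times must be positive")

    total_seconds = sum(frame_times_ms) / 1000.0
    # Average FPS is frames rendered divided by elapsed time,
    # not the mean of the per-frame FPS values.
    average_fps = len(frame_times_ms) / total_seconds
    # The slowest frame sets the instantaneous minimum frame rate.
    minimum_fps = 1000.0 / max(frame_times_ms)
    return {"average_fps": average_fps, "minimum_fps": minimum_fps}


if __name__ == "__main__":
    # Hypothetical sample: two 10 ms frames and one 20 ms frame.
    print(summarize_frame_times([10.0, 20.0, 10.0]))
```

Dividing frame count by elapsed time weights slow frames by how long they were actually on screen, which is why it is preferred here over averaging the instantaneous frame rates.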

45 Comments


gsvelto - Tuesday, December 16, 2014 - link

Where I worked we had extensive 10G SFP+ deployments with ping latency measured in single-digit µs. The latency numbers you gave are for pure-throughput oriented, low CPU overhead transfers and are obviously unacceptable if your applications are latency sensitive. Obtaining those numbers usually requires tweaking your power-scaling/idle governors as well as kernel offloads. The benefits you get are very significant on a number of loads (e.g. lots of small files over NFS), and 10GBase-T can be a lot slower on those workloads. But as I mentioned in my previous post, 10GBase-T is not only slower, it's also more expensive, more power hungry and has a minimum physical transfer size of 400 bytes. So if your load is composed of small packets and you don't have the luxury of aggregating them (because latency matters), then your maximum achievable bandwidth is greatly diminished.

shodanshok - Wednesday, December 17, 2014 - link

Sure, packet size plays a far bigger role for 10GBase-T than for optical (or even copper) SFP+ links.

Anyway, the pings tried before were for relatively small IP packets (physical size = 84 bytes), which are way smaller than the typical packet size.

For message-passing workloads SFP+ is surely a better fit, but for MPI it is generally better to use more latency-oriented protocol stacks (if I'm not mistaken, InfiniBand uses a lightweight protocol stack for this very reason).

Regards.
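To put the small-packet argument in this exchange into numbers, the sketch below takes the 400-byte minimum physical transfer size and the 84-byte packet size quoted in the comments at face value. Neither figure has been checked against the 10GBase-T specification here, and modelling the minimum as simple padding of each unaggregated packet is an assumption made purely for illustration; `effective_gbps` is a hypothetical helper name.

```python
def effective_gbps(packet_bytes: int,
                   min_transfer_bytes: int = 400,   # figure quoted in the comment, unverified
                   line_rate_gbps: float = 10.0) -> float:
    """Estimate useful throughput when every packet occupies at least
    min_transfer_bytes on the link (a simplified padding model)."""
    if packet_bytes <= 0:
        raise ValueError("packet size must be positive")
    # Each packet consumes at least the minimum transfer size on the wire.
    occupied_bytes = max(packet_bytes, min_transfer_bytes)
    return line_rate_gbps * packet_bytes / occupied_bytes


if __name__ == "__main__":
    for size in (84, 400, 1500):
        print(f"{size:>5} B packets -> {effective_gbps(size):.2f} Gb/s useful")
```

Under these assumptions a stream of 84-byte packets that cannot be aggregated would use only about a fifth of the link, while packets at or above the minimum size are unaffected.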

T2k - Monday, December 15, 2014 - link

Nonsense. CAT6a or even CAT6 would work just fine.

Daniel Egger - Monday, December 15, 2014 - link

You're missing the point. Sure, Cat.6a would be sufficient (it's hard to find Cat.7 sockets anyway, but the cabling used nowadays is mostly Cat.7 specced, not Cat.6a), but the problem is to end up with properly balanced wiring that is capable of establishing such a link. Also, copper cabling deteriorates over time, so the measurement report might not be worth much by the time you try to establish a 10GBase-T connection...

Cat.6 is only usable with special qualification (TIA TSB-155-A) over short distances.

DCide - Tuesday, December 16, 2014 - link

I don't think T2k's missing the point at all. Those cables will work fine - especially for the target market for this board.

You also had a number of other objections a few weeks ago, when this board was announced. Thankfully most of those have already been answered in the excellent posts here. It's indeed quite possible (and practical) to use the full 10GBase-T bandwidth right now, whether making a single transfer between two machines or serving multiple clients. At the time you said this was *very* difficult, implying no one will be able to take advantage of it. Fortunately, ASRock engineers understood the (very attainable) potential better than this. Hopefully now the market will embrace it, and we'll see more boards like this. Then we'll once again see network speeds that can keep up with everyday storage media (at least for a while).

shodanshok - Tuesday, December 16, 2014 - link

You are right, but the familiar RJ45 & cables can be a strong motivation to go with 10GBase-T in some cases. For a quick example: one of our customers bought two Dell R720xd to use as virtualization boxes. The first R720xd is the active one, while the second R720xd is used as a hot standby, constantly synchronized using DRBD. The two boxes are directly connected with a simple Cat 6e cable.

As the final customer was in charge of both the physical installation and the normal hardware maintenance, familiar networking equipment such as RJ45 ports and cables was strongly favored by him.

Moreover, it is expected that within 2 die shrinks 10GBase-T controllers will become cheap/low power enough that they can be integrated pervasively, similar to how 1GBase-T replaced the old 100 Mb standard.

Regards.

DigitalFreak - Monday, December 15, 2014 - link

Don't know why they went with 8 PCI-E lanes for the 10Gig controller. 4 would have been plenty.

1 PCI-E 3.0 lane is 1GB per second (x4 = 4GB). 10Gig max is 1.25 GB per second, dual port = 2.5 GB per second. Even with overhead you'd still never saturate an x4 link. Could have used the extra x4 for something else.
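The lane arithmetic in this comment is easy to check with a short Python sketch. The per-lane figures below come from the published PCIe signalling rates and line encodings (5 GT/s with 8b/10b for PCIe 2.0, 8 GT/s with 128b/130b for PCIe 3.0). Packet and protocol overhead is ignored, only one direction is counted, and the function names are illustrative rather than taken from any tool. The PCIe 2.0 rows are included because a reply further down the thread points out that the controller is a PCIe 2.0 part.

```python
# Approximate per-lane bandwidth in GB/s (decimal), one direction,
# after line encoding but before packet/protocol overhead.
PCIE_LANE_GBYTES = {
    "2.0": 5.0 * (8 / 10) / 8,     # 5 GT/s, 8b/10b    -> 0.5 GB/s
    "3.0": 8.0 * (128 / 130) / 8,  # 8 GT/s, 128b/130b -> ~0.985 GB/s
}


def pcie_link_gbytes(generation: str, lanes: int) -> float:
    """Raw one-direction bandwidth of a PCIe link in GB/s."""
    return PCIE_LANE_GBYTES[generation] * lanes


def ethernet_gbytes(ports: int, gbit_per_port: float = 10.0) -> float:
    """Aggregate one-direction line rate of the Ethernet ports in GB/s."""
    return ports * gbit_per_port / 8


def headroom(generation: str, lanes: int, ports: int = 2) -> float:
    """Positive when the PCIe link can carry all ports at line rate."""
    return pcie_link_gbytes(generation, lanes) - ethernet_gbytes(ports)


if __name__ == "__main__":
    for gen, lanes in (("3.0", 4), ("2.0", 4), ("2.0", 8)):
        print(f"PCIe {gen} x{lanes}: {pcie_link_gbytes(gen, lanes):.2f} GB/s link, "
              f"headroom over dual 10GbE {headroom(gen, lanes):+.2f} GB/s")
```

With these figures a PCIe 3.0 x4 link would cover two ports at line rate, but a PCIe 2.0 x4 link (2 GB/s) falls short of the 2.5 GB/s needed, which is consistent with using eight lanes for a PCIe 2.0 controller.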

The Melon - Monday, December 15, 2014 - link

I personally think it would be a perfect board if they replaced the Intel X540 controller with a Mellanox ConnectX-3 dual QSFP solution so we could choose between FDR IB and 40/10/1Gb Ethernet per port.

Either that or simply a version with the same slot layout and drop the Intel X540 chip.

Bottom line though is no matter how they lay it out we will find something to complain about.

Ian Cutress - Tuesday, November 1, 2016 - link

The controller is PCIe 2.0, not PCIe 3.0. You need to use a PCIe 3.0 controller to get PCIe 3.0 speeds.

eanazag - Monday, December 15, 2014 - link

I am assuming we are talking about the free ESXi Hypervisor in the test setup.

SR-IOV (IOMMU) is not an enabled feature on ESXi with the free license. What this means is that networking is going to tax the CPU more heavily. Citrix Xenserver does support SR-IOV on the free product, which is all free now - you just pay for support. This is something to consider when interpreting the results of the testing methodology used here.

Another good way to test 10GbE is using iSCSI where the server side is a NAS and the single client is where the disk is attached. The iSCSI LUN (hard drive) needs to have something going on with an SSD. It can just be 3 spindle HDDs in RAID 5. You can use disk test software to drive the benchmarking. If you opt to use Xenserver with Windows as the iSCSI client, have the VM connect directly to the NAS instead of attaching the iSCSI LUN through Xenserver, because you will hit a performance cap from VM to host with the typical add-disk route within Xen. This is in the older 6.2 version. Creedence is not fully out of beta yet. I have done no testing on Creedence, and the changes it contains are significant for performance.

About two years ago I was working on coming up with the best iSCSI setup for VMs using HDDs in RAID and SSDs as caches. I was using Intel X540-T2's without a switch. I was working with NexentaStor and Sun/Oracle Solaris as iSCSI target servers run on physical hardware, Xen, and VMware. I encountered some interesting behavior in all cases. VMware's sub-storage yielded better hard drive performance. I kept running into an artificial performance limit because of the Windows client and how Xen handles the disks it provides. The recommendation was to add the iSCSI disk directly to the VM, as the limit wouldn't show up there. VMware still imposed a performance ding (a hit of more than 10%) on my setup. Physical hardware had the best performance for the NAS side.