AMD Discusses 2016 Radeon Visual Technologies Roadmap

by Ryan Smith on December 8, 2015 9:00 AM EST. Posted in:

- GPUs

- Displays

- AMD

- Radeon

- DisplayPort

- HDMI

- Radeon Technologies Group

High Dynamic Range: Setting the Stage For The Next Generation

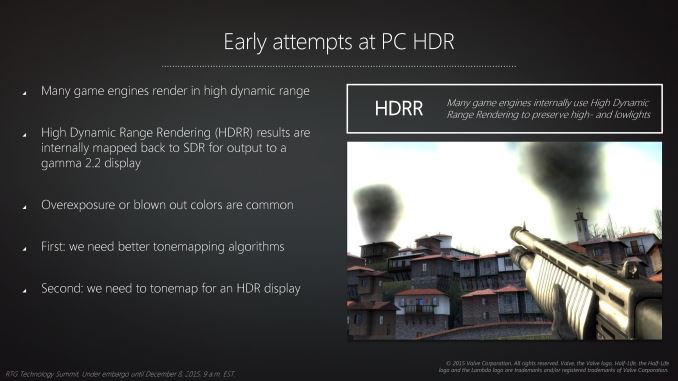

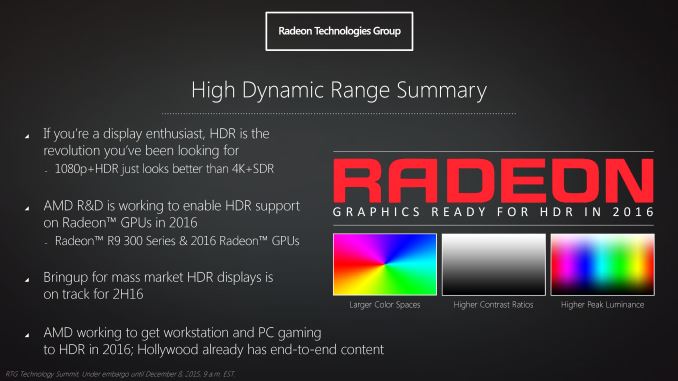

The final element of RTG’s visual technologies presentation was focused on high dynamic range (HDR). In the PC gaming space HDR rendering has been present in some form or another for almost 10 years. However it’s only recently that the larger consumer electronics industry has begun to focus on HDR, in large part due to recent technical and manufacturing scale achievements.

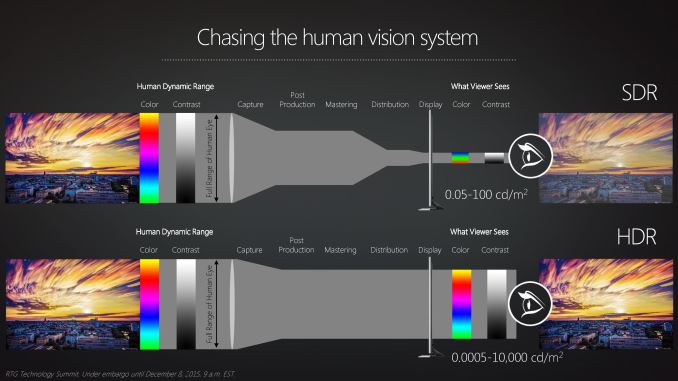

Though HDR is most traditionally defined with respect to the contrast ratio and the range of brightness within an image – and how the human eye can see a much wider range in brightness than current displays can reproduce – for RTG their focus on HDR is spread out over several technologies. This is due to the fact that to bring HDR to the PC one not only needs a display that can cover a wider range of brightness than today’s displays that top out at 300 nits or so, but there are also changes required in how color information needs to be stored and transmitted to a display, and really the overall colorspace used. As a result RTG’s HDR effort is an umbrella effort covering multiple display-related technologies that need to come together for HDR to work on the PC.

The first element of this – and the element least in RTG’s control – is the displays themselves. Front-to-back HDR requires having displays capable not only of a high contrast ratio, but also some sort of local lighting control mechanism to allow one part of the display to be exceptionally bright while another part is exceptionally dark. The two technologies in use to accomplish this are LCDs with local dimming (as opposed to a single backlight) and OLEDs, which are self-illuminating, both of which until recently carried a significant price premium. The price on these styles of displays is finally coming down, and there is hope that displays capable of hitting the necessary brightness, contrast, and dimming levels for solid HDR reproduction will become available within the next year.

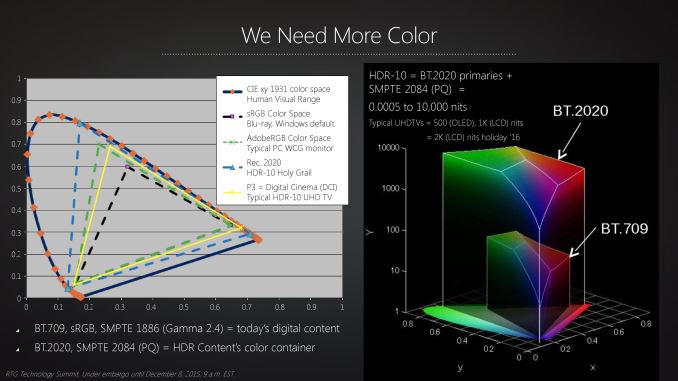

As far as RTG’s own technology is concerned, even after HDR displays are on the market, RTG needs to make changes to support these displays. The traditional sRGB color space is not suitable for true HDR – it just isn’t large enough to correctly represent colors at the extreme ends of the brightness curve – and as a result RTG is laying the groundwork for improved support for larger color spaces. The company already supports AdobeRGB for professional graphics work, however the long-term goal is to support the BT.2020 color space, which is the space the consumer electronics industry has settled upon for HDR content. BT.2020 will be what 4K Blu-rays will be mastered in, and in time it is likely that other content will follow.
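As a rough illustration of how much larger BT.2020 is than sRGB, the sketch below compares the area of the triangle each set of primaries spans on the CIE 1931 xy chromaticity diagram. The primaries are the published sRGB/BT.709 and BT.2020 values; the area comparison itself is an assumption made for illustration, since xy area is not perceptually uniform and says nothing about brightness range.

```python
# CIE 1931 xy chromaticities of the red, green, and blue primaries.
SRGB_PRIMARIES = [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)]
BT2020_PRIMARIES = [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)]


def triangle_area(points):
    """Area of the triangle spanned by three (x, y) points (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2.0


srgb_area = triangle_area(SRGB_PRIMARIES)
bt2020_area = triangle_area(BT2020_PRIMARIES)
ratio = bt2020_area / srgb_area

print(f"sRGB gamut area in xy:    {srgb_area:.4f}")
print(f"BT.2020 gamut area in xy: {bt2020_area:.4f}")
print(f"BT.2020 / sRGB:           {ratio:.2f}x")
```

By this crude measure the BT.2020 triangle covers a little under twice the chromaticity area of sRGB.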

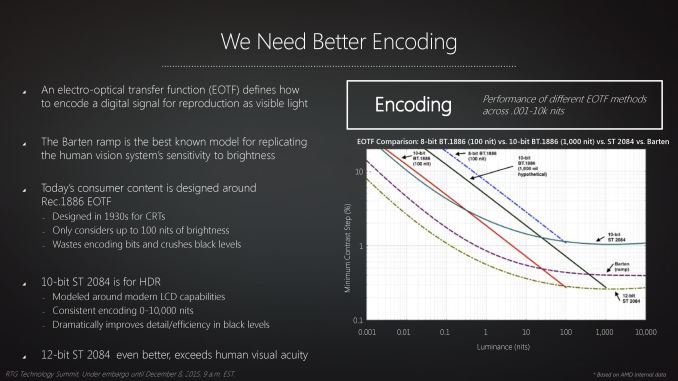

Going hand-in-hand with the BT.2020 color space is how it’s represented. While it’s technically possible to display the color space using today’s 8 bit per color (24bpp) encoding schemes, the larger color space would expose and exacerbate the banding that results from only having 256 shades of any given primary color to work with. As a result BT.2020 also calls for increasing the bit depth of images from 8bpc to a minimum of 10bpc (30bpp), which serves to increase the number of shades of each primary color to 1024. Only by both increasing the color space and at the same time increasing the accuracy within that space can the display rendering chain accurately describe an HDR image, ultimately feeding that to an HDR-capable display.
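The shade counts above follow directly from the bit depth. A minimal sketch of the arithmetic (the function names are illustrative, not part of any API):

```python
def shades_per_channel(bits_per_channel: int) -> int:
    """Number of distinct code values a single color channel can hold."""
    return 2 ** bits_per_channel


def total_colors(bits_per_channel: int, channels: int = 3) -> int:
    """Number of distinct colors across all channels (RGB by default)."""
    return shades_per_channel(bits_per_channel) ** channels


for bpc in (8, 10):
    print(
        f"{bpc}bpc ({bpc * 3}bpp): "
        f"{shades_per_channel(bpc)} shades per primary, "
        f"{total_colors(bpc):,} colors in total"
    )
```

Each extra bit doubles the number of steps per primary, so moving from 8bpc to 10bpc makes each step one quarter the size across the same range.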

The good news here for RTG (and the PC industry as a whole) is that 10 bit per color rendering is already done on the PC, albeit traditionally limited to professional grade applications and video cards. BT.2020 and the overall goals of the consumer electronics industry mean that 10 bit per color and BT.2020's specific curve will need to become a consumer feature, and this is where RTG’s HDR presentation lays out their capabilities and goals.

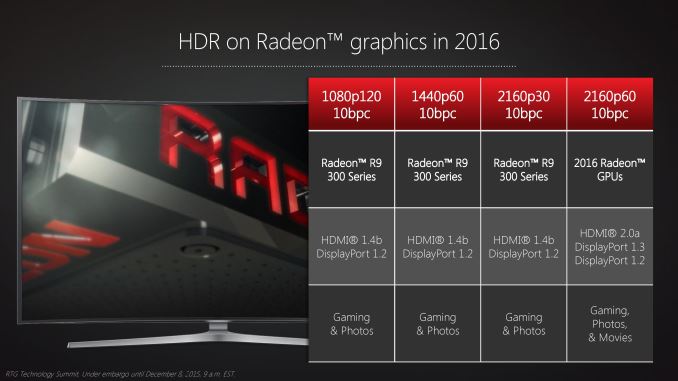

The Radeon 300 series is already capable of 10bpc rendering, so even older cards if presented with a suitable monitor will be capable of driving HDR content over HDMI 1.4b and DisplayPort 1.2. The higher bit depth does require more bandwidth, and as a result it’s not possible to combine HDR, 4K, and 60Hz with any 300 series cards due to the limitations of DisplayPort 1.2 (though lower resolutions with higher refresh rates are possible). However this means that the 2016 Radeon GPUs with DisplayPort 1.3 would be able to support HDR at 4K@60Hz.
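To put a number on the extra bandwidth, the sketch below computes the uncompressed data rate of the visible pixels alone. It is a simplification: it assumes RGB with no chroma subsampling and ignores blanking intervals and link encoding overhead, so it shows the relative cost of 10bpc rather than what any particular DisplayPort or HDMI link can actually carry.

```python
def pixel_data_rate_gbps(width, height, refresh_hz, bits_per_channel, channels=3):
    """Uncompressed active-pixel data rate in Gbit/s.

    Assumption: counts visible pixels only (no blanking, no link overhead).
    """
    bits_per_second = width * height * refresh_hz * bits_per_channel * channels
    return bits_per_second / 1e9


sdr_rate = pixel_data_rate_gbps(3840, 2160, 60, 8)
hdr_rate = pixel_data_rate_gbps(3840, 2160, 60, 10)

print(f"4K@60Hz,  8bpc: {sdr_rate:.2f} Gbit/s")
print(f"4K@60Hz, 10bpc: {hdr_rate:.2f} Gbit/s")
print(f"Increase: {(hdr_rate / sdr_rate - 1) * 100:.0f}%")
```

Going from 8bpc to 10bpc raises the pixel payload by a quarter at any given resolution and refresh rate.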

And indeed it’s likely with the 2016 GPUs that HDR will really take off. Although RTG can support all of the basic technical aspects of HDR on the Radeon 300 series, there’s one thing none of these cards will ever be able to do, and that’s to directly support the HDCP 2.2 standard, which is being required for all 4K/HDR content. As a result only the 2016 GPUs can play back HDR movies, while all earlier GPUs would be limited to gaming and photos.

Meanwhile RTG is also working on the software side of matters in conjunction with Microsoft. At this time it’s possible for RTG to render to HDR, but only in an exclusive fullscreen context, bypassing the OS’s color management. Windows itself isn’t capable of HDR rendering, and this is something that Microsoft and its partners are coming together to solve. And it will ultimately be a solved issue, but it may take some time. Not unlike high-DPI rendering, edge cases such as properly handling mixed use of HDR/SDR are an important consideration that must be accounted for. And for that matter, the OS needs a means of reliably telling (or being told) when it has HDR content.

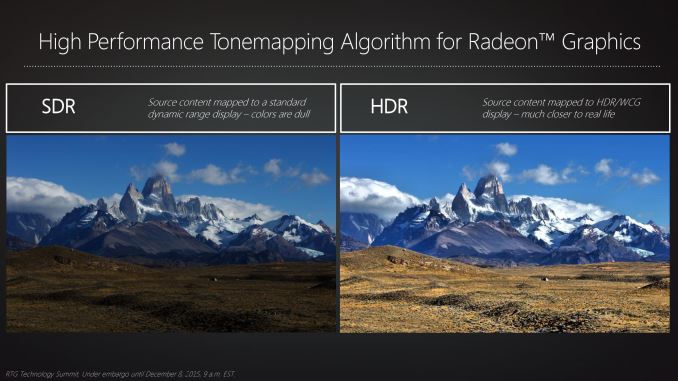

Finally at the other end of the spectrum will be software developers. While the movie/TV industries have already laid the groundwork for HDR production, software and game developers will be in a period of catching up as most current engines implicitly assume that they’ll be rendering for a SDR display. This means at a minimum reducing/removing the step in the rendering process where a scene is tonemapped for an SDR display, but there will also be some cases where rendering algorithms need to be changed entirely to make best use of the larger color space and greater dynamic range. RTG for their part seems to be eager to work with developers through their dev relations program to give them the tools they need (such as HDR tonemapping) to do just that.
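For readers unfamiliar with the tonemapping step, here is a minimal sketch of what an engine does when targeting an SDR display. It uses the simple Reinhard operator, L / (1 + L), purely as an illustration; the article does not say which operator any given engine or RTG's tools use, and the function names are hypothetical. Gamma encoding is omitted for brevity.

```python
def reinhard_tonemap(luminance: float) -> float:
    """Compress scene luminance in [0, inf) into the display range [0, 1)."""
    if luminance < 0:
        raise ValueError("luminance must be non-negative")
    return luminance / (1.0 + luminance)


def encode_8bit(value: float) -> int:
    """Clamp a [0, 1] display value and quantize it to an 8-bit code."""
    clamped = min(max(value, 0.0), 1.0)
    return round(clamped * 255)


# Scene-referred luminance values, relative to an arbitrary reference level.
scene = [0.05, 0.5, 1.0, 4.0, 16.0]
for lum in scene:
    mapped = reinhard_tonemap(lum)
    print(f"scene {lum:>5.2f} -> display {mapped:.3f} -> 8-bit code {encode_8bit(mapped)}")
```

Bright values are squeezed into the top of the 8-bit range, which is the compression an HDR output path would be able to relax or skip.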

Wrapping things up, RTG expects that we’ll start to see HDR capable displays in the mass market in 2016. At this point in time there is some doubt over whether this will include PC displays right away, in which case there may be a transition period of “EDR” displays that offer 10bpc and better contrast ratios than traditional LCDs, but can’t hit the 1000+ nit brightness that HDR really asks for. Though regardless of the display situation, AMD expects to be rolling out their formal support for HDR in 2016.

99 Comments

Michael Bay - Thursday, December 10, 2015 - link

He's drunk or crazy. Typical state for AMD user.

RussianSensation - Wednesday, December 23, 2015 - link

It's actually correct. GCN-like implies Pascal will be more oriented towards GPGPU/compute functions -- i.e., graphics cards are moving towards general purpose processing devices that are good at performing various parallel tasks well. GCN is just a marketing name but the main thing about it is focus on compute + graphics functionality. NV is re-focusing its efforts heavily on compute with Pascal. For example, they are aiming to increase neural network performance by 10X.

extide - Tuesday, December 8, 2015 - link

While nVidia picks a new name for each generation it's not like they are tossing the old design in the trash and building an entirely new GPU ... I would imagine we will see "GCN 2.0" next year, and I would be surprised if better power efficiency was not one of the main features.

Refuge - Tuesday, December 8, 2015 - link

That has been their trend for the last two Architecture updates they've made. Granted small adjustments, but all in the name of power and efficiency.

Jon Irenicus - Tuesday, December 8, 2015 - link

Apparently in maxwell they got that power efficiency by stripping out a lot of the hardware schedulers amd still has in gcn, so the efficiency boost and power decrease was not "free." It will mean that maxwell cards are less capable of context switching for VR, and can't handle mixed graphics/compute workloads as well as gcn cards. That was fine with dx11 and it worked well for them, but I don't think those cards will age well at all. But that may have been part of the point.

haukionkannel - Tuesday, December 8, 2015 - link

They only need to upgrade some part of GCN and they are just fine! The Nvidia did very good job in compression architecture of their GPU and that lead much better energy usage because they can use smaller (and cheaper) memory pathway. (There are other factors too, but that one is quite important)

AMD have higher bandwidth version of their 380, but the card does not benefit from it, so they are not releasing it, because 380 also have relative good compression build in. Make it better, increase ROPs and GCN is competitive again.

WaltC - Tuesday, December 8, 2015 - link

Odd, considering that nVidia is very much in catch-up mode presently concerning HBM deployment and even D3d12 hardware compliance...;) But, I don't do mobile at all, so I can't see it from that *cough* perspective...

Michael Bay - Thursday, December 10, 2015 - link

HBM does not offer any real advantage to the enduser presently, so there is literally no catch-up on nV part. Same with DX12.

Macpoedel - Tuesday, December 8, 2015 - link

Nvidia changes the name of their architecture for every little change they make, that doesn't mean AMD has to do so as well. GCN 1.0 and GCN 1.2 are almost as much apart as Maxwell 2 and Kepler are. GPU architectures haven't changed all that much since both companies started using the 28nm node.

Frenetic Pony - Tuesday, December 8, 2015 - link

Supposedly next year will bring GCN 2.0. Also it's already confirmed that there's basically no architectural improvements from Nvidia next year. Pascal is almost identical to Maxwell in most ways except for a handful of compute features.