AMD Discusses 2016 Radeon Visual Technologies Roadmap

by Ryan Smith on December 8, 2015 9:00 AM EST - Posted in

- GPUs

- Displays

- AMD

- Radeon

- DisplayPort

- HDMI

- Radeon Technologies Group

High Dynamic Range: Setting the Stage For The Next Generation

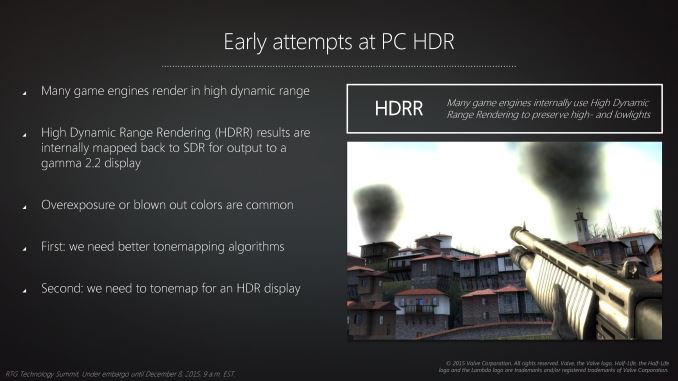

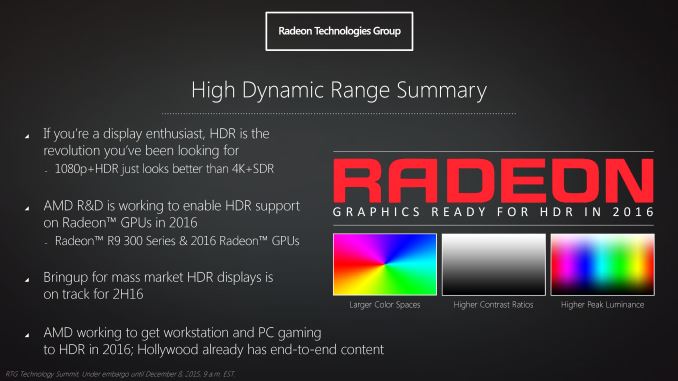

The final element of RTG’s visual technologies presentation was focused on high dynamic range (HDR). In the PC gaming space, HDR rendering has been present in some form or another for almost 10 years. However, it’s only recently that the wider consumer electronics industry has begun to focus on HDR, in large part due to recent technical advances and gains in manufacturing scale.

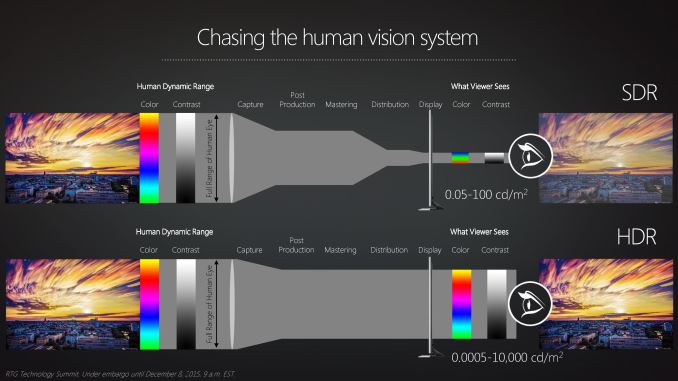

Though HDR is most traditionally defined in terms of contrast ratio and the range of brightness within an image (the human eye can see a much wider range of brightness than current displays can reproduce), RTG’s HDR focus is spread out over several technologies. Bringing HDR to the PC requires not only a display that can cover a wider range of brightness than today’s displays, which top out at 300 nits or so, but also changes to how color information is stored and transmitted to the display, and to the overall colorspace used. As a result, RTG’s HDR effort is an umbrella covering multiple display-related technologies that need to come together for HDR to work on the PC.

The first element of this, and the element least in RTG’s control, is the displays themselves. Front-to-back HDR requires displays capable not only of a high contrast ratio, but also of some sort of local lighting control, so that one part of the display can be exceptionally bright while another part is exceptionally dark. The two technologies used to accomplish this are LCDs with local dimming (as opposed to a single backlight) and OLEDs, which are self-illuminating; until recently, both carried a significant price premium. Prices on these styles of displays are finally coming down, and there is hope that displays capable of hitting the brightness, contrast, and dimming levels needed for solid HDR reproduction will become available within the next year.
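
Why local lighting control matters can be shown with a deliberately simplified model. This sketch assumes each backlight zone is driven to the brightest pixel it contains, and that the LCD layer can only dim a pixel down to 1/1000 of the light behind it (a typical native LCD contrast, used here as an assumption). The function `local_dimming` and its parameters are hypothetical and do not describe any vendor's actual dimming algorithm; real panels also have to deal with blooming between zones.

```python
def local_dimming(image, zone, native_contrast=1000.0):
    """Return the luminance (nits) a viewer sees for each pixel.

    Simplified model (assumption): the backlight is split into square
    zones of `zone` x `zone` pixels, each zone's LEDs are set to the
    brightest target pixel in that zone, and the LCD cannot block more
    than 1/native_contrast of the light behind it.
    """
    rows, cols = len(image), len(image[0])
    shown = [[0.0] * cols for _ in range(rows)]
    for zr in range(0, rows, zone):
        for zc in range(0, cols, zone):
            r_end, c_end = min(zr + zone, rows), min(zc + zone, cols)
            # Backlight for this zone: the brightest pixel it must reproduce.
            backlight = max(image[r][c]
                            for r in range(zr, r_end)
                            for c in range(zc, c_end))
            # Darkest level the LCD layer can reach in front of that backlight.
            black_level = backlight / native_contrast
            for r in range(zr, r_end):
                for c in range(zc, c_end):
                    shown[r][c] = max(image[r][c], black_level)
    return shown


scene = [[1000.0, 0.0, 0.0, 0.0]]    # one bright highlight, the rest black
print(local_dimming(scene, zone=4))  # single global backlight
print(local_dimming(scene, zone=2))  # two dimming zones
print(local_dimming(scene, zone=1))  # per-pixel light, OLED-like
```

With one global backlight every black pixel glows at 1 nit next to the 1000-nit highlight; with two zones only the highlight's neighbor does, and with per-pixel control (the OLED case) the black pixels stay fully black.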

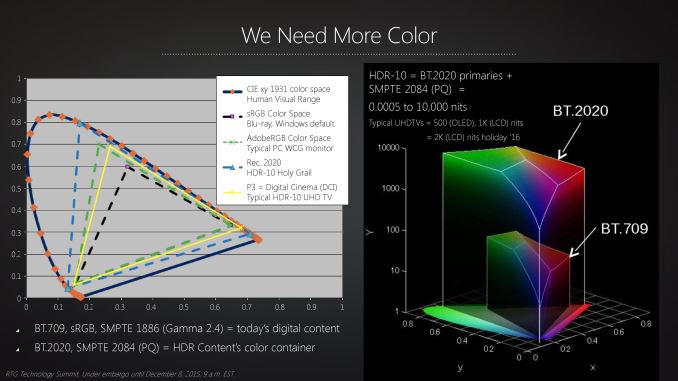

As far as RTG’s own technology is concerned, even once HDR displays are on the market, RTG needs to make changes to support them. The traditional sRGB color space is not suitable for true HDR: it just isn’t large enough to correctly represent colors at the extreme ends of the brightness curve. As a result, RTG is laying the groundwork for improved support of larger color spaces. The company already supports AdobeRGB for professional graphics work; however, the long-term goal is to support the BT.2020 color space, which is the space the consumer electronics industry has settled upon for HDR content. BT.2020 is what 4K Blu-rays will be mastered in, and in time it is likely that other content will follow.
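
To put rough numbers on "larger color space", the sketch below compares the triangles spanned by each standard's red, green, and blue primaries in the CIE 1931 xy chromaticity diagram. The primaries are the published values for sRGB, AdobeRGB, and BT.2020; the helper names are illustrative. Treat the ratios as a coarse proxy only: the xy diagram is not perceptually uniform, and the comparison says nothing about the brightness axis that HDR also extends.

```python
# CIE 1931 xy chromaticities of each standard's red, green and blue primaries.
PRIMARIES = {
    "sRGB":     [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)],
    "AdobeRGB": [(0.640, 0.330), (0.210, 0.710), (0.150, 0.060)],
    "BT.2020":  [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)],
}


def triangle_area(points):
    """Shoelace formula: area of the gamut triangle in the xy plane."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


def relative_gamut(name, reference="sRGB"):
    """Gamut triangle area of `name` relative to `reference`."""
    return triangle_area(PRIMARIES[name]) / triangle_area(PRIMARIES[reference])


for name in PRIMARIES:
    print(f"{name:9s} {relative_gamut(name):.2f}x sRGB")
# sRGB      1.00x sRGB
# AdobeRGB  1.35x sRGB
# BT.2020   1.89x sRGB
```

By this measure BT.2020 covers nearly twice the chromaticity area of sRGB, with AdobeRGB sitting in between.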

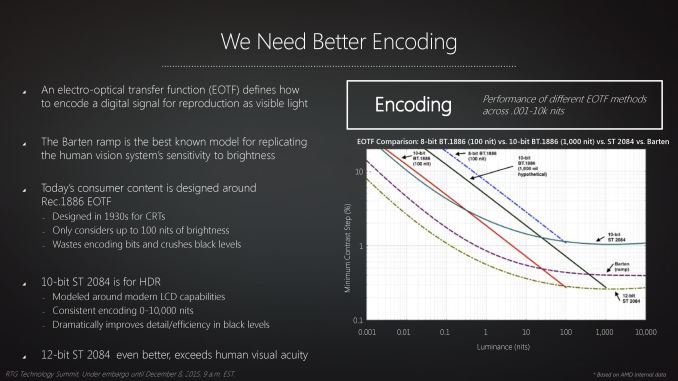

Going hand-in-hand with the BT.2020 color space is how it’s represented. While it’s technically possible to display the color space using today’s 8 bit per color (24bpp) encoding schemes, the larger color space would expose and exacerbate the banding that results from having only 256 shades of any given primary color to work with. As a result, BT.2020 also calls for increasing the bit depth of images from 8bpc to a minimum of 10bpc (30bpp), which increases the number of shades of each primary color to 1024. Only by both enlarging the color space and increasing the precision within that space can the display rendering chain accurately describe an HDR image and ultimately feed it to an HDR-capable display.
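
The arithmetic behind these bit depths is simple enough to spell out. This small sketch (the helper name `depth_stats` is illustrative) computes shades per primary, bits per pixel, total colors, and the size of one code step as a percentage of full range. When the same number of steps is stretched across the wider BT.2020 gamut, each step becomes a bigger jump in color, which is where visible banding comes from.

```python
def depth_stats(bits_per_channel, channels=3):
    """Shades per primary, bits per pixel, total colors, step size in %."""
    shades = 2 ** bits_per_channel       # levels available per primary color
    bpp = bits_per_channel * channels    # bits per RGB pixel
    colors = shades ** channels          # distinct RGB combinations
    step = 100 / (shades - 1)            # one code step as % of full range
    return shades, bpp, colors, step


for bpc in (8, 10):
    shades, bpp, colors, step = depth_stats(bpc)
    print(f"{bpc}bpc: {shades} shades, {bpp}bpp, {colors:,} colors, step {step:.3f}%")
# 8bpc: 256 shades, 24bpp, 16,777,216 colors, step 0.392%
# 10bpc: 1024 shades, 30bpp, 1,073,741,824 colors, step 0.098%
```

Going from 8bpc to 10bpc makes each step roughly four times finer, which is what lets the wider gamut be covered without coarser gradients.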

The good news here for RTG (and the PC industry as a whole) is that 10 bit per color rendering is already done on the PC, albeit traditionally limited to professional-grade applications and video cards. BT.2020 and the overall goals of the consumer electronics industry mean that 10 bit per color and BT.2020’s specific curve will need to become consumer features, and this is where RTG’s HDR presentation lays out their capabilities and goals.

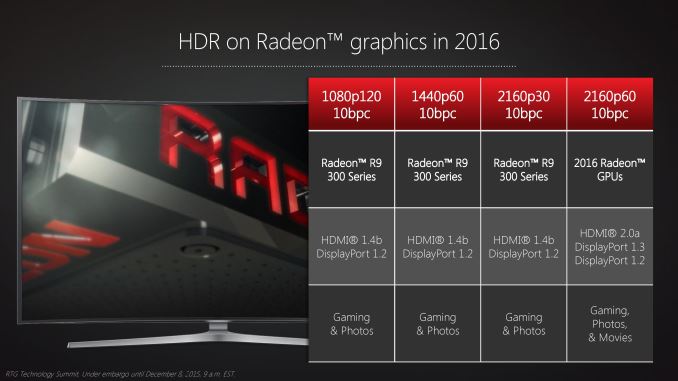

The Radeon 300 series is already capable of 10bpc rendering, so even these older cards, if paired with a suitable monitor, will be capable of driving HDR content over HDMI 1.4b and DisplayPort 1.2. The higher bit depth does require more bandwidth, however, and as a result it’s not possible to combine HDR, 4K, and 60Hz on any 300 series card due to the limitations of DisplayPort 1.2 (though lower resolutions with higher refresh rates are possible). The 2016 Radeon GPUs, on the other hand, will have DisplayPort 1.3, which should allow them to support HDR at 4K@60Hz.

And indeed it’s likely with the 2016 GPUs that HDR will really take off. Although RTG can support all of the basic technical aspects of HDR on the Radeon 300 series, there’s one thing none of these cards will ever be able to do: directly support the HDCP 2.2 standard, which is being required for all 4K/HDR content. As a result, only the 2016 GPUs will be able to play back HDR movies, while all earlier GPUs will be limited to gaming and photos.

Meanwhile, RTG is also working on the software side of matters in conjunction with Microsoft. At this time it’s possible for RTG to render to HDR, but only in an exclusive fullscreen context that bypasses the OS’s color management. Windows itself isn’t capable of HDR rendering, and this is something that Microsoft and its partners are coming together to solve. It will ultimately be solved, but it may take some time. Not unlike high-DPI rendering, edge cases such as properly handling mixed HDR/SDR content are an important consideration that must be accounted for. And for that matter, the OS needs a means of reliably telling (or being told) when it has HDR content.
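
One of the mixed HDR/SDR edge cases can be illustrated numerically. Suppose, as an assumption, that a compositor works in absolute luminance (nits). SDR content then has to be decoded from sRGB and assigned some reference white level before it can sit next to HDR content. The 80-nit default below is the sRGB reference display luminance, used purely for illustration; it is not a claim about what Microsoft or RTG actually chose, and the function names are hypothetical.

```python
def srgb_to_linear(c):
    """sRGB decoding: 0..1 code value -> 0..1 linear light."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def sdr_to_nits(c, sdr_white_nits=80.0):
    """Place an SDR value on an absolute nits scale (hypothetical policy).

    sdr_white_nits is an assumed knob: how bright SDR "white", such as a
    desktop window background, should be next to HDR content.
    """
    return srgb_to_linear(c) * sdr_white_nits


# A white SDR window next to HDR video on a 1000-nit panel:
naive = sdr_to_nits(1.0, sdr_white_nits=1000.0)  # SDR stretched to panel peak
mapped = sdr_to_nits(1.0)                         # SDR white kept at reference level
print(f"naive: {naive:.0f} nits, mapped: {mapped:.0f} nits")
```

Naively stretching SDR to the panel's peak would turn an ordinary white document into a 1000-nit light source, which is exactly the kind of case the OS has to handle deliberately.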

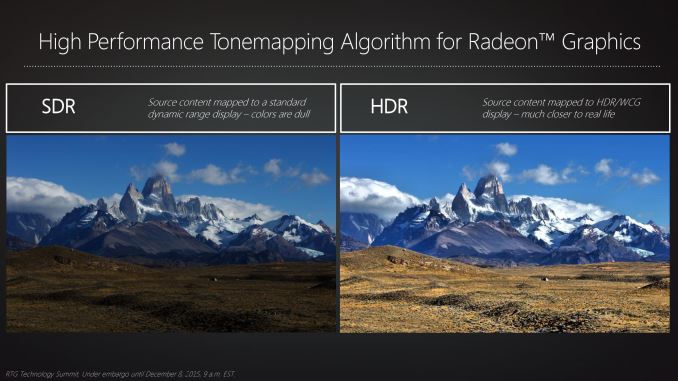

Finally, at the other end of the spectrum are software developers. While the movie/TV industries have already laid the groundwork for HDR production, software and game developers will be in a period of catching up, as most current engines implicitly assume that they’ll be rendering for an SDR display. This means at a minimum reducing or removing the step in the rendering process where a scene is tonemapped for an SDR display, but there will also be some cases where rendering algorithms need to be changed entirely to make the best use of the larger color space and greater dynamic range. RTG for their part seems eager to work with developers through their dev relations program to give them the tools they need (such as HDR tonemapping) to do just that.
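
The SDR tonemapping step that engines will need to rethink can be sketched with the classic Reinhard operator, chosen here only because it is simple and widely known; the article does not say which operator any engine or RTG uses. The function names and the 100-nit and 1000-nit parameters are illustrative assumptions, and the HDR path's hard clip is a simplification (real pipelines usually roll off highlights near the panel's peak).

```python
def reinhard(x):
    """Classic Reinhard operator: maps [0, inf) into [0, 1)."""
    return x / (1.0 + x)


def to_sdr(scene_nits, mid_nits=100.0):
    """SDR path: squeeze all scene luminance into a 0..1 display signal.

    mid_nits (assumed) is the scene luminance that lands at 0.5 output.
    """
    return reinhard(scene_nits / mid_nits)


def to_hdr(scene_nits, display_peak_nits=1000.0):
    """HDR path: keep absolute luminance, clipping only above the panel peak."""
    return min(scene_nits, display_peak_nits)


for nits in (50.0, 100.0, 800.0, 4000.0):
    print(f"{nits:6.0f} nits -> SDR signal {to_sdr(nits):.3f}, HDR {to_hdr(nits):.0f} nits")
```

In the SDR path a 800-nit highlight and a 4000-nit highlight end up only a few percent apart at the top of the signal range, while the HDR path preserves the 800-nit value outright; that compression step is what engines will need to reduce or remove.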

Wrapping things up, RTG expects that we’ll start to see HDR-capable displays in the mass market in 2016. At this point in time there is some doubt over whether this will include PC displays right away, in which case there may be a transition period of “EDR” displays that offer 10bpc and better contrast ratios than traditional LCDs, but can’t hit the 1000+ nit brightness that HDR really asks for. Regardless of the display situation, though, AMD expects to be rolling out their formal support for HDR in 2016.

99 Comments


Azix - Wednesday, December 9, 2015

GCN is just a name. It does not mean there aren't major improvements. Nvidia constantly changing their architecture name is not necessarily an indication it's better; it's usually the same improvements over an older arch. Also, it seems AMD is ahead of the game with GCN and nvidia is playing catch-up, having to cut certain things out to keep up.

BurntMyBacon - Thursday, December 10, 2015

@Azix: "Also it seems AMD is ahead of the game with GCN and nvidia is playing catchup, having to cut certain things out to keep up."

I wouldn't go that far. nVidia is simply focused differently than ATi at the moment. ATi gave up compute performance for gaming back in the 6xxx series and brought compute performance back with the 7xxx series. Given AMD's HSA initiative, I doubt we'll see them make that sacrifice again.

nVidia on the other hand decided to do something similar going from Fermi to little Kepler (6xx series). They brought compute back to some extent for big Kepler (high end 7xx series), but dropped it again for Maxwell. This approach does make some sense as the majority of the market at the moment doesn't really care about DP compute. The ones that do can get a Titan, a Quadro, or if the pattern holds, a somewhat more DP capable consumer grade card once every other generation. On the other hand, dropping the DP compute hardware allows them to more significantly increase gaming performance at similar power levels on the same process. In a sense, this means the gamer isn't paying as much for hardware that is of little or no use to gaming.

At the end of the day, it is nVidia that seems to be ahead in the here and now, though not by as much as some suggest. It is possible that ATi is ahead when it comes to DX12 gaming and their cards may age more gracefully than Maxwell, but that remains to be seen. More important will be where the tech lays out when DX12 games are common. Even then, I don't think that Maxwell will have as much of a problem as some fear, given that DX11 will still be an option.

Yorgos - Thursday, December 10, 2015

What are the improvements that Maxwell offers? That it can run the binary blobs from crapworks better?

e.g. lightning effects http://oi64.tinypic.com/2mn2ds3.jpg

or efficiency

http://www.overclock.net/t/1497172/did-you-know-th...

or 3.5 GB vram,

or obsolete architecture for the current generation of games(which has already started)

Unless you have money to waste, there is no other option in the GPU segment.

A lot of GTX 700 series owners say so (amongst others).

Michael Bay - Thursday, December 10, 2015

I love how you conveniently forgot to mention not turning your case into a working oven, and then grasped sadly for muh 3.5 GBs as if it matters in the real world with overwhelming 1080p everywhere. And then there is the icing on the cake in the form of a hopeless wail on "current generation of games(which has already started)". You sorry amdheads really don't see irony even if it hits you in the face.

slickr - Friday, December 11, 2015

Nvidia still pretty much uses the same techniques/technology as they had in their old 600 series graphics. Just because they've named the architecture differently doesn't mean it is. AMD's major architectural change will be in 2016 when they move to 14nm FinFET, as will Nvidia's when they move to 16nm FinFET.

AMD already has design elements like HBM in their current GCN Fiji architecture that they can more easily implement for their new GPUs, which are supposed to start arriving in late Q2 2016.

Zarniw00p - Tuesday, December 8, 2015

FS-HDMI for game consoles, DP 1.3 for Apple who would like to update their Mac Pros with DP 1.3 and 5k displays.

Jtaylor1986 - Tuesday, December 8, 2015

This is a bit strange since almost the entire presentation depends on action by the display manufacturer industry and industry standard groups. I look forward to them announcing what they are doing in 2016, not what they are trying to get the rest of the industry to do in 2016.

bug77 - Tuesday, December 8, 2015

Well, most of the stuff a video card does depends on action by the display manufacturer...

BurntMyBacon - Thursday, December 10, 2015

@Jtaylor1986: "This is a bit strange since almost the entire presentation depends on action by the display manufacturer industry and industry standard groups."

That's how it works when you want a non-proprietary solution that allows your competitors to use it as well. ATi doesn't want to give away their tech any more than nVidia does. However, they also realize that Intel is the dominant graphics manufacturer in the market. If they can get Intel on board with a technology, then there is some assurance that the money invested isn't wasted.

@Jtaylor1986: "I look forward to them announcing what they are doing in 2016, not what they are trying to get the rest of the industry to do in 2016."

Point of interest: It is hard to get competitors to work together. They don't just come together and do it. Getting Acer, LG, and Samsung to standardize on a tech (particularly a proprietary one) means that there has already been significant effort invested in the tech. Also, getting Mstar, Novatek, and Realtek to make compatible hardware is similar to getting nVidia and ATi or AMD and Intel to do the same. IBM forced Intel's hand. Microsoft's DirectX standard forced ATi and nVidia (and 3DFX for that matter) to work in a compatible way.

Beyond that, it isn't as if ATi is doing nothing. It is simply that their work requires cooperation from all parties involved, cooperation that they've apparently obtained. This is what is required when you think about the experience beyond just your hardware. nVidia does similar with their Tesla partners, The Way it's Meant to be Played program, and CUDA support. Sure, they built the chip themselves with gsync, but they still had to get support from monitor manufacturers to get the chip into a monitor.

TelstarTOS - Thursday, December 10, 2015

and the display industry has been EXTREMELY slow at producing BIG, quality displays, not even counting 120 and 144hz. I don't see them embracing HDR (10-12 bit panels) anytime soon :( My monitor bought last year is going to last a while, until they catch up with the video card makers.