The AMD Radeon RX 480 Preview: Polaris Makes Its Mainstream Mark

by Ryan Smith on June 29, 2016 9:00 AM EST

AMD's Path to Polaris

With the benefit of hindsight, I think the 28nm generation started out better for AMD than it ended. The first Graphics Core Next card, the Radeon HD 7970, had the advantage of launching more than a quarter before NVIDIA’s competing Kepler cards. And while AMD trailed in power efficiency from the start, for a time at least they could compete for the top spot in the market with products such as the Radeon HD 7970 GHz Edition, before NVIDIA rolled out their largest Kepler GPUs.

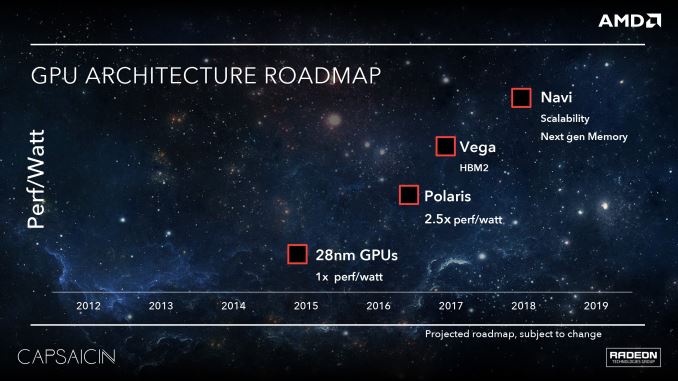

However, I think where things really went off the rails for AMD was mid-cycle, in 2014, when NVIDIA unveiled the Maxwell architecture. Kepler was good, but Maxwell was great; NVIDIA further improved their architectural and energy efficiency (at times immensely so), and this put AMD on the back foot for the rest of the generation. AMD had performant parts from the bottom R7 360 right up to the top Fury X, but they were never in a position to match Maxwell’s efficiency, a quality that resonated with both reviewers and gamers.

The lessons of the 28nm generation were not lost on AMD. Graphics Core Next was a solid architecture and opened the door to AMD in a number of ways, but the Radeon brand does not exist in a vacuum, and it needs to compete with the more successful NVIDIA. At the same time AMD is nothing if not scrappy, and they can surprise us when we least expect it. But sometimes the only way to learn is the hard way, and I think the latter half of the 28nm generation was the Radeon Technologies Group learning the hard way.

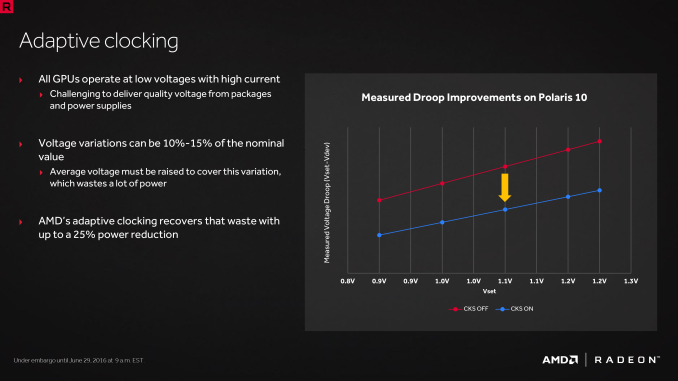

So what lessons did AMD learn for Polaris? First and foremost, power efficiency matters. It matters quite a lot in fact. Every vendor – be it AMD, Intel, or NVIDIA – will play up their strongest attributes. But power efficiency caught on with consumers, more so than any other “feature” in the 28nm generation. Though its importance in the desktop market is forum argument fodder to this day, power efficiency and overall performance are two sides of the same coin. There are practical limits for how much power can be dissipated in different card form factors, so the greater the efficiency, the greater the performance at a specific form factor. This aspect is even more important in the notebook space, where GPUs are at the mercy of limited cooling and there is a hard ceiling on heat dissipation.
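To put rough numbers on that relationship, here is a minimal sketch that treats sustained performance as efficiency multiplied by the power a form factor can dissipate. The function name, the efficiency values, and the 35 W notebook budget are hypothetical figures chosen for illustration, not measurements of any real GPU; only the 150 W desktop figure echoes a number discussed around the RX 480.

```python
def performance_at_budget(perf_per_watt: float, power_budget_w: float) -> float:
    """Sustained performance (arbitrary units) for a card running at its power limit.

    Simplifying assumption: efficiency is constant across the power range,
    which real GPUs only approximate.
    """
    if perf_per_watt < 0 or power_budget_w < 0:
        raise ValueError("inputs must be non-negative")
    return perf_per_watt * power_budget_w


# Hypothetical power budgets per form factor, in watts (illustrative only).
FORM_FACTOR_BUDGETS_W = {
    "thin notebook": 35.0,
    "desktop card": 150.0,
}

# Hypothetical efficiency figures in performance units per watt.
BASELINE_EFFICIENCY = 1.0
IMPROVED_EFFICIENCY = 1.5

if __name__ == "__main__":
    for name, budget_w in FORM_FACTOR_BUDGETS_W.items():
        old = performance_at_budget(BASELINE_EFFICIENCY, budget_w)
        new = performance_at_budget(IMPROVED_EFFICIENCY, budget_w)
        print(f"{name}: {old:.1f} -> {new:.1f} units at {budget_w:.0f} W")
```

Under these assumptions the power ceiling is fixed by the form factor, so any efficiency gain shows up directly as extra performance, and the notebook case has no other lever to pull.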

As a result, a significant amount of the work on Polaris has gone into improving power efficiency. To be blunt, AMD has to be able to better compete with NVIDIA here, but AMD’s position is more nuanced than simply beating NVIDIA. AMD largely missed the boat on notebooks in the last generation, and they don’t want to repeat their mistakes. At the same time, starting now with an energy efficient architecture means that when they scale up and scale out with bigger and faster chips, they have a solid base to work from, and ultimately, more chances to achieve better performance.

The other lesson AMD learned for Polaris is that market share matters. This is not an end-user problem – AMD’s market share doesn’t change the performance or value of their cards – but we can’t talk about what led to Polaris without addressing it. AMD’s share of the consumer GPU market is about as low as it ever has been; this translates not only into weaker sales, but it undermines AMD’s position as a whole. Consumers are more likely to buy what’s safe, and OEMs aren’t much different, never mind the psychological aspects of the bandwagon effect.

Consequently, with Polaris AMD made the decision to start with the mainstream market and then work up from there, a significant departure from the traditional top-down GPU rollouts. This means developing chips like Polaris 10 and 11 first, targeting mainstream desktops and laptops, and letting the larger enthusiast class GPUs follow. The potential payoff for AMD here is that this is the opposite of what NVIDIA has done, and that means AMD gets to go after the high volume mainstream market first while NVIDIA builds down. Should everything go according to plan, then this gives AMD the opportunity to grow out their market share, and ultimately shore up their business.

As we dive into Polaris, its abilities, and its performance, it’s these two lessons we’ll see crop up time and time again, as they were among the guiding principles in Polaris’s design. AMD has taken the lessons of the 28nm generation to heart and has crafted a plan to move forward with the FinFET generation, charting a different, and hopefully more successful, path.

Though with this talk of energy efficiency and mainstream GPUs, let’s be clear here: this isn’t AMD’s small die strategy reborn. AMD has already announced their Vega architecture, which will follow up on the work done by Polaris. Though not explicitly stated by AMD, it has been strongly hinted at that these are the higher performance chips that in past generations we’d see AMD launch with first, offering performance features such as HBM2. AMD will have to live with the fact that for the near future they have no shot at the performance crown – and the halo effect that comes with it – but with any luck, it will put AMD in a better position to strike at the high-end market once Vega’s time does come.

449 Comments


fanofanand - Thursday, June 30, 2016 - link

Amazing that you have managed to purchase a card no one has for sale. Not Newegg, not Amazon, go ahead and tell us all where you were able to procure an unreleased card?

catavalon21 - Wednesday, July 13, 2016 - link

"2 RX 480s probably beat a single 1070 while consuming twice the power"

Actually, very close to double the power in a particular gaming selection (87% more was their assessment)

http://www.hardocp.com/article/2016/07/11/amd_rade...

slickr - Thursday, June 30, 2016 - link

The only people who care and cared about power consumption, whether it's 150W or 170W, were/are the paid and bought for shills who write for Nvidia and who commit fraud and scam against their readers, they are committing crimes.

Nvidia won because of the shills.

D. Lister - Thursday, June 30, 2016 - link

You get it man, you've got it all figured out! Yours is the level of comprehension that lesser men like myself strive for all their lives, and still fall short by miles. To hell with trivialities like hardware components, a mind like yours should be tackling questions like "the meaning of life" and whatnot.

fanofanand - Thursday, June 30, 2016 - link

A+ on the hyperbole (is your name an homage to Dean Lister, the MMA fighter?) but his original statement isn't entirely wrong. Few desktop users will notice the difference between 150 watts and 170 watts. I don't know about the tinfoil hat stuff, but the original premise isn't completely invalid.

D. Lister - Friday, July 1, 2016 - link

"is your name an homage to Dean Lister, the MMA fighter?"

Actually it is an homage to "David 'Dave' Lister", from the "Red Dwarf" books. :)

"Few desktop users will notice the difference between 150 watts and 170 watts. I don't know about the tinfoil hat stuff, but the original premise isn't completely invalid."

The thing is, no one terribly cares for the 480 slightly overshooting the TDP. The thing they're complaining about is AMD's choice to stick on a 6-pin connector on the ref card, instead of an 8-pin one, which forces the card to compensate by over-drawing from its slot. Nonetheless, I was really more concerned about the tinfoil hat stuff.

andrewaggb - Thursday, June 30, 2016 - link

Nah, power consumption is a big deal to some people. I agree that 150W vs 170W is nothing, but pulling an extra 100W or more for the same performance isn't great. It's heat that ends up in the room with you, it's bigger heatsinks or faster fans etc.

In laptops power consumption is everything. AMD needs to get it under control, simple as that. I think the RX 480 and 470 will be fine, but if they are already pulling 1070 numbers what will Vega pull? It'll probably use HBM2 and get power savings there, but what about the GPU itself?

jayfang - Thursday, June 30, 2016 - link

Nice, something that makes sense to replace my HD 6850 with.

Intel / AMD please step up - still rocking i5-2500K

drgigolo - Thursday, June 30, 2016 - link

Where is the GTX 1080 review? Or 1070 for that matter.

cocochanel - Thursday, June 30, 2016 - link

You didn't read all the comments. It's coming out in a few days.