Intel to Launch Next-Gen Sapphire Rapids Xeon with High Bandwidth Memory

by Dr. Ian Cutress on June 28, 2021 12:00 PM EST

Posted in: CPUs, Intel, Xeon, HBM, Xeon Scalable, Sapphire Rapids, SPR-HBM

As part of today’s International Supercomputing 2021 (ISC) announcements, Intel is announcing that it will launch a version of its upcoming Sapphire Rapids (SPR) Xeon Scalable processor with high-bandwidth memory (HBM). This version, SPR-HBM, will come later in 2022, after the main launch of Sapphire Rapids, and Intel has stated that it will be part of its general availability offering to all, rather than a vendor-specific implementation.

Hitting a Memory Bandwidth Limit

As core counts have increased in the server processor space, the designers of these processors have to ensure that there is enough data for the cores to enable peak performance. This means developing large, fast caches per core so enough data is close by at high speed, building high-bandwidth interconnects inside the processor to shuttle data around, and providing enough main memory bandwidth from data stores located off the processor.

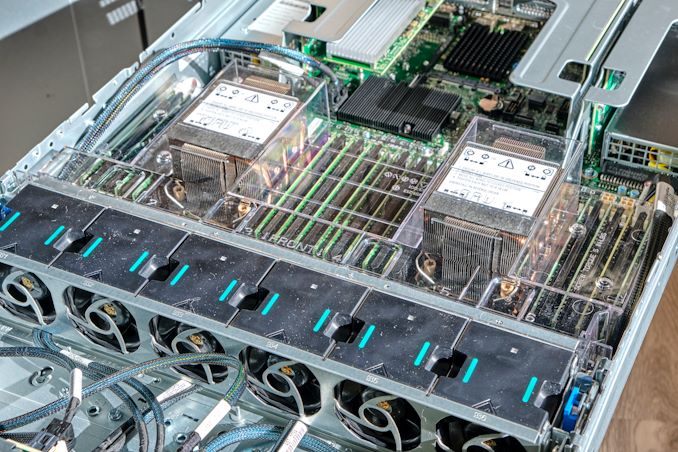

Our Ice Lake Xeon Review system with 32 DDR4-3200 Slots

Here at AnandTech, we have been asking processor vendors about this last point, main memory bandwidth, for a while. There is only so much bandwidth that can be achieved by continually adding DDR4 (and soon DDR5) memory channels. Current eight-channel DDR4-3200 memory designs, for example, have a theoretical maximum of 204.8 gigabytes per second, which pales in comparison to GPUs, which quote 1000 gigabytes per second or more. GPUs are able to achieve higher bandwidths because they use GDDR, soldered onto the board, which allows for tighter tolerances at the expense of a modular design. Very few main processors for servers have ever had main memory integrated at such a level.
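As a rough illustration of where that 204.8 gigabytes per second figure comes from, the sketch below multiplies channel count, transfer rate, and bus width. It assumes a 64-bit (8-byte) data bus per DDR4 channel and decimal gigabytes; the function name is our own and purely illustrative.

```python
def ddr_peak_bandwidth_gbs(channels: int, transfers_mt_s: int,
                           bus_width_bits: int = 64) -> float:
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes).

    channels       -- number of independent memory channels
    transfers_mt_s -- transfer rate in megatransfers per second (3200 for DDR4-3200)
    bus_width_bits -- data width per channel; 64 bits is assumed here
    """
    bytes_per_transfer = bus_width_bits / 8
    # MT/s * bytes per transfer gives MB/s per channel; divide by 1000 for GB/s.
    return channels * transfers_mt_s * bytes_per_transfer / 1000


if __name__ == "__main__":
    per_channel = ddr_peak_bandwidth_gbs(1, 3200)
    eight_channel = ddr_peak_bandwidth_gbs(8, 3200)
    print(f"One DDR4-3200 channel:    {per_channel:.1f} GB/s")
    print(f"Eight DDR4-3200 channels: {eight_channel:.1f} GB/s")
```

This is a theoretical ceiling only. Sustained bandwidth in real workloads will be lower, and the gap to a 1000 GB/s GPU cannot be closed simply by adding a few more channels.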

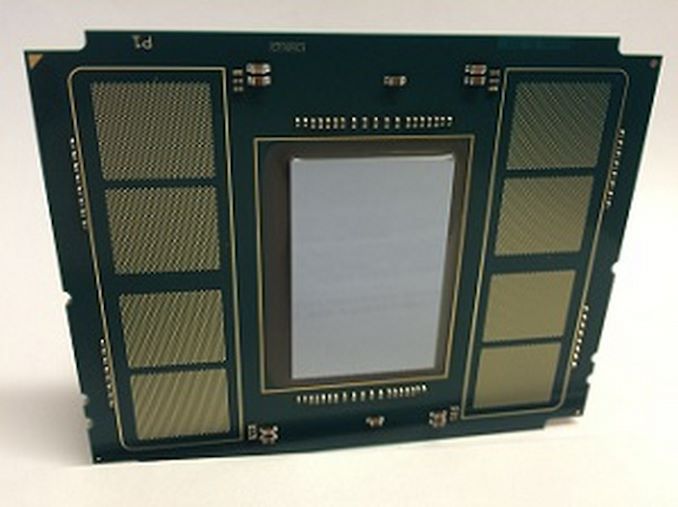

Intel Xeon Phi 'KNL' with 8 MCDRAM Pads in 2015

One of the processors that used to be built with integrated memory was Intel’s Xeon Phi, a product discontinued a couple of years ago. The basis of the Xeon Phi design was lots of vector compute, controlled by up to 72 basic cores, but paired with 8-16 GB of on-board ‘MCDRAM’, connected via 4-8 on-board chiplets in the package. This allowed for 400 gigabytes per second of cache or addressable memory, paired with 384 GB of main memory at 102 gigabytes per second. However, since Xeon Phi was discontinued, no main server processor (at least for x86) announced to the public has had this sort of configuration.

New Sapphire Rapids with High-Bandwidth Memory

Until next year, that is. Intel’s new Sapphire Rapids Xeon Scalable with High-Bandwidth Memory (SPR-HBM) will be coming to market. Rather than hide it away for use with one particular hyperscaler, Intel has stated to AnandTech that they are committed to making HBM-enabled Sapphire Rapids available to all enterprise customers and server vendors as well. These versions will come out after the main Sapphire Rapids launch, and will allow for some interesting configurations. We understand that this means SPR-HBM will be available in a socketed configuration.

Intel states that SPR-HBM can be used with standard DDR5, offering an additional tier in memory caching. We understand that the HBM can be addressed directly or left as an automatic cache, which would be very similar to how Intel's Xeon Phi processors could access their high-bandwidth memory.

Alternatively, SPR-HBM can work without any DDR5 at all. This reduces the physical footprint of the processor, allowing for a denser design in compute-dense servers that do not rely much on memory capacity (these customers were already asking for quad-channel design optimizations anyway).

The amount of memory was not disclosed, nor the bandwidth or the technology. At the very least, we expect the equivalent of up to 8-Hi stacks of HBM2e, up to 16 GB each, with 1-4 stacks onboard leading to as much as 64 GB of HBM. At a theoretical top speed of 460 GB/s per stack, four stacks would mean 1840 GB/s of bandwidth, although we can imagine something more akin to 1 TB/s for yield and power, which would still give a sizeable uplift. Depending on demand, Intel may offer different memory configurations across different processor options.
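To make the arithmetic behind that estimate explicit, here is a minimal sketch that scales capacity and bandwidth with the number of stacks. The per-stack numbers are our own expectations as stated above, not anything Intel has disclosed, and the names in the code are hypothetical.

```python
from typing import Tuple

# Speculative figures from the estimate above; Intel has not disclosed specifications.
STACK_CAPACITY_GB = 16          # one 8-Hi HBM2e stack (assumed)
STACK_BANDWIDTH_GBS = 460       # theoretical peak per stack (assumed)
DDR4_8CH_BANDWIDTH_GBS = 204.8  # eight-channel DDR4-3200, for comparison


def hbm_totals(stacks: int) -> Tuple[int, int]:
    """Return (capacity in GB, peak bandwidth in GB/s) for a given stack count."""
    return stacks * STACK_CAPACITY_GB, stacks * STACK_BANDWIDTH_GBS


if __name__ == "__main__":
    for stacks in range(1, 5):
        capacity, bandwidth = hbm_totals(stacks)
        ratio = bandwidth / DDR4_8CH_BANDWIDTH_GBS
        print(f"{stacks} stack(s): {capacity} GB, {bandwidth} GB/s "
              f"({ratio:.1f}x eight-channel DDR4-3200)")
```

Even a single stack under these assumptions would more than double the theoretical bandwidth of today's eight-channel DDR4-3200 platforms, which is why a derated configuration would still be attractive.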

One of the key elements to consider here is that on-package memory will have an associated power cost within the package. So for every watt that the HBM requires inside the package, that is one less watt for computational performance on the CPU cores. That being said, server processors often do not push the boundaries on peak frequencies, instead opting for a more efficient power/frequency point and scaling the cores. However, HBM in this regard is a tradeoff: if HBM were to take 10-20 W per stack, four stacks would easily eat into the power budget for the processor (and that power budget has to be managed with additional controllers and power delivery, adding complexity and cost).
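The tradeoff can be sketched with some simple arithmetic. The 10-20 W per stack range is the hypothetical one from the paragraph above, and the 300 W package budget below is purely an assumption for illustration, since Intel has not stated a power figure for SPR-HBM.

```python
from typing import Tuple


def hbm_power_range_w(stacks: int, low_w_per_stack: float = 10.0,
                      high_w_per_stack: float = 20.0) -> Tuple[float, float]:
    """Return the (low, high) power draw in watts for a number of HBM stacks."""
    return stacks * low_w_per_stack, stacks * high_w_per_stack


def remaining_for_cores_w(package_budget_w: float, hbm_power_w: float) -> float:
    """Power left for cores and everything else once HBM has taken its share."""
    return package_budget_w - hbm_power_w


if __name__ == "__main__":
    HYPOTHETICAL_PACKAGE_BUDGET_W = 300.0  # assumption, not an Intel specification
    low, high = hbm_power_range_w(4)
    print(f"Four stacks: {low:.0f}-{high:.0f} W")
    for hbm_w in (low, high):
        left = remaining_for_cores_w(HYPOTHETICAL_PACKAGE_BUDGET_W, hbm_w)
        share = hbm_w / HYPOTHETICAL_PACKAGE_BUDGET_W
        print(f"HBM at {hbm_w:.0f} W leaves {left:.0f} W ({share:.0%} of budget on memory)")
```

Under these assumed numbers, four stacks would take somewhere between 40 W and 80 W out of the package, which is a meaningful slice of any plausible server power budget.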

One thing that was confusing about Intel’s presentation, and I asked about this but my question was ignored during the virtual briefing, is that Intel keeps putting out different package images of Sapphire Rapids. In the briefing deck for this announcement, there were already two variants. The one above (which actually looks like an elongated Xe-HP package that someone put a logo on) and this one (which is more square and has different notches):

There have been some unconfirmed leaks online showcasing SPR in a third different package, making it all confusing.

Sapphire Rapids: What We Know

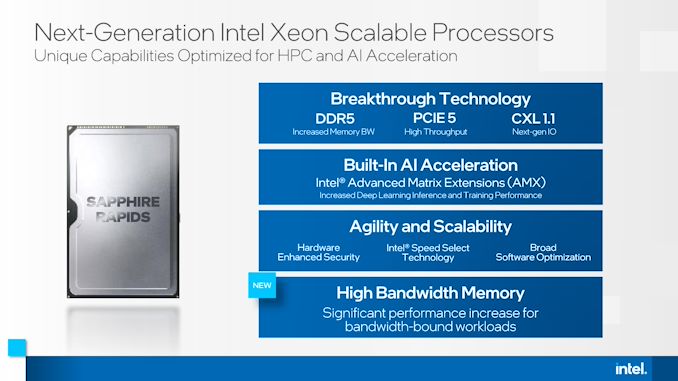

Intel has been teasing Sapphire Rapids for almost two years as the successor to its Ice Lake Xeon Scalable family of processors. Built on 10nm Enhanced SuperFin, SPR will be Intel’s first processors to use DDR5 memory, have PCIe 5 connectivity, and support CXL 1.1 for next-generation connections. Also on memory, Intel has stated that Sapphire Rapids will support Crow Pass, the next generation of Intel Optane memory.

For core technology, Intel (re)confirmed that Sapphire Rapids will be using Golden Cove cores as part of its design. Golden Cove will be central to Intel's Alder Lake consumer processor later this year; however, Intel was quick to point out that Sapphire Rapids will offer a ‘server-optimized’ configuration of the core. Intel has done this in the past with both its Skylake Xeon and Ice Lake Xeon processors, wherein the server variant often has a different L2/L3 cache structure than the consumer processors, as well as a different interconnect (ring vs mesh, mesh on servers).

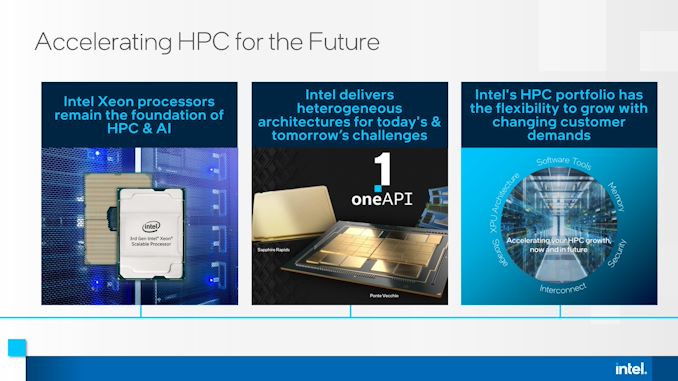

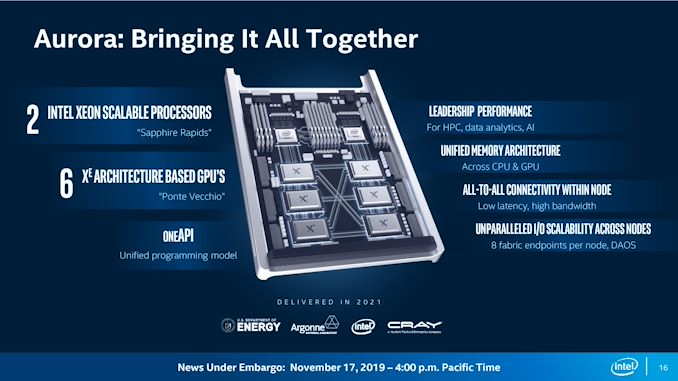

Sapphire Rapids will be the core processor at the heart of the Aurora supercomputer at Argonne National Laboratory, where two SPR processors will be paired with six Intel Ponte Vecchio accelerators, which will also be new to the market. Today's announcement confirms that Aurora will be using the SPR-HBM version of Sapphire Rapids.

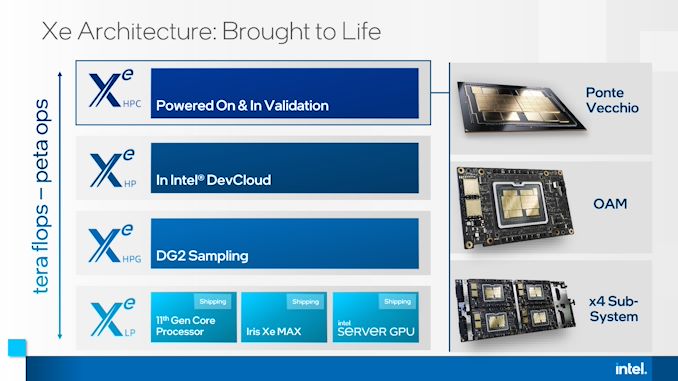

As part of this announcement today, Intel also stated that Ponte Vecchio will be widely available, in OAM and 4x dense form factors:

Sapphire Rapids will also be the first Intel processors to support Advanced Matrix Extensions (AMX), which we understand will help accelerate matrix-heavy workflows such as machine learning, alongside BFloat16 support. This will be paired with updates to Intel’s DL Boost software and oneAPI support. As Intel processors are still very popular for machine learning, especially training, Intel wants to capitalize on any future growth in this market with Sapphire Rapids. SPR will also be updated with Intel’s latest hardware-based security.

It is highly anticipated that Sapphire Rapids will also be Intel’s first multi compute-die Xeon where the silicon is designed to be integrated (we’re not counting Cascade Lake-AP Hybrids). There are unconfirmed leaks to suggest this is the case, but nothing that Intel has yet verified.

The Aurora supercomputer is expected to be delivered by the end of 2021, and is anticipated to be the first official deployment of not only Sapphire Rapids, but also SPR-HBM. We expect a full launch of the platform sometime in the first half of 2022, with general availability soon after. The exact launch of SPR-HBM beyond HPC workloads is unknown; however, given those time frames, Q4 2022 seems fairly reasonable, depending on how aggressively Intel wants to attack the launch in light of any competition from other x86 vendors or Arm vendors. Even with SPR-HBM being offered to everyone, Intel may decide to prioritize key HPC customers over general availability.


149 Comments

Silver5urfer - Sunday, July 4, 2021 - link

What is this wall of text lol.

Let me tell one thing. Do you think AMD doesn't know what is happening in the industry ? Esp when Lisa Su showcased 5900X with V-Cache out in public..Intel doesn't have any damn hardware that showcases they beat AMD let that sink in first.

Next is, TSMC 3nm is not going to be released in 2022. What are you smoking man ? Samsung is facing issues with GAAFET 3nm node, just to think what is this TSMC 3nm we do not know yet, and how much it varies with 5nm we do not know. No high powered silicon has been made on TSMC 5nm yet, and jump to 3nm ?

Intel is just securing orders, I believe those are Intel Xe first then CPUs, Intel to date since decades never made their processors on TSMC or any other foundries, making them work without issues and ability to beat AMD who are crushing Intel since 3 years in DC market it's not a simple hey we pay BIG checks and we won the damn game lol. Do you think it's that easy eh.

Sapphire Rapids HEDT is coming in 2022 which is on 10nm+ this 3nm magic aint coming. By that time Zen 4 AM5 is going to crush Intel, ADL is already dead with 3D V-Cache Zen, proof is EVGA making X570 in 2021 Q3, almost end of cycle. But they are making it because they know AMD is going to whack Intel hard. Also let Zen 3 come, HEDT Sapphire Rapids, will definitely lose to that lol. As for Zen 4 based DC processor, it's 80+ cores, Intel is not going to beat them any time soon.

Qasar - Monday, July 5, 2021 - link

" What is this wall of text lol. " come one silver5urfer, you know exactly what this is, the usual anti amd BS rant from the jian, what else is it ?

Oxford Guy - Monday, July 5, 2021 - link

The console scam certainly isn’t helping AMD right now.

Go ahead, AMD... compete against PC gamers after shoveling out garbage releases like Frontier and Radeon VI, after peddling ‘Polaris forever’ so Nvidia could, with the help of AMD’s pals MS and Sony, artificially (via nearly a monopoly) inflate ‘PC’ GPU prices (and, of course, half-hearted shovelware garbage like Radeon VI, 590, Frontier, and Vega). Vega had identical IPC versus Fury X.

AMD deserves a good rant, regardless of how lackluster Intel has been (very). Of course, we plebeians get the best quasi-monopoly trash the first world can conjure. More duopolies/oligarchies in tech, please.

mode_13h - Monday, July 5, 2021 - link

> The console scam

What is this "scam"? And please post some evidence.

What's funny about your post is that this thread isn't even about AMD GPUs. It sounds like you're still sore from the pre-RDNA era, but we should be looking ahead to RDNA3 -- not behind, to Vega and Polaris. By the time Sapphire Rapids reaches the public, that's the generation AMD should be on.

> More duopolies/oligarchies in tech, please.

Odd timing for such a comment, when ARM is ascendant and RISC-V is finally starting to grow some legs.

Qasar - Monday, July 5, 2021 - link

mode 13, he wont post any proof, as there is none, it's his own opinion.

almost could add most of his posts as anti amd bs as well.

Oxford Guy - Monday, July 5, 2021 - link

An ad hom and a false claim in addition to it.

I have written extensively wherein the irrefutable elementary logic of the situation has been explained.

AMD, Sony, Microsoft, Nvidia, and Nintendo all compete against the PC gaming platform. That’s your first clue.

As for opinions, you can try to use that word pejoratively but opinions are essential for understanding the world in a rational factual manner. Moreover, opinion has nothing to do with the fact that the logic and facts I have presented have never, not once, been rebutted substantively. Posting emojis and ad homs doesn’t cut it.

mode_13h - Wednesday, July 7, 2021 - link

> AMD, Sony, Microsoft, Nvidia, and Nintendo all compete against the PC gaming platform.

It's not like that. It's like AMD and Nvidia are pioneering the high-end, cutting-edge stuff on the PC, where there's demand for the highest performance, newest features, and people willing to pay for it. Since most gamers are more budget-constrained, consoles bring most of those benefits to a more accessible price point that's also more consumer-friendly.

I wonder if maybe you don't understand the concept of market tiers.

Oxford Guy - Monday, July 5, 2021 - link

I have posted extensively about the scam and the nature of it.

It’s abundantly clear from the responses, which have included ad homs, emojis, and false claims (including the hilarious claim that it’s easier to create the Google empire from scratch than to create a 3rd GPU player that’s actually serious about selling quality hardware to PC gamers versus spending more time developing machinations to keep prices artificially high), with zero substantive rebuttal, that I’m unlikely to find much discourse on the subject.

Many very large monied interests want to keep the scam going via favorable posts (e.g. stonewalling) and refusal to challenge status quo thinking is also par for the course. Samsung was caught for hiring astroturfers. Judging by the refusal to engage me substantively on this subject that doesn’t surprise. Even without a paycheck being involved, though, it is clearly emotionally satisfying to critique without doing any good-faith work.

Instead of the emojis, ad homs, and lazy false claims, it would be amazing to see someone put forth the effort to explain, in detail, how the existence of the ‘consoles’ as they are today and have been since the start of the Jaguar-based era is not more harmful to the PC gamer’s wallet and more parasitic on the industry as a whole. Explain how having four artificial walled software gardens is in the interest of the consumer, particularly in light of how everything has changed to run the same hardware platform. Explain how having wafers allocated to ‘consoles’ isn’t a way to undermine the competitiveness of the PC gaming platform, keeping prices high by creating demand artificially for the ‘consoles’. Explain how having just one and a half companies producing PC gaming GPUs (since AMD has been doing a half-hearted effort for years and sandbagging doesn’t disprove my point at all) has led to a healthy PC gaming platform rather than one that falls prey to mining, wafer unavailability, lack of competitiveness, etc. None of this is difficult to comprehend and there is plenty more to point out.

Suffice to say that Linux with Open GL, Vulkan, and the ITX form factor in particular make consoles put parasitic redundancy. It’s so obvious that apparently no one can be bothered to see it.

Oxford Guy - Monday, July 5, 2021 - link

‘Pure’, not ‘put’. Typing on this phone, with its aggressive auto-defect, is enough to put me in a home.

Oxford Guy - Monday, July 5, 2021 - link

And don’t forget all the years of selling GPUs designed for mining as if they’re PC gaming parts. It just goes on and on... all these too-obvious examples.